#Facial Machine


Text

hydramaster pro e, lymphatic hand demo

1 note


Text

Beauty Machine | Medspaline

Expert Skincare to Give You that Radiant Glow!

Introducing the ultimate game-changer in the world of beauty! Say hello to the beauty machine by Medspaline, your secret weapon for flawless skin.

This innovative device combines cutting-edge technology with expert skincare to give you that radiant glow you've always dreamed of.

Get ready to elevate your skincare routine to the next level with Medspaline's beauty machine.

0 notes

Text

I can't remember all the times I tried to tell myself

to hold on to these moments as they pass

- counting crows, a long december

detail below the cut (and wolverine angsty musings in the tags lol)

#poolverine#the song lyrics are supposed to reflect upon logan's pensive facial expression#I imagine even after he's settled in with wade and feels like that dumpy lil apartment is a sort of home#he would still be waiting for the other shoe to drop#because he's never been able to hold onto happiness for long and he sees no reason this time would be the exception#and when wade is asleep he isnt there to distract him from these thoughts#so he just. drifts off into a melancholic daze#vaguely wondering what will happen to wade#to althea#to puppins#to laura#what bizarre universal machinations are already at play to tear what joy he has been able to scrape together#and quietly ruminates on what he'll do next once it is all inevitably ripped away again#which is why he secretly prefers when wade is awake and Constantly Talking#(though he would never openly admit it)#because then he can just listen and block the weight of knowing deep down it can't last because it never does#I wonder how long it would take him to accept that he couldnt lose wade if he tried#deadpool and wolverine#deadclaws#wolverine#deadpool#logan howlett#old man yaoi#wade wilson#deadpool & wolverine#anyway just always thinking about them on some level#also I'm reading that “psychology of wolverine” book and it just. damn. he never gets to hold on to anything or anyone for very long#especially not worstie

279 notes


Text

This is so cute 😭❤️

Is that why he sometimes let us win by putting random cards in the wrong cups.. because he doesn't like it when we get upset 😭

#he also mentioned how interesting our facial expression gets and how he could see our smile from the reflection of the claw machine#no matter what we're doing.. his eyes are always on us 😭#love and deepspace#zayne love and deepspace#love and deepspace zayne#♡

1K notes


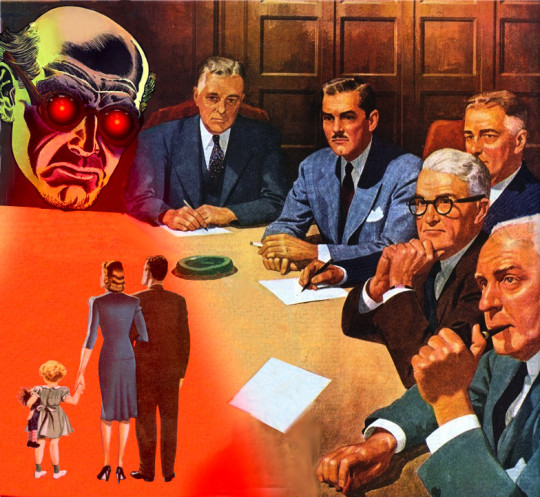

Photo

(via Vending machine error reveals secret face image database of college students | Ars Technica)

Canada-based University of Waterloo is racing to remove M&M-branded smart vending machines from campus after outraged students discovered the machines were covertly collecting facial-recognition data without their consent.

The scandal started when a student using the alias SquidKid47 posted an image on Reddit showing a campus vending machine error message, "Invenda.Vending.FacialRecognitionApp.exe," displayed after the machine failed to launch a facial recognition application that nobody expected to be part of the process of using a vending machine.

"Hey, so why do the stupid M&M machines have facial recognition?" SquidKid47 pondered.

The Reddit post sparked an investigation from a fourth-year student named River Stanley, who was writing for a university publication called MathNEWS.

Stanley sounded the alarm after consulting Invenda sales brochures that promised "the machines are capable of sending estimated ages and genders" of every person who used the machines without ever requesting consent.

This frustrated Stanley, who discovered that Canada's privacy commissioner had years ago investigated a shopping mall operator called Cadillac Fairview after discovering some of the malls' informational kiosks were secretly "using facial recognition software on unsuspecting patrons."

Only because of that official investigation did Canadians learn that "over 5 million nonconsenting Canadians" were scanned into Cadillac Fairview's database, Stanley reported. Where Cadillac Fairview was ultimately forced to delete the entire database, Stanley wrote that consequences for collecting similarly sensitive facial recognition data without consent for Invenda clients like Mars remain unclear.

Stanley's report ended with a call for students to demand that the university "bar facial recognition vending machines from campus."

what the motherfuck

#m&m vending machine#secret face image database#college students#massive invasion of privacy#tech#collecting facial-recognition data without consent

474 notes


Text

the thangs

#bendy is so fun to draw facial expressions for my freako#batim#bendy and the ink machine#batdr#bendy and the dark revival#bendy the dancing demon#ink bendy#ink demon#sammy lawrence#boris the wolf#tom boris#thomas connor#alice angel

161 notes


Text

Gurls gonna melt his hand off if she keeps holding on to it

Also uhh I don’t ship Alice x Bendy btw, I just think this audio fits them

#Imagine pretending to be a couple in public because not being straight wasn’t the norm in the 1930s 😭#they literally hate each other/hj#but yay new animatic for the year#also I needed an excuse to practice drawing my Alice Angel design#my art#art#drawing#digital art#jai art#batim#bendy#bendy the dancing demon#alice angel#animatic#audio is from Chowder but I bet you knew that already#had some fun with the facial expressions#bendy and the ink machine

94 notes


Text

Hypothetical AI election disinformation risks vs real AI harms

I'm on tour with my new novel The Bezzle! Catch me TONIGHT (Feb 27) in Portland at Powell's. Then, onto Phoenix (Changing Hands, Feb 29), Tucson (Mar 9-12), and more!

You can barely turn around these days without encountering a think-piece warning of the impending risk of AI disinformation in the coming elections. But a recent episode of the This Machine Kills podcast reminds us that these are hypothetical risks, and there is no shortage of real AI harms:

https://soundcloud.com/thismachinekillspod/311-selling-pickaxes-for-the-ai-gold-rush

The algorithmic decision-making systems that increasingly run the back-ends to our lives are really, truly very bad at doing their jobs, and worse, these systems constitute a form of "empiricism-washing": if the computer says it's true, it must be true. There's no such thing as racist math, you SJW snowflake!

https://slate.com/news-and-politics/2019/02/aoc-algorithms-racist-bias.html

Nearly 1,000 British postmasters were wrongly convicted of fraud by Horizon, the faulty AI fraud-hunting system that Fujitsu provided to the Royal Mail. They had their lives ruined by this faulty AI, many went to prison, and at least four of the AI's victims killed themselves:

https://en.wikipedia.org/wiki/British_Post_Office_scandal

Tenants across America have seen their rents skyrocket thanks to Realpage's landlord price-fixing algorithm, which deployed the time-honored defense: "It's not a crime if we commit it with an app":

https://www.propublica.org/article/doj-backs-tenants-price-fixing-case-big-landlords-real-estate-tech

Housing, you'll recall, is pretty foundational in the human hierarchy of needs. Losing your home – or being forced to choose between paying rent or buying groceries or gas for your car or clothes for your kid – is a non-hypothetical, widespread, urgent problem that can be traced straight to AI.

Then there's predictive policing: cities across America and the world have bought systems that purport to tell the cops where to look for crime. Of course, these systems are trained on policing data from forces that are seeking to correct racial bias in their practices by using an algorithm to create "fairness." You feed this algorithm a data-set of where the police had detected crime in previous years, and it predicts where you'll find crime in the years to come.

But you only find crime where you look for it. If the cops only ever stop-and-frisk Black and brown kids, or pull over Black and brown drivers, then every knife, baggie or gun they find in someone's trunk or pockets will be found in a Black or brown person's trunk or pocket. A predictive policing algorithm will naively ingest this data and confidently assert that future crimes can be foiled by looking for more Black and brown people and searching them and pulling them over.
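The feedback loop described above can be sketched in a few lines. This is a toy model, not any vendor's actual system: the two areas, the starting patrol split, the identical hit rate, and the proportional-allocation rule are all assumptions made for illustration.

```python
# Toy model of a "predictive policing" feedback loop.
# Every number and name here is hypothetical, chosen only for illustration.

def predict_patrols(detections, total_searches):
    """Allocate next year's searches in proportion to last year's detections."""
    total = sum(detections.values())
    return {area: total_searches * found / total
            for area, found in detections.items()}


def simulate(initial_searches, hit_rate, years):
    """Run the loop: search, record what was found, re-allocate, repeat."""
    searches = dict(initial_searches)
    total_searches = sum(searches.values())
    history = []
    for _ in range(years):
        # Contraband is only ever found where someone looks for it.
        detections = {area: n * hit_rate[area] for area, n in searches.items()}
        history.append(detections)
        searches = predict_patrols(detections, total_searches)
    return searches, history


# Both areas have the SAME underlying rate of finding something per search...
HIT_RATE = {"A": 0.05, "B": 0.05}
# ...but policing starts out concentrated in area A.
START = {"A": 900, "B": 100}

final_searches, history = simulate(START, HIT_RATE, years=10)
print(final_searches)
```

Under these assumptions the model never "learns" that the two areas are identical: the data it ingests is a record of where the searches happened, so the initial skew is handed back as a prediction year after year.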

Obviously, this is bad for Black and brown people in low-income neighborhoods, whose baseline risk of an encounter with a cop turning violent or even lethal is already elevated. But it's also bad for affluent people in affluent neighborhoods – because they are underpoliced as a result of these algorithmic biases. For example, domestic abuse that occurs in fully detached single-family homes is systematically underrepresented in crime data, because the majority of domestic abuse calls originate with neighbors who can hear the abuse take place through a shared wall.

But the majority of algorithmic harms are inflicted on poor, racialized and/or working class people. Even if you escape a predictive policing algorithm, a facial recognition algorithm may wrongly accuse you of a crime, and even if you were far away from the site of the crime, the cops will still arrest you, because computers don't lie:

https://www.cbsnews.com/sacramento/news/texas-macys-sunglass-hut-facial-recognition-software-wrongful-arrest-sacramento-alibi/

Trying to get a low-waged service job? Be prepared for endless, nonsensical AI "personality tests" that make Scientology look like NASA:

https://futurism.com/mandatory-ai-hiring-tests

Service workers' schedules are at the mercy of shift-allocation algorithms that assign them hours that ensure that they fall just short of qualifying for health and other benefits. These algorithms push workers into "clopening" – where you close the store after midnight and then open it again the next morning before 5AM. And if you try to unionize, another algorithm – that spies on you and your fellow workers' social media activity – targets you for reprisals and your store for closure.

If you're driving an Amazon delivery van, an algorithm watches your eyeballs and tells your boss that you're a bad driver if it doesn't like what it sees. If you're working in an Amazon warehouse, an algorithm decides if you've taken too many pee-breaks and automatically dings you:

https://pluralistic.net/2022/04/17/revenge-of-the-chickenized-reverse-centaurs/

If this disgusts you and you're hoping to use your ballot to elect lawmakers who will take up your cause, an algorithm stands in your way again. "AI" tools for purging voter rolls are especially harmful to racialized people – for example, they assume that two "Juan Gomez"es with a shared birthday in two different states must be the same person and remove one or both from the voter rolls:

https://www.cbsnews.com/news/eligible-voters-swept-up-conservative-activists-purge-voter-rolls/
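To see why matching on name and birthday alone misfires, here is a minimal sketch. It is not the code of any real purge tool: the `Voter` record, the `ssn4` field, and the sample roll are hypothetical, and the "stricter" rule is only one example of an additional check.

```python
# Illustrative sketch of naive cross-state duplicate matching.
# The record layout and the sample data are hypothetical.
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Voter:
    first: str
    last: str
    dob: str    # ISO date string
    state: str
    ssn4: str   # hypothetical extra identifier (last four SSN digits)


def naive_duplicates(voters):
    """Flag groups that share first name, last name and birth date across states."""
    groups = defaultdict(list)
    for v in voters:
        groups[(v.first.lower(), v.last.lower(), v.dob)].append(v)
    return [g for g in groups.values() if len({v.state for v in g}) > 1]


def stricter_duplicates(voters):
    """Keep a naive match only if the extra identifier also agrees."""
    return [g for g in naive_duplicates(voters)
            if len({v.ssn4 for v in g}) == 1]


ROLL = [
    Voter("Juan", "Gomez", "1980-03-14", "TX", "1234"),
    Voter("Juan", "Gomez", "1980-03-14", "GA", "9876"),  # a different person
    Voter("Ann", "Lee", "1975-07-02", "TX", "5555"),
]

print(len(naive_duplicates(ROLL)))     # groups flagged by name + birthday
print(len(stricter_duplicates(ROLL)))  # groups still flagged after the extra check
```

With this sample roll the naive rule flags the two Juan Gomezes as one person, while the extra identifier shows they are not. Common names paired with a shared birthday are exactly where a name-and-date rule produces false matches.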

Hoping to get a solid education, the sort that will keep you out of AI-supervised, precarious, low-waged work? Sorry, kiddo: the ed-tech system is riddled with algorithms. There's the grifty "remote invigilation" industry that watches you take tests via webcam and accuses you of cheating if your facial expressions fail its high-tech phrenology standards:

https://pluralistic.net/2022/02/16/unauthorized-paper/#cheating-anticheat

All of these are non-hypothetical, real risks from AI. The AI industry has proven itself incredibly adept at deflecting interest from real harms to hypothetical ones, like the "risk" that the spicy autocomplete will become conscious and take over the world in order to convert us all to paperclips:

https://pluralistic.net/2023/11/27/10-types-of-people/#taking-up-a-lot-of-space

Whenever you hear AI bosses talking about how seriously they're taking a hypothetical risk, that's the moment when you should check in on whether they're doing anything about all these longstanding, real risks. And even as AI bosses promise to fight hypothetical election disinformation, they continue to downplay or ignore the non-hypothetical, here-and-now harms of AI.

There's something unseemly – and even perverse – about worrying so much about AI and election disinformation. It plays into the narrative that kicked off in earnest in 2016, that the reason the electorate votes for manifestly unqualified candidates who run on a platform of bald-faced lies is that they are gullible and easily led astray.

But there's another explanation: the reason people accept conspiratorial accounts of how our institutions are run is that the institutions that are supposed to be defending us are corrupt and captured by actual conspiracies:

https://memex.craphound.com/2019/09/21/republic-of-lies-the-rise-of-conspiratorial-thinking-and-the-actual-conspiracies-that-fuel-it/

The party line on conspiratorial accounts is that these institutions are good, actually. Think of the rebuttal offered to anti-vaxxers who claimed that pharma giants were run by murderous sociopath billionaires who were in league with their regulators to kill us for a buck: "no, I think you'll find pharma companies are great and superbly regulated":

https://pluralistic.net/2023/09/05/not-that-naomi/#if-the-naomi-be-klein-youre-doing-just-fine

Institutions are profoundly important to a high-tech society. No one is capable of assessing all the life-or-death choices we make every day, from whether to trust the firmware in your car's anti-lock brakes, the alloys used in the structural members of your home, or the food-safety standards for the meal you're about to eat. We must rely on well-regulated experts to make these calls for us, and when the institutions fail us, we are thrown into a state of epistemological chaos. We must make decisions about whether to trust these technological systems, but we can't make informed choices because the one thing we're sure of is that our institutions aren't trustworthy.

Ironically, the long list of AI harms that we live with every day is the most important contributor to disinformation campaigns. It's these harms that provide the evidence for belief in conspiratorial accounts of the world, because each one is proof that the system can't be trusted. The election disinformation discourse focuses on the lies told – and not why those lies are credible.

That's because the subtext of election disinformation concerns is usually that the electorate is credulous, fools waiting to be suckered in. By refusing to contemplate the institutional failures that sit upstream of conspiracism, we can smugly locate the blame with the peddlers of lies and assume the mantle of paternalistic protectors of the easily gulled electorate.

But the group of people who are demonstrably being tricked by AI is the people who buy the horrifically flawed AI-based algorithmic systems and put them into use despite their manifest failures.

As I've written many times, "we're nowhere near a place where bots can steal your job, but we're certainly at the point where your boss can be suckered into firing you and replacing you with a bot that fails at doing your job":

https://pluralistic.net/2024/01/15/passive-income-brainworms/#four-hour-work-week

The most visible victims of AI disinformation are the people who are putting AI in charge of the life-chances of millions of the rest of us. Tackle that AI disinformation and its harms, and we'll make conspiratorial claims about our institutions being corrupt far less credible.

If you'd like an essay-formatted version of this post to read or share, here's a link to it on pluralistic.net, my surveillance-free, ad-free, tracker-free blog:

https://pluralistic.net/2024/02/27/ai-conspiracies/#epistemological-collapse

Image: Cryteria (modified) https://commons.wikimedia.org/wiki/File:HAL9000.svg

CC BY 3.0 https://creativecommons.org/licenses/by/3.0/deed.en

#pluralistic#ai#disinformation#algorithmic bias#elections#election disinformation#conspiratorialism#paternalism#this machine kills#Horizon#the rents too damned high#weaponized shelter#predictive policing#fr#facial recognition#labor#union busting#union avoidance#standardized testing#hiring#employment#remote invigilation

146 notes


Text

hey small messy manga boys

#jjk#jjk art#satoru gojo#choso#mahito#manga panel redraws that I might tattoo one day. if I can figure out how to cross hatch lines properly w the machine#ive been studying non lightsource dependant highlights on black clothing and hair recently bcus clothes is SO HARD for me#I think this worked out OKAY. i only used the panels as a ref for the outlines and specific facial features#so going on nothing but mind for the highlights its not bad like its def getting better#anyways. my small manga boys#my art

27 notes


Text

BOSS FIGHT!

#bmc#be more chill#jeremy heere#michael mell#jeremy bmc#michael bmc#boyf riends#hi hello this is actually propaganda for my headcanons so study up guys. consider yourself conscripted#jeremy with severe acne and an unfortunately placed mole#who wears the same sweater literally every day and its starting to wear white and get holes#(btw wovenvessel if youre seeing this yes i stole your sweater drawing technique im sorry dflksdjlfksf)#michael who has embarrassing patchy teen facial hair and also sews all his patches on really shittily with a machine#michael has one of those epic transparent controllers and they have fought over it so much#that they have slowly developed an incredibly convoluted system to determine who gets it each time#also slow stoner michael and anxious stoner jeremy#he has a bad time like half the time but he does it anyway#my art#my posts#art#posts#jeremy#michael#bmc michael#wuuujer#bmc jeremy#oh also gave jeremy my irl sunflower converse bc i do what i want#(actually so my cosplay feels more accurate shhhhh)

315 notes


Text

Local idiot draws Big-Chested Angel and Go-Pro Blue Edition for the first time. It’s either a blessing or a curse.

#i personally think it’s a curse for three reasons#1 - i gave gabriel some kind of a face#2 - i’ve started calling him both ‘gabby’ and ‘big-chested angel’#3 - ‘THAT’S RIGHT MACHINE I ALSO LACK DEPTH PERCEPTION’ is a vocal stim now#bro looks like half his face loaded in. he’s got the facial structure at least#ears? none that I can see#eyes? only one to the left of his head#nose? not that i can see#mouth? only sometimes#ultrakill#gabriel ultrakill#ultrakill gabriel#v1 ultrakill#tag this as you will :3#zeisty’s goofs#y'all my memory recall's so bad i need to refresh myself on this game before i draw more stuff surrounding it

58 notes


Note

missed ur writing sm glad ur better

Thank you!! It has honestly been miserable the past month, this has been the worst and most terrifying medical experience of my life.

In almost 26 years I've never been allergic to anything, not even pollen/seasonal allergies, and all of a sudden I'm swollen all over gagging for breath, my fingers and feet and lips turned purple, I couldn't walk, my face looked like a pufferfish... then it came back again and again so I had to keep going back to the hospital. Apparently I had a "prolonged viral reaction" (I didn't even know that was a thing), so even after the worst was over, I was covered in hives for weeks.

Whatever the allergen was, I pray to God I never encounter it again.

#it really started registering how bad it was when i got to the hospital#bc 1) they took me back without any wait which is like... not a good sign#and then 2) the first doctor walks into the room and takes one look at me and goes '...hang on ill be right back'#and in like 15 seconds four people come rushing into the room carrying tubes and bags and hauling some big machine#with really serious facial expressions/tone of voice#that was my '...oh this is like for real' moment 😅😅#but hey now that i know what mortal fear is like i can probably write it better#positive mindset!

52 notes


Text

Elevate your skincare routine with the powerful benefits of a hydrogen oxygen facial machine from Medspaline!

Say goodbye to dull and lackluster skin and hello to a radiant, hydrated complexion. Trust us, your skin will thank you.

0 notes

Text

MOFF TARKIN AND GLOSSU RABBAN SQUARES

🟨🟦🟥🟥◼️🟥🟦🟦🟥🟥

◼️🟦🟥🟦◼️◼️🟦🟦◼️◼️

SQUARE MILITARY RANK INSIGNIA MILITARY SALESMEN FROM OUTSIDE THIS CLUSTER OF GALAXIES

NO I DON'T WANT TO BUY ANY MORE STORMTROOPER ARMOR. I HAVE ENOUGH, THANK YOU.

#◼️#🟦#🟥#🟨#moff tarkin#glossu rabban#star wars#films#books#media#language#earth#english language#alderaan#giedi#giedi prime#github#commercially available facial recognition software#reel to reel tape machines#janitors#laboratory coats#white laboratory coats#white stormtrooper armor#stormtroopers#terran time traveling criminals#this planet the planet earth was originally named the planet terra#time travel#cube#minecraft#space probes

43 notes


Text

Absolutely living for these pictures of Michael rehearsing for Nye at the National Theatre.

#michael sheen#welsh seduction machine#nye the play#national theatre#the first one is by far my favorite#the two fingers. the facial expression...#no way he doesn't know exactly what he is doing#oh Michael#i hope i'll be able to see him in the theatre for real someday#can't wait to watch#amazing

80 notes


Note

27 and Archie for the spotify 100 thing,,, also hope you're feeling ok kara beloved!!

I've blown apart my life for you

And bodies hit the floor for you

And break me, shake me, devastate me

Come here, baby, tell me that I'm wrong

#the bomb by florence + the machine#hihi HI LAURIE ty for the request :3 !#i Kinda just did it based around the album cover but shhh Its ok#also . I'm doing ok i just have a headache :((#my art#archie collymore#dnd#dungeons and dragons#orignal character#spotify wrapped 2025#thedndgoblinwholivesinyourwalls#Archie does not like 2 be angry because it reminds him of someone who was super angry in his life (his dad)#also i forgot his facial hair Damn im too lazy 2 go fix it tho BAHAHAGAH#ITS BEEN SO LONG SINCE IVE DRAWN HIM OK!!!!!#camp oleander

11 notes
