#politicians like us

Text

Source

Occasionally, the media does its job with headlines like this

#rip these politicians to shreds like they deserve#politics#us politics#government#the left#progressive#twitter post#current events#news#hunger#food insecurity

1K notes


Text

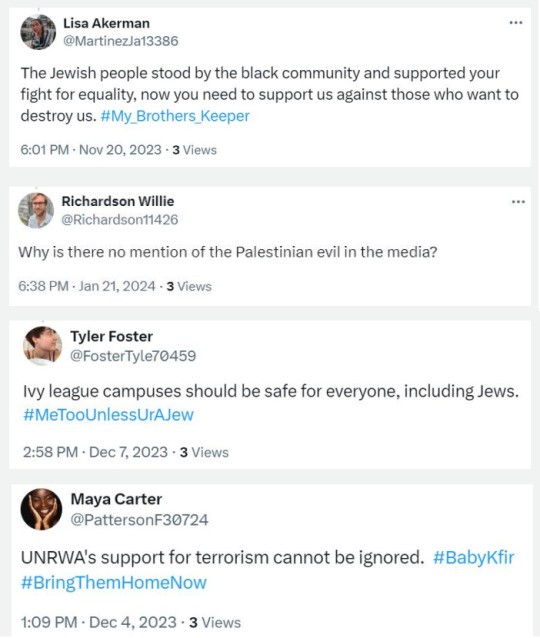

An Israeli influence campaign is using hundreds of online avatars and fake social media accounts to attack Democratic lawmakers critical of Israel and promote news articles disapproving of the United Nations Palestine refugee agency (Unrwa), according to a report by the Israeli online watchdog, Fake Reporter.

According to the report, the targeted campaign has used more than 600 avatars, sending out 58,000 tweets and social media posts to circulate articles published by The Guardian, CNN and Wall Street Journal, among other major news outlets that amplify Israel’s position on the war.

The campaign relies on three major social networks, UnFold Magazine, Non-Agenda and The Moral Alliance, which were created prior to the war in Gaza. But the Hamas-led 7 October attack on southern Israel sent the accounts into round-the-clock posting.

The sites, according to Fake Reporter, are geared specifically to a “progressive audience”, publishing content on climate change, AI regulation, and human rights, in addition to the war in Gaza. They have more than 43,000 followers across Facebook, Instagram and Twitter.

The avatars promoting the content talk up their identity with lines like, “As a middle-aged African American woman” and use hashtags like #FaithJourney and #AfricanAmericanSpirituality.

Some examples from the report:

And continuing,

The avatars were all created on the same day and their profiles were written with the same formula, subbing out just a few words. The declared gender and ethnicity of the avatars don’t match the profile photos, which have been taken from websites selling headshots.

The campaign works to amplify news stories published by major media outlets. First, the fake news sites share the reports. Then, the avatars share them across social media, including on the official accounts of Democratic lawmakers.

Avatars also shared social media posts showing video clips of what appeared to be pro-Palestinian protestors calling for "massacres to be normalised" and calling for the US to "go to hell", contrasting that with peaceful protests of pro-Israel protestors.

In other cases, avatars simply reshared widely published video clips of US lawmakers questioning the heads of Ivy League schools about antisemitism on campus.

[...] According to the report, around 85 percent of all the US politicians targeted by the campaign were Democrats, and 90 percent of them were African Americans.

Ritchie Torres, a black Democratic Congressman with generally pro-Israel views, garnered the most social media engagement from the avatars. Other lawmakers targeted included Cori Bush; Lucy McBath; House minority leader Hakeem Jeffries; and Democratic Senator Raphael Warnock.

Israeli news site Haaretz reported in January that the Israeli government had launched an online influence campaign to respond to pro-Palestinian content and reports about Hamas.

It’s unclear whether the campaign revealed by Fake Reporter is part of that initiative.

. . . continues at MEE (20 Mar 2024)

#free palestine#palestine#israel#gaza#hasbara#bot network#if you're active on twitter you've likely encountered these israeli bot networks already#but note how targeted it is#''around 85 percent of all the US politicians targeted by the campaign were Democrats#and 90 percent of them were African Americans''

1K notes


Text

A male South Australian politician is going to introduce a bill to restrict how women have their abortion today. At present, abortion is legal in South Australia, but any abortion procedure must be approved by two doctors, and must be to protect the health of the pregnant woman; under the proposed changes, women who want an abortion would instead be forced to be induced and put the foetus up for adoption (assuming it survives; there's no detail about what rights this unwanted parasite would have to identify its mother or what responsibilities women would incur for the unwanted parasite's future, e.g. around disclosing health problems). The politician claims the bill is necessary to balance the 'choice' of the mother and the 'rights' of the 'child' (and isn't it interesting how women have 'choices' and foetuses have 'rights;' that, in the discourse, women are referred to as gender-neutral 'people' whereas foetuses are referred to as 'babies').

I don't know about y'all, but I'm just so tired of all the insidious ways that men tell us they hate us: that they can't trust our decisions (what if we regret wanting to terminate a pregnancy?), that they can't trust our intentions (what if we want an abortion for a 'bad' reason, like being pregnant and not wanting to be?), that we can't be objective (we might want rights, but what about our unwanted parasites), and so on.

#and the thing that gets me: men know bodily autonomy is important and that's why they'll never compel someone to donate against their will#they just reserve it for us#anyway fuck this male politician & everyone who thinks like him & anyone who entertains this#radblr

253 notes


Text

De-aged and injured Danny

Danny is found out by his parents. They don't take it well.

Clockwork is very upset about this, because he'd gambled on almost-certain odds of them being chill about it. So now he has to run damage-control before this very unlikely time-line goes even further off the deep end.

Unfortunately, Danny needs to be in the living world, not the Infinite Realms. Which means that Clockwork needs to put Danny somewhere safe. Somewhere where nobody will find him.

And double-unfortunately, the only way to get a place that remotely fits this bill is to contact Lady Gotham.

City-spirits aren't... super-reliable. They're Neverborns who very very rarely consider "humanoid shapes" worth figuring out. So they just kind of... exist. An ectoplasmic presence that's undeniable, but also extremely difficult to have a conversation with.

Thankfully, Lady Gotham is (for all of her... quirks) generally very hero-aligned. Which is why she's the best one to ask for sanctuary for Danny.

Danny, who Clockwork de-aged as a way to "limit his injuries" from being vivisected.

Lady Gotham agrees, but she only has one "safe place" to put him. And her Knight is a little bit too paranoid for her to just dump an injured child in his lair, without causing more trouble than it's worth.

But it's hardly a difficult thing, to arrange a few things, and place Danny in a spot where his injuries will cause her Knight to hurry to his aid.

Such as... in a room filled with medical equipment, right next door to where Joker has just lost a fight with Batman.

Things escalate somewhat when Batman finds him and makes some assumptions about what Joker has been up to. Tempers run a bit high, someone loses a few extra teeth, someone else has to physically drag Bruce off Joker's body before he beats him to death, and the Joker considers the whole thing a grand old laugh (he has no idea what's going on, but it sure pissed off Batty, and that's always a treat).

Of course, the Batfam has to actually investigate the scene, evacuate Danny, give Danny medical aid, and then also ask Danny about what happened.

Danny wakes up and is very confused about a lot of things.

He's no longer being vivisected. Great. Love that part.

He's somewhere he doesn't recognize (the Batcave). Could be good, could be bad. At least the bed is pretty nice?

He's very small. This feels like a personal attack. He might not have gotten a good growth-spurt yet, but taking away what he had is cruel and unusual.

And there's a weirdo in an... armored bat-costume? Who isn't setting off his ghost-sense? What the hell kind of "normal" person wears something like that?

Still, Danny does answer the questions that Batman asks him, because... well, there's a green post-it-note in his pocket that says he shouldn't lie.

So Danny tells Batman about his parents cutting him up "for science". And Batman hears that the Joker somehow managed to hire two mad scientists who (upon the tiniest bit of suggestion from the Joker, who'd definitely seen the similarities between Danny and Jason and thought it would be a "funny prank") had leapt at the opportunity to vivisect their own son.

This is definitely worrying, because from the phrasing, they'd been "wanting to do it for a long time". And considering Danny's slow heartbeat and low body-temperature? They'd been wanting to do it because he was a meta.

So, somewhere out there (the Bats had found no trace of the two) were two deranged lunatics who wanted to cut open metas to "see how they worked".

Batman does the very reasonable thing and actually contacts the rest of the Justice League with their descriptions, just in case they'd managed to leave Gotham before the Bats had tracked them down.

#danny might mention the anti-ecto acts. which would lead them to the GIW which would lead them to Amity Park and to the Fentons.#and would likely stir up a LOT of outrage for a bunch of politicians effectively creating a loophole in a very clear-cut humanitarian law#(the meta-protection acts). and those politicians would probably be very nervous about having gotten caught.#(think about all the cool ecto-tech they could use to make tons and tons of money. as long as ecto-entities don't have any rights)#also also. upon investigating amity park in person? jazz would probably witness against her parents on the spot. no questions asked.#like. she comes home. and danny is gone? her parents are talking about ghost-kidnapping? and now someone official is asking questions?#yes. please. please arrest my parents and tell me where my little brother is. tell me he's safe. tell me he isn't buried somewhere.#jazz is very aware of the risks of her parents ''reacting badly'' to the phantom-reveal. which is why she's been covering for him.#but yes. this is mostly written out as a ''and here is de-aged and traumatized danny in batfam-custody''-setting idea.#bcs involving LadyGotham is fine. but having her TALK to her bats? communicate clearly? make her presence known?#never. she'd refuse on principle. she'd rather stage an arkham-breakout and indirectly murder thousands.#it's her love-language. you wouldn't understand.#laughing#my writing#danny phantom#dc comics#batman#stories

236 notes


Text

You're being targeted by disinformation networks that are vastly more effective than you realize. And they're making you more hateful and depressed.

(This essay was originally by u/walkandtalkk and posted to r/GenZ on Reddit two months ago, and I've crossposted here on Tumblr for convenience because it's relevant and well-written.)

TL;DR: You know that Russia and other governments try to manipulate people online. But you almost certainly don't know just how effectively orchestrated influence networks are using social media platforms to make you -- individually -- angry, depressed, and hateful toward each other. Those networks' goal is simple: to cause Americans and other Westerners -- especially young ones -- to give up on social cohesion and to give up on learning the truth, so that Western countries lack the will to stand up to authoritarians and extremists.

And you probably don't realize how well it's working on you.

This is a long post, but I wrote it because this problem is real, and it's much scarier than you think.

How Russian networks fuel racial and gender wars to make Americans fight one another

In September 2018, a video went viral after being posted by In the Now, a social media news channel. It featured a feminist activist pouring bleach on a male subway passenger for manspreading. It got instant attention, with millions of views and wide social media outrage. Reddit users wrote that it had turned them against feminism.

There was one problem: The video was staged. And In the Now, which publicized it, is a subsidiary of RT, formerly Russia Today, the Kremlin TV channel aimed at foreign, English-speaking audiences.

As an MIT study found in 2019, Russia's online influence networks reached 140 million Americans every month -- the majority of U.S. social media users.

Russia began using troll farms a decade ago to incite gender and racial divisions in the United States

In 2013, Yevgeny Prigozhin, a confidant of Vladimir Putin, founded the Internet Research Agency (the IRA) in St. Petersburg. It was the Russian government's first coordinated facility to disrupt U.S. society and politics through social media.

Here's what Prigozhin had to say about the IRA's efforts to disrupt the 2022 election:

"Gentlemen, we interfered, we interfere and we will interfere. Carefully, precisely, surgically and in our own way, as we know how. During our pinpoint operations, we will remove both kidneys and the liver at once."

In 2014, the IRA and other Russian networks began establishing fake U.S. activist groups on social media. By 2015, hundreds of English-speaking young Russians worked at the IRA. Their assignment was to use those false social-media accounts, especially on Facebook and Twitter -- but also on Reddit, Tumblr, 9gag, and other platforms -- to aggressively spread conspiracy theories and mocking, ad hominem arguments that incite American users.

In 2017, U.S. intelligence found that Blacktivist, a Facebook and Twitter group with more followers than the official Black Lives Matter movement, was operated by Russia. Blacktivist regularly attacked America as racist and urged Black users to reject major candidates. On November 2, 2016, just before the 2016 election, Blacktivist's Twitter urged Black Americans: "Choose peace and vote for Jill Stein. Trust me, it's not a wasted vote."

Russia plays both sides -- on gender, race, and religion

The brilliance of the Russian influence campaign is that it convinces Americans to attack each other, worsening both misandry and misogyny, mutual racial hatred, and extreme antisemitism and Islamophobia. In short, it's not just an effort to boost the right wing; it's an effort to radicalize everybody.

Russia uses its trolling networks to aggressively attack men. According to MIT, in 2019, the most popular Black-oriented Facebook page was the charmingly named "My Baby Daddy Aint Shit." It regularly posts memes attacking Black men and government welfare workers. It serves two purposes: Make poor black women hate men, and goad black men into flame wars.

MIT found that My Baby Daddy is run by a large troll network in Eastern Europe likely financed by Russia.

But Russian influence networks are also aggressively misogynistic and aggressively anti-LGBT.

On January 23, 2017, just after the first Women's March, the New York Times found that the Internet Research Agency began a coordinated attack on the movement. Per the Times:

More than 4,000 miles away, organizations linked to the Russian government had assigned teams to the Women’s March. At desks in bland offices in St. Petersburg, using models derived from advertising and public relations, copywriters were testing out social media messages critical of the Women’s March movement, adopting the personas of fictional Americans.

They posted as Black women critical of white feminism, conservative women who felt excluded, and men who mocked participants as hairy-legged whiners.

But the Russian PR teams realized that one attack worked better than the rest: They accused its co-founder, Arab American Linda Sarsour, of being an antisemite. Over the next 18 months, at least 152 Russian accounts regularly attacked Sarsour. That may not seem like many accounts, but it worked: They drove the Women's March movement into disarray and eventually crippled the organization.

Russia doesn't need a million accounts, or even that many likes or upvotes. It just needs to get enough attention that actual Western users begin amplifying its content.

A former federal prosecutor who investigated the Russian disinformation effort summarized it like this:

It wasn’t exclusively about Trump and Clinton anymore. It was deeper and more sinister and more diffuse in its focus on exploiting divisions within society on any number of different levels.

As the New York Times reported in 2022,

There was a routine: Arriving for a shift, [Russian disinformation] workers would scan news outlets on the ideological fringes, far left and far right, mining for extreme content that they could publish and amplify on the platforms, feeding extreme views into mainstream conversations.

China is joining in with AI

[A couple months ago], the New York Times reported on a new disinformation campaign. "Spamouflage" is an effort by China to divide Americans by combining AI with real images of the United States to exacerbate political and social tensions in the U.S. The goal appears to be to cause Americans to lose hope, by promoting exaggerated stories with fabricated photos about homeless violence and the risk of civil war.

As Ladislav Bittman, a former Czechoslovakian secret police operative, explained about Soviet disinformation, the strategy is not to invent something totally fake. Rather, it is to act like an evil doctor who expertly diagnoses the patient’s vulnerabilities and exploits them, “prolongs his illness and speeds him to an early grave instead of curing him.”

The influence networks are vastly more effective than platforms admit

Russia now runs its most sophisticated online influence efforts through a network called Fabrika. Fabrika's operators have bragged that social media platforms catch only 1% of their fake accounts across YouTube, Twitter, TikTok, Telegram, and other platforms.

But how effective are these efforts? By 2020, Facebook's most popular pages for Christian and Black American content were run by Eastern European troll farms tied to the Kremlin. And Russia doesn't just target angry Boomers on Facebook. Russian trolls are enormously active on Twitter. And, even, on Reddit.

It's not just false facts

The term "disinformation" undersells the problem. Because much of Russia's social media activity is not trying to spread fake news. Instead, the goal is to divide and conquer by making Western audiences depressed and extreme.

Sometimes, through brigading and trolling. Other times, by posting hyper-negative or extremist posts or opinions about the U.S. and the West over and over, until readers assume that's how most people feel. And sometimes, by using trolls to disrupt threads that advance Western unity.

As the RAND think tank explained, the Russian strategy is volume and repetition, from numerous accounts, to overwhelm real social media users and create the appearance that everyone disagrees with, or even hates, them. And it's not just low-quality bots. Per RAND,

Russian propaganda is produced in incredibly large volumes and is broadcast or otherwise distributed via a large number of channels. ... According to a former paid Russian Internet troll, the trolls are on duty 24 hours a day, in 12-hour shifts, and each has a daily quota of 135 posted comments of at least 200 characters.

What this means for you

You are being targeted by a sophisticated PR campaign meant to make you more resentful, bitter, and depressed. It's not just disinformation; it's also real-life human writers and advanced bot networks working hard to shift the conversation to the most negative and divisive topics and opinions.

It's why some topics seem to go from non-issues to constant controversy and discussion, with no clear reason, across social media platforms. And a lot of those trolls are actual, "professional" writers whose job is to sound real.

So what can you do? To quote WarGames: The only winning move is not to play. The reality is that you cannot distinguish disinformation accounts from real social media users. Unless you know whom you're talking to, there is a genuine chance that the post, tweet, or comment you are reading is an attempt to manipulate you -- politically or emotionally.

Here are some thoughts:

Don't accept facts from social media accounts you don't know. Russian, Chinese, and other manipulation efforts are not uniform. Some will make deranged claims, but others will tell half-truths. Or they'll spin facts about a complicated subject, be it the war in Ukraine or loneliness in young men, to give you a warped view of reality and spread division in the West.

Resist groupthink. A key element of manipulation networks is volume. People are naturally inclined to believe statements that have broad support. When a post gets 5,000 upvotes, it's easy to think the crowd is right. But "the crowd" could be fake accounts, and even if they're not, the brilliance of government manipulation campaigns is that they say things people are already predisposed to think. They'll tell conservative audiences something misleading about a Democrat, or make up a lie about Republicans that catches fire on a liberal server or subreddit.

Don't let social media warp your view of society. This is harder than it seems, but you need to accept that the facts -- and the opinions -- you see across social media are not reliable. If you want the news, do what everyone online says not to: look at serious, mainstream media. It is not always right. Sometimes, it screws up. But social media narratives are heavily manipulated by networks whose job is to ensure you are deceived, angry, and divided.

Edited for typos and clarity. (Tumblr-edited for formatting and to note a sourced article is now older than mentioned in the original post. -LV)

P.S. Apparently, this post was removed several hours ago due to a flood of reports. Thank you to the r/GenZ moderators for re-approving it.

Second edit:

This post is not meant to suggest that r/GenZ is uniquely or especially vulnerable, or to suggest that a lot of challenges people discuss here are not real. It's entirely the opposite: Growing loneliness, political polarization, and increasing social division along gender lines are real. The problem is that disinformation and influence networks expertly, and effectively, hijack those conversations and use those real, serious issues to poison the conversation. This post is not about left or right: Everyone is targeted.

(Further Tumblr notes: since this was posted, there have been several more articles detailing recent discoveries of active disinformation/influence and hacking campaigns by Russia and their allies against several countries and their respective elections, and this post barely touches on the numerous Tumblr blogs discovered to be troll farms/bad faith actors from pre-2016 through today. This is an ongoing and very real problem, and it's nowhere near over.

A quote from the 2018 NPR article linked above that you might find familiar today: "[A] particular hype and hatred for Trump is misleading the people and forcing Blacks to vote Killary. We cannot resort to the lesser of two devils. Then we'd surely be better off without voting AT ALL," a post from the account said.")

#propaganda#psyops#disinformation#US politics#election 2024#us elections#YES we have legitimate criticisms of our politicians and systems#but that makes us EVEN MORE susceptible to radicalization. not immune#no not everyone sharing specific opinions are psyops. but some of them are#and we're more likely to eat it up on all sides if it aligns with our beliefs#the division is the point#sound familiar?#voting#rambles#long post

158 notes


Text

thinking about the czech anthem in comparison to the majority of other national anthems and we truly are the poor little meow meow of countries

#but like#i like it#i like it a lot after thinking about it#like the majority of anthems i know of are about fighting#and ours is just like ''my home is so pretty i love it here so much ;~; ''#and in connection to our history it feels kinda like...passive resistance sort of way?#it's not about the fight. it's about survival#no matter who the current king or tyrant or shitty politician is we'll just keep on living here#describes us pretty well tbh. both in the positive and negative aspect#like there is this sort of ''keep your head down and wait for the bad times to blow over without giving much effort to make it better''#but whatever#we've got pretty trees amiright lol

2K notes


Text

Biden dropping out has been, no joke, the best thing for the Democratic Party in years.

I haven't felt this sort of excitement in electoral politics since Bernie's 2015/2016 campaign. I genuinely do not believe that the Dems have had anything this good happen for them since Obergefell v. Hodges in 2015.

After weeks, months! of Biden fumbling so fucking much (student protests for a ceasefire in Gaza, god-awful debate, etc), to drop out and suddenly . . .

the main Democratic candidate is now a charismatic woman of color who called for a ceasefire in Gaza

a category 5 storm of memes about the biggest album of the year in pop music and pop culture and Kamala Harris

the Republican VP pick generally being an off-putting grifter weirdo

a rumor about aforementioned VP pick having sex with a couch starts to pick up traction

a genuinely exciting search for the Democratic VP slot

Minnesota governor Tim Walz (correctly) calls Trump and Trumpian Republicans “weird”

Harris picks Tim Walz, a middle class, ex-military, former public school teacher who coached his school's football team and sponsored the gay-straight alliance, now a pro-union Democrat attack dog (which I have never seen on a Democratic ticket as long as I have been alive) to be her running mate

he makes a JD Vance couch joke in his first speech as Harris’ VP pick!

How absolutely perfect is this? I have never seen this energy and excitement in electoral politics (save for MAGA-types [but they're a cult]) in my life. This is potentially a godsend for the Democratic party.

Moreover, I genuinely think this could make conditions for pushing for more progressive and left-leaning policies at all levels of government in the U.S. possible.

#once again THERE IS NO SUCH THING AS A GOOD US POLITICIAN#BUT I DO NOT CARE#THIS IS SUCH A GOOD OUTCOME FOR US POLITICS#I don't want to jinx it but I wonder if this is the dawn of a new golden age of progressive politics in the US#obviously led by activists & students & organizers#but the conditions FOR organizing & agitating & unionizing & protesting will be SO much better#and leftists can get more DONE#and if the overton window is shifting left that means that more progressive politicians can get elected and actually PASS legislation!#anyways I'm very excited#also we DO get sweet midwestern dad Tim Walz on a presidential ticket and I do really like that#US politics#u.s. politics#2024#2024 presidential election#fuck trump#fuck jd vance#tim walz#politics

105 notes


Text

kamala almost calling trump a fucker on live tv was crazy actually

#tumblr#us politics#united states#kamala harris#donald trump#us debate#presidential debate#2024 presidential election#I don’t like glazing politicians but I do have to give her that one. she should’ve said it#if I had to listen to a geezer talk about transgender alien immigrant abortion I’d put a bullet in his head and then in mine

128 notes


Text

Also while I'm here slandering Jiang Cheng. FUCK off with the Nie Mingjue would ever treat Wei Wuxian well when he was all for letting the Wens die just for their surname and has no morals despite screaming about them to Jin Guangyao. And WHY he's a brainless meat walker zombie that is too stupid to tell people apart.

And also much as I love Lan Xichen and don't drink the hate him kool-aid he's also an ignorant fool who doesn't bother with anything aside from facades and being so passive it becomes cruelty despite all his well meaning intentions that are easily manipulated due to him choosing to be seen as unaffected by things unlike his brother who was led astray by emotions~ unlike Lan Xichen (who is the biggest instigator of burying his head in the sand if I can't see it it doesn't exist)

#mdzs#mo dao zu shi#you want me to shit talk characters flaws let's start with the ones this fandom refuses to hold accountable#for how they actively condemned the good moral man of the story#and the point of the novel#no he hasn't and wasn't as bad as your blorbo#and why you can't use he's as flawed as these ones#his flaws are like a kindergartner next to a nasty politician

110 notes


Note

What's stopping the possibility of a ceasefire is pretty simple. Hamas is holding 239 Israeli civilians hostage including children and the elderly. What's happening in Palestine is a travesty and horrendous. But Israel can't initiate a ceasefire from the position they're in, so we need to be agitating for Hamas to release the hostages and call for a ceasefire instead.

NO GENOCIDE IS JUSTIFIABLE

HOW DOES THE KILLING OF INNOCENT PEOPLE ON THIS EXTREME LEVEL FORCE HAMAS TO RETURN HOSTAGES??

ISRAEL'S BOMBARDMENT AND INDISCRIMINATE SHOOTING IN GAZA THREATEN EVERYONE THERE INCLUDING DOCTORS JOURNALISTS CHILDREN ENTIRE FAMILIES AND THE HOSTAGES

EVERYONE IS TARGETED

YOU HAVE HOSPITALS BOMBED HOW IS ANY OF THIS JUSTIFIED


@sarroora @fairuzfan @palipunk @wearenotjustnumbers2

You know more about this than I do.

#do you really think this will work on me; like hell I'm gonna stay silent for you#I hoard bookmarks like a dragon so guess what I have been saving from the posts I had reblogged to this blog and my sideblog#firefox bookmarks manager are a blessing oh my gods#how does one block anons#sorry for going full Black here on this post but yeah I'm a little livid#the entirety of Western media heavily propagandized for Israel and the US#how the US media covered this look at how our politicians keep funding Israel with money that could have gone to#our schools healthcare housing etc; my tax payer money is being used to kill innocent people and silence protesters#tw death#tw racial profiling#palestine#update: changed a few tags because I mistakenly compared Al Jazeera's coverage to Western Coverage#Al Jazeera has the best coverage of what is happening in Gaza and unfortunately also lost journalists#They deserve respect for what they are doing#thank you for the corrections wearenotjustnumbers2 (see their response in the notes pls)#genocide

272 notes


Text

Everyone here thinks you have to 100% agree with your chosen politician/leader. I hate to say this but if you agree with everything, as in absolutely everything, they say, you are in a cult/likely brainwashed.

#Harris 2024#you do not have to love your politicians#you don’t even have to like them#as people#just what they stand for#and even then#not everything#tumblr#hellsite#hellsite (derogatory)#Biden#us politics#USA#Biden 2024#us elections

144 notes


Text

note to franc peartree from orin

i don't know if you can actually find this in game but it doesn't seem to be loaded into any of the map files and it's not in franc peartree's house or basement, so it's likely you can't? anyway. i feel like it somehow paints a hornier picture of gortash and peartree's relationship than his original unedited letters

here is its roottemplates entry, it's named "BOOK_LOW_SerialKiller_PeartreeOrinThreat", and its name in game is "choose wisely"

the note you find on peartree's body is named "BOOK_LOW_SerialKiller_OrinThreat", in game "bloodied note"

#tell me more about gortash's fingers orin#paired with this it's like... his letters reflect his collected politician persona and then orin's writing pulls back the curtain#anyway someone let me know if they've actually found this because i'm convinced it's not in the game#i used the cheat engine to spawn it in my inventory btw#baldur's gate#enver gortash#orin the red#franc peartree#★

170 notes

Text

JD Vance seems to be advocating for the right to spread disinformation??? That is a WILD take

#us politics#vice presidential debate#election 2024#Vance is a better speaker than trump#and more of a politician#but that just makes walz seem more just like a regular likable guy

50 notes

Text

Bruh who keeps touching his face that is insane ...

#>gifs#us presidents#us history#history#b&w#vintage#1960s#60s#potus#jfk#the kennedys#jack kennedy#john f kennedy#idk what was up with crowds and being so physical#like idk u just dont see that with politicians#its so invasive i feel#gif#BRUH WHO KEEPS SHAKING HIS SHOULDER LEAVE THE DAMN MAN 😭😭😭😭#ITS NOT THE SECOND COMING LMFAOO

47 notes

Text

Long after Zuko has passed away and students start to learn about Zuko in university/school, it quickly becomes a fun pastime to… document all of Fire Lord Zuko’s ✨crimes✨

Everyone knows that not everything would have been documented/Zuko definitely had his secrets, so a Fun Activity for history and/or political students to do is play a game of Would Fire Lord Zuko Commit This Crime? Even the law students are involved. Some even make it into a drinking game. One of the philosophy students swears Zuko came to them in a dream to tell them they’re underestimating his ability to break into places (“could you break into the pentagon?” “in my sleep 😌”). Now everyone’s hosting seances to ask the REAL hard hitting questions like “did you rob rich people?” (the answer is yes. And also people that pissed him off)

Some like to act like they’re above the shenanigans, but they’re all jealous of how one of the political PhD students managed to score a topic like how the life and crimes of Fire Lord Zuko have influenced politicians of the current age.

#zuko - appearing to a politician in a meeting who’s using zuko as a reason he should stay in office: i will beat ur ass from the GRAVE#pure shit post hope u like it.#zuko#avatar the last airbender#atla#avatar#jack talks

127 notes

Text

the supreme court is so comically evil like you really have to vote blue across the board they made it legal to criminalize homelessness, overturned chevron which means the extremely conservative courts get to override health officials and environmental regulations

like infant mortality has increased by 8% in some states post roe, they will avoid the trump immunity case as long as possible, they essentially shielded all the jan 6 rioters

if biden loses we could be stuck with 6-3 or 7-2 extremely conservative judges for decades!!! that could mean 40 years of social rights regulations and health codes thrown out the door!!! look how much we’ve lost in 8 years?

and what about pack the courts? you can’t pack the courts with this split congress you can’t pass roe laws with this split congress you really have to vote blue all the way

#us politics#presidential debate#supreme court#I HATE THE SUPREME COURT I WANT THEM GONE SO BADLY#it’s so easy to lose everything at once!!!#yall we can’t do this again!!!!!!!!!!!!#ALITO AND THOMAS WILL QUIT#AND TRUMP WILL APPOINT FRESH ULTRA RIGHT CONSERVATIVE 30 SOMETHING YEAR OLDS#WHO WILL BE THERE FOR EONS#trump is so much worse than biden in like every way dawg#i feel sick guys LOOK AT OUR LIBERALS#thought the democratic party did this to themselves#fucking idiots! should’ve let biden step down#like we can go all the way back to RBG and Hillary Clinton like SHIT#this year i did a huge project on feminism in the us and to think all of those policies could be lost within my lifetime#we just got those#biden sucks he sucks a lot but trump is the devil#this country is not organized enough for 3rd party especially not with trump’s cult of personality#republicans will vote for him no matter what!!!#we should’ve done more about those damn courts uggg my head#this is why i can never be a politician id get a terrible headache and just start knocking shit over#one of my friends is voting green cause the democrats disappointed her and i agree they suck they suck so badly but#but the last thing i’ll do is let this country move any further to the right#i’ll take shit piece of trash liberal than i think people shouldn’t have rights conservative

81 notes
