#materials for models

Text

#scale models#piping systems#materials for models#plastics in modeling#metals in modeling#composites in modeling#resins and epoxies#acrylic in models#PVC in models#ABS in models#polyethylene in models#benefits of scale models#visualization tools#engineering design#design validation#industrial plants

1 note

Text

i don't normally participate in these redraw challenges but it's megumi so i'll make an exception

#my art#jujutsu kaisen#jjk#megumi fushiguro#fushiguro megumi#fanart#jjk fanart#jujutsu kaisen fanart#jjk megumi#megumi#looks at clock UHHHHHHHH oops#i got lost in the sauce that is rendering his gd chin and under his lips.... ive been in stylized anime mouth land 2 long i fear#i had forgotten how much of a pain those shadows are :'>>> eSP at a lookdown angle#fought a bit but little did he know i spent years doing coloured pencil portraits. this is My domain#god but the rest of the skin render was so FUN i love . warm grey in2 brown in2 red/orange fr the deep underneck shadow#lip tint heavy blush freckles glossier model fushiguro megumi...........im a believer i fear#had a bit of a hard time finding a middle ground between how i normally draw his hair and a more Realistic take on it#the model in the og has hair that's pretty close but i think the strands r a bit short n too heavily curved fr my tastes#its my brand im afraid i simply must give itfs both longer hair#nothing else feels Right#but god i underestimated how Good this photoshoot is as megu material . i get the hype now i get it#i did the sketch n i looked at it and i had an oh /oh/ moment#smh megumi put those lustrous emerald orbs away before u hurt some1#his gaze is too powerful . slaps a red bg on him makes him my new icon :)#anyway its 6am it is morning time do i sleep fr like 3 hrs or do i say megumi voice Whatever we shall see

2K notes

Text

how much shall we make jaskier pose this season? joey batey: yes

5K notes

Text

Diancie | Haruko Ichikawa

#blender#3d model#3d modeling#3d art#art#artists on tumblr#pokemon#pokemon tcg#diancie#testing out some different materials#queue

438 notes

Text

When hops are harvested every autumn in Germany's Hallertau region - the world’s largest hops-growing area about an hour north of Oktoberfest - for every one kilogram of material inside the cones that can be used to brew beer, there are 3.5 kilograms of wasted biomass from the rest of the plant. That's a ratio that's roughly 20 per cent usable product to 80 per cent waste.

Some of the hops waste can be used for fertilisers, and a portion can be sold to biogas plants to produce energy. But the majority is unusable for farmers, who may be forced to rent additional farmland to dump piles of the waste away from their crops. The piles can ferment and emit greenhouse gases - and sometimes catch fire.

“We saw a huge potential in sourcing locally and also using a waste stream that was neglected by basically most people,” HopfON entrepreneur Mauricio Fleischer Acuña told The Associated Press.

#solarpunk#solarpunk business#solarpunk business models#solar punk#startup#reculture#construction material#materials innovation#germany

321 notes

Note

Nobody's going to talk about how Shockwave is like, the most charismatic man in the Mecha au? I'm sure people place bets on why he always wears a mask but then they see his face and are like: "Dang...Am I gay?"

And the answer is always yes. Yes you are now.

#if he wasn’t experimenting on people he would be considered one of the most attractive people in Mecha program#easily#he has this kind of face that charismatic and gorgeous#enough sharp angles to be photo model material but soft eyes and smile (although he doesn’t smile often)#tf mecha universe

272 notes

Text

3D MV Teaser for "Fusion" ft. DI: Verse; Song composed by DECO*27

#ah screw it i'm updating my tags on this#thank you user bitchyhideoutnerd i rlly needed the validation /gen#CHIAKI AND TSUKASA MATCHING EACH OTHER'S ENERGY GIVES ME LIFE#rui and natsume together ough... i'm weak#this song probably wouldn't be complete without a rap segment from jun and akito or smth right#i think the most unusual pairing here is toya and izumi lol like ok they're both blue and ig toya is model material?#i've been listening to this teaser on loop#this makes me so happy despite the current enstars situation#i'm torn#jun sazanami#chiaki morisawa#izumi sena#natsume sakasaki#akito shinonome#tsukasa tenma#toya aoyagi#rui kamishiro#ensemble stars#enstars#あんさんぶるスターズ!#あんスタ#project sekai colorful stage#project sekai#pjsk#prsk#mo's simping hours#mo rambles into the void#chiatag

153 notes

Text

A place to rest.

#i nearly died drawing all the materials around him#i had to make a reference sheet just for them#also i think tfh might be the first appearance of the star fragments?#since in ss they were just gratitude crystals#though granted there are some statues in the mm observatory that look awfully similar#between that and the outfit system‚ it's surprising to see how many concepts were carried over into botw#these cicadas are modeled after the sand cicadas in ss#i love the cicada tree in Hytopia#something about it just hits right#my art#hm...yeah i'll tag it as#LU Doppelgänger AU#lu legend#i'm glad i made this in advance since finals are currently killing me#subtle signs that you need rest: you start imagining your fav fictional characters getting some sleep#hoping to get the next fic in the series up soon but it might have to wait until i'm done with exams

313 notes

Text

@scimagic Uhhh made this because I just think their dynamic is neat. Also completely agree with the Puzzle headcanon, super fun, silly, and very on point. As we speak he is clinging on for dear life :))

I really enjoy seeing the illustrated storyline you have unfolding between the two and figured it would be nice to see this motorcycle sequence in motion. So tadaa here it is! In animated form! Now you're obligated to make a full-length, in-depth written novel about their relationship /j

Sincerely though thanks for the creative inspiration and keep on being a swagger artist 👍✨

#Whoops seems my hand slipped—silly me these aren’t my characters! Here’s your lovelies back sorry for abducting them momentarily :))#tagging people is scary I’m just going to hide under a rock after this gets posted jksjsksp#my brain goes ‘teehee my genius hidden evil scheme no one saw coming—yess I shall gift lovely artists fanart when they least expect it’ >:3#and then once it’s actally time to post my brain goes crisis mode and implodes#like why am I drawing attention to myself huh? why can’t I scutter off as a masked anonymous figure into the night#oh well at least we made a dope ass motorcycle animation hell yea. Hopefully you like it <3#honestly in retrospect kinda surprises me that Puzzles doesn’t have a helmet…pretty sure his screen is durable but not THAT durable#one oopsie woopsie and that thing will get cracked again <<#but then again where are you ever going to find a rectangle screen shaped helmet to fit his head jksjsksp#there’s simply no winning#oh uh also incase anyone wishes to know the logistics of making this….didn’t take too long just three days! Pretty speedy :3#ok now this is the part where I twiddle my hands and await results lol#…..also just occurred to me the motorcycle model should’ve been a Harley or Suzuki I’m just dumb and forgor#even tho it was specified in the tags of the initial post I referenced heavily#like I was staring at the art for reference + online material but that useful tidbit of tag information flew over my head :P#sorry all you get is the generic motorcycle model….mission failed better luck next time *dies*#hplonesome art#not my characters#gift for someone else#do I even need to specify that in tags NO CLUE I’M PARANOID/j

179 notes

Text

The joy of Florida 🐚

#gay bald#gay selfie#briefs bulge#dick bulge#gay fitness#gay#male muscle#fit hunk#abs & pecs#gay abs#jock bulge#brief bulge#man bulge#bald muscle#gay self pics#gay model#gay undies#guys in briefs#gay briefs#classic briefs#gay guy#male physique#blond jock#hot jock#mensfitness#bulking#semi nude#husband material#homoerotic#homoerotism

216 notes

Text

Can an older woman still get likes? 💋

#mature woman#mature beauty#cougar#beautiful women#mature wives#beautiful model#wife material#olderwomen

375 notes

Text

The MADRA trend, but it's Mynah

I just finished this and I'm so proud of it! I feel like I should say more about this render but I'm really tired rn 😅

#Also ik you didn't ask#but the inside of her face is flesh#I think it lowkey looks more disturbing in material mode because you can see more clearly the texture and her eyes look dark/kinda dead#signalis#signalis fanart#mnhr#mynah signalis#my art#blender#3d model#3d render#body horror#(?)

212 notes

Text

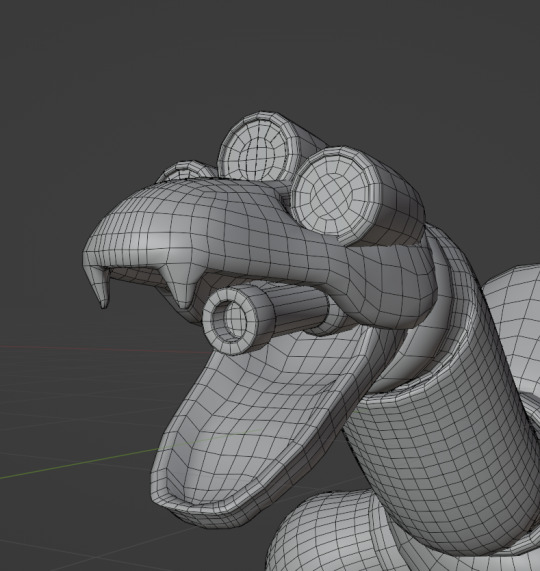

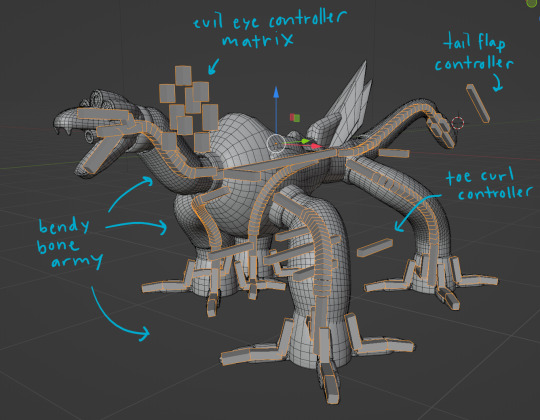

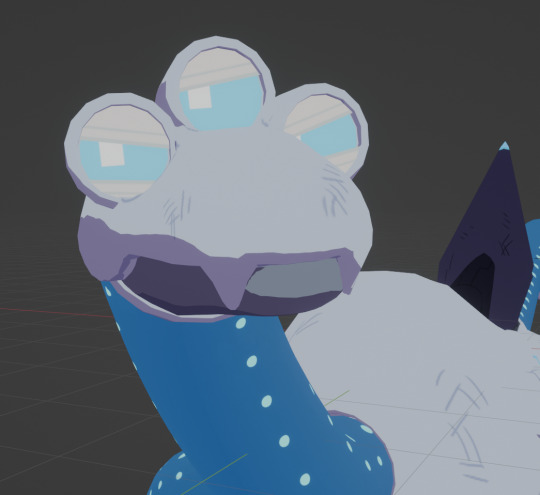

beast compilation. behold my funny dog

#quite honestly the most complex rig ive ever made so far and the first thing even resembling a facial rig#the eyes are 3 layers of mesh w materials hooked up to drivers. thank you guy who made a sonic model for tutorial#steelheart redux#mercury#my art#3d#blender#“for the love of god use custom bone shapes for your controllers” no <3 im lazy#see *i* know what everything does usually. so its fine

126 notes

Link

#PPCU jacks off#modelling#aloy#leonardo da pistoia#e-girl#andrei belloli#swimming pool#ed my beloved#y'shtola rhul#cuteness#Mécistée#wife material#sooo sweet#aes

82 notes

Text

How do we feel about black and white pics?

#jock bulge#dick bulge#man bulge#gay#gay undies#gay underwear#bald muscle#gay bald#male muscle#gay man#gay fitness#male physique#gay guy#fit hunk#classic physique#gay selfie#gay self pics#semi nude#husband material#gayhot#gay smooth#male smut#gay men#gay model#gay jock#gayboy#gay abs#hunky man#male hunk#hunky guy

176 notes

Text

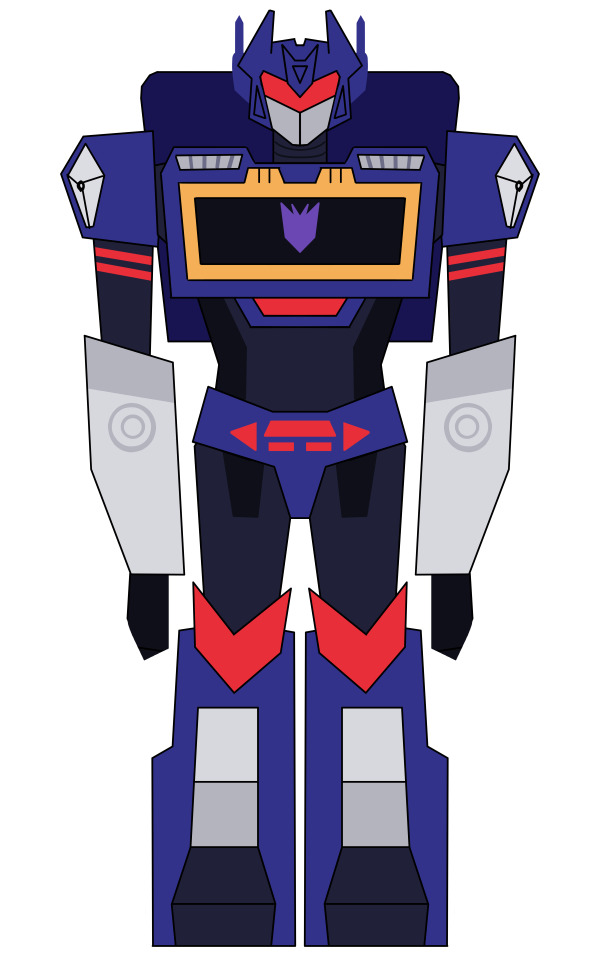

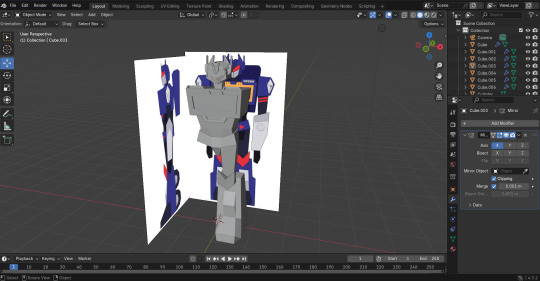

GUESS WHO FIGURED OUT 3D ANIMATION!!!!!!!

i started this project on the 15th with the 2d drawing at like 3am. the next day i modeled + textured it and today i spent all day rendering/animating!

this is the 2nd 3d model ive ever made and i couldnt have done it without @crashsune 's course on yt!! i have tried every couple months since 2020 to learn 3d modeling and this is the BEST tutorial i have ever seen.

lots of tutorials show you how to make like. a hyperrealistic donut. why would i want to make that. that is useless. i want silly blocky characters and i want them now!!

i started this series on jan 9th and i fully completed a love-chan using the free downloadables in ONE DAY!! then i watched it again a few days later and made THIS!! im still losing my marbles like i had absolutely 0 experience or prior knowledge in any way,, (previous attempts never left me with any proper models, only weird squishy nightmare stress creations i gave up on instantly).

hes still a bit clunky but idc i love him. it is literally day 8 of me using blender of all time. ofc its gonna get a lil silly

its creature time baby (shockwave is next)

#my art#art#3d art#blender#transformers#soundwave#tf soundwave#tf au#3d animation#3d model#3d modeling#character design#low poly#lowpoly#blender 3d#3d artwork#low poly art#tf#tf fanart#transformers fanart#im going to make so many creatures you have no idea#i love making little guys but im in the middle of a months long chronic pain flareup rn#so i cant use clay/paint/sewing#cant walk/pick up heavy stuff/hand eye coordination went to shit#3d modeling is perfect#im sitting + all the materials are in one place + hands are mostly steady pressing keys n stuff#might update soundwaves textures a tad bit#more graidents and colors#but rn hes functional#Youtube

58 notes
