#fr prediction

Text

random thoughts on a wind and light ancient. the wind one would be made of smoke or fog with some stoney accents

imagine blossom on that bad boy... but no, probably won't happen since they're pretty similar to obbies, but it's fun to dream!

also, wtf are they gonna do for the light ancient? aren't imperials the oldest dragons?

9 notes

Text

A man and believe and dream <3

#flight rising#fr#fr art#flight rising art#I forgot the other tags for predictions or fan species uhhh#brain is very slow

667 notes

Text

idk about yall but life is good again

#my art#jujutsu kaisen#jjk#jjk fanart#jujutsu kaisen fanart#jjk art#jjk spoilers#jjk manga spoilers#jjk leaks#megumi fushiguro#fushiguro megumi#HES SMILINIG MINUNGGJSGJVSFVSFJH#MY DARLING BOY MY SON WHOM I BIRTHED I LOVE YOU#fushiguro megumi the way i would kill/cry/die fr u ur smile cures depression waters crops etc etc#your zuko costumes pretty good but the scars on the wrong side...................#cant believe i lost the scar side coin flip smh leave it to me who does not know her lefts from her rights 2 predict the Wrong Side#sue me fr thinking yuuji lost an eye fr good n wanting them 2 have complementary injuries smh >:/#its ok im over it im over it im just so happy we got scarred!megu im so happy we got smiling!megu im so happy we got ALIVE MEGU#oh my god ive been up all night hand hurt hand ouch but its fr him its worth it i can keep going i can go all day if i need to#god its a good day 2 b a megumi stan

1K notes

Text

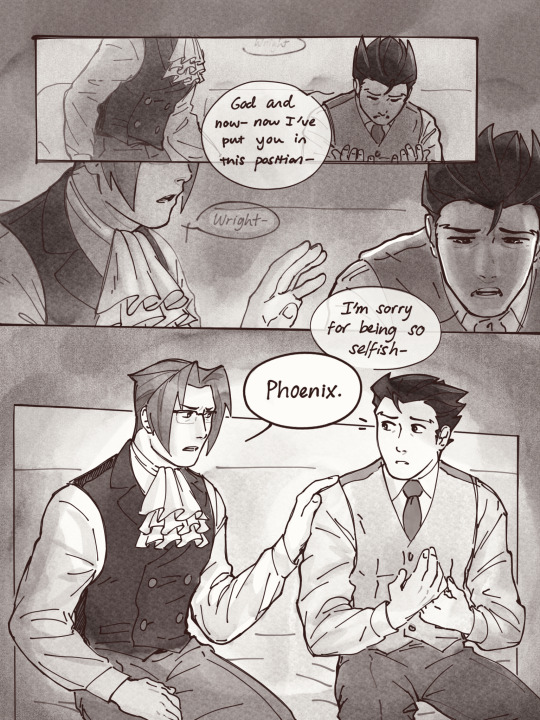

“Take my hand” pages 5-11

1 - day 2 - truth - 3

#nmweek23#narumitsu#wrightworth#phoenix wright#miles edgeworth#i spent all yesterday shading and lettering these your boy is so tired BUT IT WAS WORTH IT#in which i cram way too much into way too little and yet way too many pages for a single day#my sincerest apologies to them on their day but i will make it up to them i PROMISE#‘prove it’ you’ll NEVER GUESS what happens next :^))))) (<-guy who is extremely predictable)#phoenix is so strong because if miles looked at me like that i’d be going crazy and im like a known enemy of edgeworth#see you guys in like 5-7 business days on part 3 o7#fan art#aa#fan comic#rendevok#OH OH ALSO there’s like a whole fucking essay i could write about these pages esp wrt light and also The Hands but youll have to ask for it#just know that if you see something… there was probably a reason for it!#ok thats it fr this time

4K notes

Text

Ummm what are Finn Wolfhard and Noah Schnapp doing together at a panel??? Shouldn’t they be strictly locked up in the Duffers’ basement right about now??? 🧐🧐🧐

#a joke#but fr#they’re gonna fucking#spoiler byler endgame#I do NOT trust them#to be in the same room#and not spoil everything#lmao#lock them up promptly#byler#mike wheeler#will byers#finn wolfhard#noah schnapp#mike wheeler is in love with will byers#mike wheeler is not straight#byler is endgame#mike wheeler is a boykisser#mike wheeler is gay#st5#byler brainrot#stranger things#st4#st5 speculation#st5 predictions#st5 spoilers

216 notes

Text

Yeah ik it's shitty (hate the format but didn't know how else to do it), but I'm contributing to this 🙏 I've been hyperfixating on hannibal for a few months now, and after watching season 2 of squid game and shipping these two, I only have thoughts about the parallels between those two relationships in my head (and I think I just love old men toxic yaoi doomed by the narrative)

Wasn't the 2024 hannigram comeback I expected, but I'm glad nonetheless

#I should be studying my finals tho#like it's literally next week 💀#But fr tho#why are they just the same#I mean main difference imo is that hannigram is mostly canon when inho and gihun will never be a canon ship but aside from that#sigh I love dilf I fear#also season 2 of squid game was banger I just wish some plots weren't that easy to predict but still#squid game#inho#In-Ho#Hwang In-Ho#the frontman#young-il#Seong Gi-Hun#Gi-hun#gihun#inho x gihun#squid game 2#hannibal#hannibal lecter#will graham#hannigram

130 notes

Text

White lily redesigns ^^

#i like how she looks normally but i just felt like doing this#ALSO i did a golden cheese redesign a while back AND YALL I PREDICTED HER GLOW UP FR#i was like this girl needs more of everything and THEY DELIVERED#i love her new design ♥#but this is about white lily not gc#oop this might show up in gc tag sorry guys#anyway tag time#crk#cookie run#crk white lily cookie#white lily cookie

121 notes

Text

#can't believe matthew lewis predicted flight rising omg#flight rising#frfanart#fr gaoler#sorrel the longneck#basilisk the gaoler#mask art

77 notes

Text

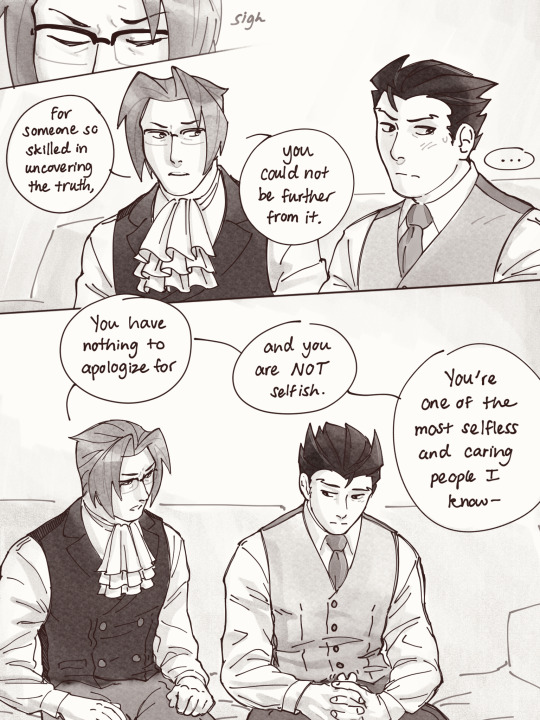

Hypothetical AI election disinformation risks vs real AI harms

I'm on tour with my new novel The Bezzle! Catch me TONIGHT (Feb 27) in Portland at Powell's. Then, onto Phoenix (Changing Hands, Feb 29), Tucson (Mar 9-12), and more!

You can barely turn around these days without encountering a think-piece warning of the impending risk of AI disinformation in the coming elections. But a recent episode of the This Machine Kills podcast reminds us that these are hypothetical risks, and there is no shortage of real AI harms:

https://soundcloud.com/thismachinekillspod/311-selling-pickaxes-for-the-ai-gold-rush

The algorithmic decision-making systems that increasingly run the back-ends to our lives are really, truly very bad at doing their jobs, and worse, these systems constitute a form of "empiricism-washing": if the computer says it's true, it must be true. There's no such thing as racist math, you SJW snowflake!

https://slate.com/news-and-politics/2019/02/aoc-algorithms-racist-bias.html

Nearly 1,000 British postmasters were wrongly convicted of fraud on the strength of evidence from Horizon, the faulty accounting system that Fujitsu provided to the Post Office. They had their lives ruined by this faulty software, many went to prison, and at least four of its victims killed themselves:

https://en.wikipedia.org/wiki/British_Post_Office_scandal

Tenants across America have seen their rents skyrocket thanks to RealPage's landlord price-fixing algorithm, which has been defended with the time-honored argument: "It's not a crime if we commit it with an app":

https://www.propublica.org/article/doj-backs-tenants-price-fixing-case-big-landlords-real-estate-tech

Housing, you'll recall, is pretty foundational in the human hierarchy of needs. Losing your home – or being forced to choose between paying rent or buying groceries or gas for your car or clothes for your kid – is a non-hypothetical, widespread, urgent problem that can be traced straight to AI.

Then there's predictive policing: cities across America and the world have bought systems that purport to tell the cops where to look for crime. Of course, these systems are trained on the historical data of the very police forces that are seeking to correct the racial bias in their practices by using an algorithm to create "fairness." You feed this algorithm a dataset of where the police detected crime in previous years, and it predicts where you'll find crime in the years to come.

But you only find crime where you look for it. If the cops only ever stop-and-frisk Black and brown kids, or pull over Black and brown drivers, then every knife, baggie or gun they find in someone's trunk or pockets will be found in a Black or brown person's trunk or pocket. A predictive policing algorithm will naively ingest this data and confidently assert that future crimes can be foiled by looking for more Black and brown people and searching them and pulling them over.
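To make the "you only find crime where you look for it" loop concrete, here is a deliberately simplified toy simulation in Python. Everything in it is an assumption for illustration: two hypothetical neighborhoods with identical true crime rates, a starting record that is only slightly skewed, a rule that sends each day's patrol wherever the most crime has been recorded, and each patrol recording exactly one incident. It is not modeled on any real vendor's product.

```python
# Toy model of a predictive-policing feedback loop (hypothetical, illustrative only).
# Assumption: both neighborhoods have the SAME true crime rate, so every patrol
# records the same number of incidents wherever it is sent.

def simulate_greedy_patrols(records, days, finds_per_patrol=1):
    """Each day, send the patrol to the neighborhood with the most recorded crime.
    Crime is only recorded where the patrol goes, so the records end up measuring
    where police looked, not where crime actually happened."""
    records = dict(records)  # copy so the caller's history is not mutated
    for _ in range(days):
        target = max(records, key=records.get)  # the "predicted" hotspot
        records[target] += finds_per_patrol     # incidents found only where we look
    return records


if __name__ == "__main__":
    # Equal underlying crime, slightly skewed starting data.
    history = {"Neighborhood A": 5, "Neighborhood B": 4}
    print(simulate_greedy_patrols(history, days=30))
    # → {'Neighborhood A': 35, 'Neighborhood B': 4}
```

Because neighborhood B is never patrolled, its record never grows, so the data keeps "confirming" the original skew even though crime is identical in both places. Real systems are presumably more elaborate, but this sketch isolates the sampling problem the paragraph describes.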

Obviously, this is bad for Black and brown people in low-income neighborhoods, whose baseline risk of an encounter with a cop turning violent or even lethal is already high. But it's also bad for affluent people in affluent neighborhoods – because they are underpoliced as a result of these algorithmic biases. For example, domestic abuse that occurs in fully detached single-family homes is systematically underrepresented in crime data, because the majority of domestic abuse calls originate with neighbors who can hear the abuse take place through a shared wall.

But the majority of algorithmic harms are inflicted on poor, racialized and/or working class people. Even if you escape a predictive policing algorithm, a facial recognition algorithm may wrongly accuse you of a crime, and even if you were far away from the site of the crime, the cops will still arrest you, because computers don't lie:

https://www.cbsnews.com/sacramento/news/texas-macys-sunglass-hut-facial-recognition-software-wrongful-arrest-sacramento-alibi/

Trying to get a low-waged service job? Be prepared for endless, nonsensical AI "personality tests" that make Scientology look like NASA:

https://futurism.com/mandatory-ai-hiring-tests

Service workers' schedules are at the mercy of shift-allocation algorithms that assign them hours that ensure that they fall just short of qualifying for health and other benefits. These algorithms push workers into "clopening" – where you close the store after midnight and then open it again the next morning before 5AM. And if you try to unionize, another algorithm – that spies on you and your fellow workers' social media activity – targets you for reprisals and your store for closure.

If you're driving an Amazon delivery van, an algorithm watches your eyeballs and tells your boss that you're a bad driver if it doesn't like what it sees. If you're working in an Amazon warehouse, an algorithm decides if you've taken too many pee-breaks and automatically dings you:

https://pluralistic.net/2022/04/17/revenge-of-the-chickenized-reverse-centaurs/

If this disgusts you and you're hoping to use your ballot to elect lawmakers who will take up your cause, an algorithm stands in your way again. "AI" tools for purging voter rolls are especially harmful to racialized people – for example, they assume that two "Juan Gomez"es with a shared birthday in two different states must be the same person and remove one or both from the voter rolls:

https://www.cbsnews.com/news/eligible-voters-swept-up-conservative-activists-purge-voter-rolls/
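Here is a similarly hypothetical Python sketch of the kind of naive matching described above: registrations in different states are treated as duplicates whenever first name, last name, and birthday agree. The records, voter IDs, and matching rule are invented for illustration and are not taken from any actual purge tool.

```python
# Hypothetical sketch of naive cross-state voter-roll matching (illustrative only).
from collections import defaultdict
from typing import NamedTuple


class Voter(NamedTuple):
    first: str
    last: str
    birthday: str  # ISO date string, e.g. "1980-05-14"
    state: str
    voter_id: str  # made-up identifiers for this example


def naive_duplicates(voters):
    """Group registrations by (first, last, birthday) only, ignoring every other
    identifier, and flag any group that spans more than one state."""
    groups = defaultdict(list)
    for v in voters:
        groups[(v.first.lower(), v.last.lower(), v.birthday)].append(v)
    return [g for g in groups.values() if len({v.state for v in g}) > 1]


if __name__ == "__main__":
    rolls = [
        # Two different people who happen to share a common name and a birthday.
        Voter("Juan", "Gomez", "1980-05-14", "TX", "TX-001"),
        Voter("Juan", "Gomez", "1980-05-14", "AZ", "AZ-917"),
        Voter("Mary", "Smith", "1975-01-02", "OH", "OH-123"),
    ]
    for group in naive_duplicates(rolls):
        # Both registrations are flagged as one "double voter".
        print([v.voter_id for v in group])
```

Nothing in the flagged pair shows that it is one person; a name and a birthday alone cannot tell apart two different people who share a common name.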

Hoping to get a solid education, the sort that will keep you out of AI-supervised, precarious, low-waged work? Sorry, kiddo: the ed-tech system is riddled with algorithms. There's the grifty "remote invigilation" industry that watches you take tests via webcam and accuses you of cheating if your facial expressions fail its high-tech phrenology standards:

https://pluralistic.net/2022/02/16/unauthorized-paper/#cheating-anticheat

All of these are non-hypothetical, real risks from AI. The AI industry has proven itself incredibly adept at deflecting interest from real harms to hypothetical ones, like the "risk" that the spicy autocomplete will become conscious and take over the world in order to convert us all to paperclips:

https://pluralistic.net/2023/11/27/10-types-of-people/#taking-up-a-lot-of-space

Whenever you hear AI bosses talking about how seriously they're taking a hypothetical risk, that's the moment when you should check in on whether they're doing anything about all these longstanding, real risks. And even as AI bosses promise to fight hypothetical election disinformation, they continue to downplay or ignore the non-hypothetical, here-and-now harms of AI.

There's something unseemly – and even perverse – about worrying so much about AI and election disinformation. It plays into the narrative that kicked off in earnest in 2016, that the reason the electorate votes for manifestly unqualified candidates who run on a platform of bald-faced lies is that they are gullible and easily led astray.

But there's another explanation: the reason people accept conspiratorial accounts of how our institutions are run is that the institutions that are supposed to be defending us are corrupt and captured by actual conspiracies:

https://memex.craphound.com/2019/09/21/republic-of-lies-the-rise-of-conspiratorial-thinking-and-the-actual-conspiracies-that-fuel-it/

The party line on conspiratorial accounts is that these institutions are good, actually. Think of the rebuttal offered to anti-vaxxers who claimed that pharma giants were run by murderous sociopath billionaires who were in league with their regulators to kill us for a buck: "no, I think you'll find pharma companies are great and superbly regulated":

https://pluralistic.net/2023/09/05/not-that-naomi/#if-the-naomi-be-klein-youre-doing-just-fine

Institutions are profoundly important to a high-tech society. No one is capable of assessing all the life-or-death choices we make every day, such as whether to trust the firmware in your car's anti-lock brakes, the alloys used in the structural members of your home, or the food-safety standards for the meal you're about to eat. We must rely on well-regulated experts to make these calls for us, and when the institutions fail us, we are thrown into a state of epistemological chaos. We must make decisions about whether to trust these technological systems, but we can't make informed choices because the one thing we're sure of is that our institutions aren't trustworthy.

Ironically, the long list of AI harms that we live with every day is the most important contributor to disinformation campaigns. It's these harms that provide the evidence for belief in conspiratorial accounts of the world, because each one is proof that the system can't be trusted. The election disinformation discourse focuses on the lies told – and not why those lies are credible.

That's because the subtext of election disinformation concerns is usually that the electorate is credulous, fools waiting to be suckered in. By refusing to contemplate the institutional failures that sit upstream of conspiracism, we can smugly locate the blame with the peddlers of lies and assume the mantle of paternalistic protectors of the easily gulled electorate.

But the people who are demonstrably being tricked by AI are the ones who buy the horrifically flawed AI-based algorithmic systems and put them into use despite their manifest failures.

As I've written many times, "we're nowhere near a place where bots can steal your job, but we're certainly at the point where your boss can be suckered into firing you and replacing you with a bot that fails at doing your job":

https://pluralistic.net/2024/01/15/passive-income-brainworms/#four-hour-work-week

The most visible victims of AI disinformation are the people who are putting AI in charge of the life-chances of millions of the rest of us. Tackle that AI disinformation and its harms, and we'll make conspiratorial claims about our institutions being corrupt far less credible.

If you'd like an essay-formatted version of this post to read or share, here's a link to it on pluralistic.net, my surveillance-free, ad-free, tracker-free blog:

https://pluralistic.net/2024/02/27/ai-conspiracies/#epistemological-collapse

Image: Cryteria (modified) https://commons.wikimedia.org/wiki/File:HAL9000.svg

CC BY 3.0 https://creativecommons.org/licenses/by/3.0/deed.en

#pluralistic#ai#disinformation#algorithmic bias#elections#election disinformation#conspiratorialism#paternalism#this machine kills#Horizon#the rents too damned high#weaponized shelter#predictive policing#fr#facial recognition#labor#union busting#union avoidance#standardized testing#hiring#employment#remote invigilation

145 notes

Text

lightning gryphon, with and without enhancements

#flight rising#fr resources#I CAN'T BELIEVE IT'S NOT PLAGUE#THAT WAS MY PREDICTION WHEN I POSTED THE LIGHT GRYPHON ON FR LMAOOOOOOO#WHAT'S PLAGUE'S GRYPHON GONNA BE I HAVE TO KNOW RIGHT NOW

57 notes

Text

NO WAY I PREDICTED THAT WATCHER WAS QUITTING YOUTUBE OH MY GODDDD!!!

#watcher#watcher entertainment#we are watcher#ryan bergara#shane madej#steven lim#LOOK AT MY BLOG I PREDICTED THAT SHIT!!!#haven’t watched the entire video so i’m sure my prediction wasn’t 100 percent correct#BUT I LITERALLY SAID#”what if they are leaving youtube”#I ATE UPPPP FR

75 notes

Text

😀 i love setting out to draw 1 character multiple times but ending up drawing multiple characters 1 time instead 😀 i love it so much 😀

#honestly the qi rong is still my fav.... first one i did.. its jsut so yummy to me ok#the he xuan too.. other two can go fuck off for all i care#i only care for the left side of this canvas#also i feel like i channeled my mbj design while trying to draw he xuan 😔 i cant help it--- like theyre both nonhumans with black n blue#color schemes in my head and.. yeah.. imean he xuan is water and mobei-jun is ice it makes sense in my mind#but also imagining that he xuan design absolutely tearing into food is cracking me up.. i just need to draw him enjoying a nice meal fr..#tian guan ci fu#tgcf#heaven official's blessing#the four calamities#qi rong#hua cheng#he xuan#bai wuxiang#my art#me @ everyone: you get long pointy ears 🥰🥰🥰. (gets to bwx) ... not you tho 🥰🥰🥰#i should draw every tgcf i want to so bad#you will never guess who my favs are i am so not predictable i am so unpredictable you would never see it coming you would never guess im s#i feel like i havent done a lot of tgcf posting on here tho.... . i mean my initial hyperfixation on it was last year i believe??#but i never really stopped thinking about adghasdhgahdjhafa

248 notes

Text

tried my best 2 power through but i am so sleepy so i will finish this megu draws tmr so uhhhhhhh hav hand wips in the meantime

#hina.txt#wip#art process#got real happy when i saw this photo actually had the model's hand in it front n centre n not Hidden#nothing 2 say fr myself. listen i may be a Predictable Bitch but sue me i can sure as fuck render a knuckle#may have made th hand Bigger than the ref....partially as a treat fr me but also bc u know megumi's got freaky long pianist's fingers#anyway tmr will have this up then . idk ! if no visions strike i will do assorted busts#realized its been a while since my last serious gojo or sukuna or any1 who isn't a first year draws#so i wil. endeavour 2 remedy this asap

54 notes

Text

(cue upbeat summer shopping montage)

#things that make sense to me andi and thea and will make sense to everyone else….. soon#wills hand looks so fucked up here whatever#hehehehehehe#as predicted my summer love fic is nowhere near done so#here#take this instead#bylerweek2023#will byers#mike wheeler#byler#idk i’ll post enough art to make an art tag . i’ll deal w that later#/astro posts#shoutout to bhavna’s rectangle brush it’s saving my life fr#acswy

788 notes

Text

I know people tend to think that Dustin, Lucas, Max, El and some of the other characters will have a difficult time wrapping their heads around Byler because of the time period, but I kind of think it will be like a lightbulb moment for them where they're like "Ohhhh so *that's* why." I mean, maybe it should be difficult for Jonathan and Joyce to wrap their heads around it too because of the time period, but it clearly isn't. They recognize the love that Mike and Will have for each other and most likely see it as being "different" from what other people experience, but no less beautiful or valid.

I can see the other party members having a similar reaction. They will be open to and accepting of the idea that Mike and Will belong together, not because they haven't been influenced by the negative stereotypes they've been taught to associate queerness with, but because they've all had a firsthand look at what Mike and Will's relationship is really all about. Like in s2, when Will was having visions in his now-memories, the party saw the way that Mike snapped him out of it, wrapped an arm around him, and took him home. Lucas saw Mike's devotion towards apologizing to Will and making things right with him in s3. Like, they have seen and probably subconsciously understand that Mike and Will love each other: they just don't have the tools to recognize what the relationship is yet. But as soon as they realize what it is, I don't think they'll struggle to accept it at all and will probably actually be the boys' biggest supporters and defenders.

That's the dynamic the party has had from the beginning. Dustin and Lucas aren't homophobic; they were fine being friends with and defending the "gay kid" even if it meant getting bullied or harassed. The party is a group of disadvantaged kids, whether because of queerness, race, disability or poverty, and they all love and accept each other not just in light of their disadvantages but in part BECAUSE of their disadvantages. I think a beautiful part of Byler will be that the party will accept Mike and Will with open arms, and Dustin and Lucas particularly won't be weird about it at all since they've seen this love growing and flourishing since the BEGINNING. I hope we get scenes of the four boys together where Mike and Will are unabashedly a couple but the group's friendship dynamic hasn't changed at all other than maybe growing stronger because of it. Lucas and Dustin will totally take up for Byler and defend them because they essentially always have.

#i love when the party#yk#like i just love them all sm#they're all such sweethearts#byler's number 1 defenders fr#other than joyce and jonathan ofc#byler#byler nation#byler tumblr#byler is endgame#byler analysis#byler brainrot#byler is canon#mike wheeler#will byers#mike wheeler is a boykisser#mike wheeler is in love with will byers#mike wheeler is not straight#mike wheeler is gay#byler endgame#stranger things#st4#st5#st5 predictions#st5 speculation#lucas sinclair#dustin henderson#the party

266 notes

Text

Yeah im late to the bandwagon but i've been itching to redraw them for some days now,, theyre so small

#this scene means so much to me#its such a shame that bones made them look like ass#fr how hard is it to draw a nose??#anyways im going through my tgchk arc again#my shipping tendencies rotate in a very predictable pattern😭#it usually goes miryumi>midjoke>mmjr>tgchk#so expect Things#i have a playlist ready and all#they are sooooooo playlistable#my skrukles#my mentally ill products of their society#my queers#my narrative foils#my mirror characters#my doomed to be together and happy about its#togachako#tgchk#himiko toga#ochako uraraka#mha#bnha#my hero academia#wlw#chiquilines draws

286 notes
