#tag docker image after build


Text

man, i LOVE the rush you get in the days before burning out

Took me a few days but I managed to upgrade a bunch of old code and packages, opened literally hundreds of documentation and troubleshooting tabs (and closed most of them! only about 1152 to go), BUT I managed to get the Heptapod runners to actually build and push docker images on commit, AND it deploys it to my kubernetes cluster.

And yeah, I know that someone who just uses some free tier cloud services would take 2.4 minutes to do the same, but I get the extra satisfaction of knowing I can deploy a k8s cluster onto literally any machine that's connected to the internet, with ssl certificates, gitlab, harbor, postgres and w/e. It would also be nice to have an ELK stack or Loki and obviously prometheus+grafana, and backups, but whatever, I'll add those when I actually have something useful to run.

Toying with the idea of making my own private/secure messaging platform, but it wouldn't be technically half as competent as Signal; however, I still want to make the registration-less, anon ask + private reply platform. Maybe. And the product feature request rating site. And a google keep that works properly in Firefox.

Anyway, realistically though I'll start with learning Vue 3 and making the idle counter button app for desktop/android, which I can later port to the web. (No mac/ios builds until someone donates a macbook and $100 for a developer license, which I don't want anyway.) This will start as a button to track routine activities (exercise, water drinking, doing dishes), or a button to click when forced to sit tight and feeling uncomfortable (e.g. you can define one button for boring work meetings, one for crowded bus rides, one for insomnia, etc). The app will keep statistics per hour/day/etc. Maybe I'll add sub-buttons with tags like "anxious" "bored" "tired" "in pain" etc. I'm going to use it as a simpler method of journaling and keeping track of health-related stuff.

After that I want to extend the app with mini-games that will all be optional, and progressively more engaging. At the lowest end it will be just moving the mouse left and right to increase the score, with no goal, no upgrades, no story, etc. This is something for me to do when watching a youtube tutorial but feeling too anxious/restless to just sit, and too tired to exercise.

On the other end it will be just whatever games you don't mind killing time with that are still simple and unobtrusive and only worth playing when you're too tired and brain dead to Do Cool Stuff. Maybe some infinite procedurally generated racing with no goals, some sort of platformer or minecraft-like world to just walk around in, without any goals or fighting or death. Or a post-collapse open world where you just pick up trash and demolish leftovers of capitalism. Stardew Valley without time pressure.

I might add flowcharts for ADHD / anxieties, sort of micro-CBT for things that you've already been in therapy for, but need regular reminders about. (If the app gets popular it could also offer flowcharts contributed by users for download.)

Anyway, ideas are easy, good execution is hard, free time is scarce. I hope I get the ball rolling though.


Text

Version 556

youtube

windows: zip, exe

macOS: app

linux: tar.zst

I had an ok week. I fixed some bugs and added a system to force-set filetypes.

You will be asked on update if you want to regenerate some animation thumbnails. The popup explains the decision, I recommend 'yes'.

full changelog

forced filetype

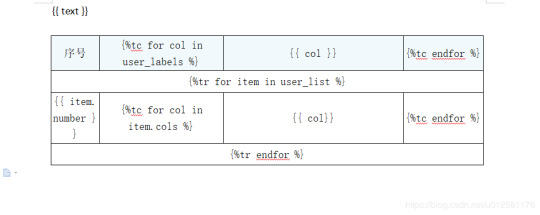

The difference between a zip and an Ugoira and a cbz is not perfectly clear cut. I am happy with the current filetype scanner--and there are a couple more improvements this week--but I'm sure there will always be some fuzziness in the difficult cases. This also applies to some other clever situations, like files that secretly store a zip concatenated after a jpeg. You might want that file to be considered something other than what hydrus thinks it technically is.
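To make the 'zip concatenated after a jpeg' case concrete, here is a minimal shell sketch of why a scanner that only looks at the leading bytes would call such a file a jpeg. This is not how the hydrus scanner works; the function names `hex_head`, `sniff_magic` and `has_embedded_zip` are made up for illustration, and the only facts assumed are the well-known JPEG (`ff d8 ff`) and zip local-file-header (`50 4b 03 04`) signatures.

```shell
#!/bin/sh
# Print the first N bytes of a file as space-separated lowercase hex.
# $1 = file, $2 = number of bytes
hex_head() {
    od -An -tx1 -v -N "$2" "$1" | tr '\n' ' ' | tr -s ' ' | sed 's/^ //; s/ $//'
}

# Guess a type from the leading magic bytes only (illustrative).
sniff_magic() {
    case "$(hex_head "$1" 4)" in
        "ff d8 ff"*)   echo jpeg ;;
        "50 4b 03 04") echo zip ;;
        *)             echo unknown ;;
    esac
}

# Succeed if a zip local-file-header signature appears anywhere in the file.
# Bytes stay space-separated so the match is always byte-aligned.
has_embedded_zip() {
    od -An -tx1 -v "$1" | tr '\n' ' ' | tr -s ' ' | grep -q '50 4b 03 04'
}
```

A file built with `cat photo.jpg archive.zip > combined.jpg` would be reported as `jpeg` by `sniff_magic`, while `has_embedded_zip` would still succeed on it, which is the kind of ambiguity a forced filetype is meant to settle by hand.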

So, on any file selection, you can now hit right-click->manage->force filetype. You can set any file to be seen as any other filetype. The changes take effect immediately, are reflected in presentation and system:filetype searches, and the files themselves will be renamed on disk (to aid 'open externally'). The original filetype is remembered, and everything is easily undoable through the same dialog.

Also added is 'system:has forced filetype', under the 'system:file properties' entry, if you'd like to find what you have set one way or the other.

This is experimental, and I don't recommend it for the most casual users, but if you are comfortable, have a play with it. I still need to write better error handling for complete nonsense cases (e.g. calling a webm a krita is probably going to raise an error somewhere), but let me know how you get on.

other highlights

I fixed some dodgy numbers in Mr. Bones (deleted file count) and the file history chart (inbox/archive count). If you have had some whack results here, let me know if things are any better! If they aren't, does changing the search to something more specific than 'all my files'/'system:everything' improve things?

Some new boot errors, mostly related to missing database components, are now handled with nicer dialog prompts, and even interactive console prompts serverside.

I _may_ have fixed/relieved the 'program is hung when restored from minimise to system tray' issue, but I am not confident. If you still have this, let me know how things are now. If you still get a hang, more info on what your client was doing during the minimise would help--I just cannot reproduce this problem reliably.

Thanks to a user who figured out all the build script stuff, the Docker package is now Alpine 3.19. The Docker package should have newer libraries and broader file support.

birthday and year summary

The first non-experimental beta of hydrus was released on December 14th, 2011. We are now going on twelve years.

Like many, I had an imperfect 2023. I've no complaints, but IRL problems from 2022 cut into my free time and energy, and I regret that it impacted my hydrus work time. I had hoped to move some larger projects forward this year, but I was mostly treading water with little features and optimisations. That said, looking at the changelog for the year reveals good progress nonetheless, including: multiple duplicate search and filter speed and accuracy improvements, and the 'one file in this search, the other in this search' system; significant Client API expansions, in good part thanks to a user, including the duplicates system, more page inspections, multiple local file domains, and http headers; new sidecar datatypes and string processing tools; improvements to 'related tags' search; much better transparency support, including 'system:has transparency'; more program stability, particularly with mpv; much much faster tag autocomplete results, and faster tag and file search cancelling; the inc/dec rating service; better file timestamp awareness and full editing capability; the SauceNAO-style image search under 'system:similar files'; blurhashes; more and better system predicate parsing, and natural system predicate parsing in the normal file search input; a background database table delete system that relieves huge jobs like 'delete the PTR'; more accurate Mr. Bones and File History, and both windows now taking any search; and multiple new file formats, like HEIF and gzip and Krita, and thumbnails and full rendering for several like PSD and PDF, again in good part thanks to a user, and then most recently the Ugoira and CBZ work.

I'm truly looking forward to the new year, and I plan to keep working and putting out releases every week. I deeply appreciate the feedback and help over the years. Thank you!

next week

I have only one more week in the year before my Christmas holiday, so I'll just do some simple cleanup and little fixes.


Text

This Week in Rust 516

Hello and welcome to another issue of This Week in Rust! Rust is a programming language empowering everyone to build reliable and efficient software. This is a weekly summary of its progress and community. Want something mentioned? Tag us at @ThisWeekInRust on Twitter or @ThisWeekinRust on mastodon.social, or send us a pull request. Want to get involved? We love contributions.

This Week in Rust is openly developed on GitHub and archives can be viewed at this-week-in-rust.org. If you find any errors in this week's issue, please submit a PR.

Updates from Rust Community

Official

Announcing Rust 1.73.0

Polonius update

Project/Tooling Updates

rust-analyzer changelog #202

Announcing: pid1 Crate for Easier Rust Docker Images - FP Complete

bit_seq in Rust: A Procedural Macro for Bit Sequence Generation

tcpproxy 0.4 released

Rune 0.13

Rust on Espressif chips - September 29 2023

esp-rs quarterly planning: Q4 2023

Implementing the #[diagnostic] namespace to improve rustc error messages in complex crates

Observations/Thoughts

Safety vs Performance. A case study of C, C++ and Rust sort implementations

Raw SQL in Rust with SQLx

Thread-per-core

Edge IoT with Rust on ESP: HTTP Client

The Ultimate Data Engineering Chadstack. Running Rust inside Apache Airflow

Why Rust doesn't need a standard div_rem: An LLVM tale - CodSpeed

Making Rust supply chain attacks harder with Cackle

[video] Rust 1.73.0: Everything Revealed in 16 Minutes

Rust Walkthroughs

Let's Build A Cargo Compatible Build Tool - Part 5

How we reduced the memory usage of our Rust extension by 4x

Calling Rust from Python

Acceptance Testing embedded-hal Drivers

5 ways to instantiate Rust structs in tests

Research

Looking for Bad Apples in Rust Dependency Trees Using GraphQL and Trustfall

Miscellaneous

Rust, Open Source, Consulting - Interview with Matthias Endler

Edge IoT with Rust on ESP: Connecting WiFi

Bare-metal Rust in Android

[audio] Learn Rust in a Month of Lunches with Dave MacLeod

[video] Rust 1.73.0: Everything Revealed in 16 Minutes

[video] Rust 1.73 Release Train

[video] Why is the JavaScript ecosystem switching to Rust?

Crate of the Week

This week's crate is yarer, a library and command-line tool to evaluate mathematical expressions.

Thanks to Gianluigi Davassi for the self-suggestion!

Please submit your suggestions and votes for next week!

Call for Participation

Always wanted to contribute to open-source projects but did not know where to start? Every week we highlight some tasks from the Rust community for you to pick and get started!

Some of these tasks may also have mentors available, visit the task page for more information.

Ockam - Make ockam node delete (no args) interactive by asking the user to choose from a list of nodes to delete (tuify)

Ockam - Improve ockam enroll --help text by adding doc comment for identity flag (clap command)

Ockam - Enroll "email: '+' character not allowed"

If you are a Rust project owner and are looking for contributors, please submit tasks here.

Updates from the Rust Project

384 pull requests were merged in the last week

formally demote tier 2 MIPS targets to tier 3

add tvOS to target_os for register_dtor

linker: remove -Zgcc-ld option

linker: remove unstable legacy CLI linker flavors

non_lifetime_binders: fix ICE in lint opaque-hidden-inferred-bound

add async_fn_in_trait lint

add a note to duplicate diagnostics

always preserve DebugInfo in DeadStoreElimination

bring back generic parameters for indices in rustc_abi and make it compile on stable

coverage: allow each coverage statement to have multiple code regions

detect missing => after match guard during parsing

diagnostics: be more careful when suggesting struct fields

don't suggest nonsense suggestions for unconstrained type vars in note_source_of_type_mismatch_constraint

dont call mir.post_mono_checks in codegen

emit feature gate warning for auto traits pre-expansion

ensure that ~const trait bounds on associated functions are in const traits or impls

extend impl's def_span to include its where clauses

fix detecting references to packed unsized fields

fix fast-path for try_eval_scalar_int

fix to register analysis passes with -Zllvm-plugins at link-time

for a single impl candidate, try to unify it with error trait ref

generalize small dominators optimization

improve the suggestion of generic_bound_failure

make FnDef 1-ZST in LLVM debuginfo

more accurately point to where default return type should go

move subtyper below reveal_all and change reveal_all

only trigger refining_impl_trait lint on reachable traits

point to full async fn for future

print normalized ty

properly export function defined in test which uses global_asm!()

remove Key impls for types that involve an AllocId

remove is global hack

remove the TypedArena::alloc_from_iter specialization

show more information when multiple impls apply

suggest pin!() instead of Pin::new() when appropriate

make subtyping explicit in MIR

do not run optimizations on trivial MIR

in smir find_crates returns Vec<Crate> instead of Option<Crate>

add Span to various smir types

miri-script: print which sysroot target we are building

miri: auto-detect no_std where possible

miri: continuation of #3054: enable spurious reads in TB

miri: do not use host floats in simd_{ceil,floor,round,trunc}

miri: ensure RET assignments do not get propagated on unwinding

miri: implement llvm.x86.aesni.* intrinsics

miri: refactor dlsym: dispatch symbols via the normal shim mechanism

miri: support getentropy on macOS as a foreign item

miri: tree Borrows: do not create new tags as 'Active'

add missing inline attributes to Duration trait impls

stabilize Option::as_(mut_)slice

reuse existing Somes in Option::(x)or

fix generic bound of str::SplitInclusive's DoubleEndedIterator impl

cargo: refactor(toml): Make manifest file layout more consitent

cargo: add new package cache lock modes

cargo: add unsupported short suggestion for --out-dir flag

cargo: crates-io: add doc comment for NewCrate struct

cargo: feat: add Edition2024

cargo: prep for automating MSRV management

cargo: set and verify all MSRVs in CI

rustdoc-search: fix bug with multi-item impl trait

rustdoc: rename issue-\d+.rs tests to have meaningful names (part 2)

rustdoc: Show enum discrimant if it is a C-like variant

rustfmt: adjust span derivation for const generics

clippy: impl_trait_in_params now supports impls and traits

clippy: into_iter_without_iter: walk up deref impl chain to find iter methods

clippy: std_instead_of_core: avoid lint inside of proc-macro

clippy: avoid invoking ignored_unit_patterns in macro definition

clippy: fix items_after_test_module for non root modules, add applicable suggestion

clippy: fix ICE in redundant_locals

clippy: fix: avoid changing drop order

clippy: improve redundant_locals help message

rust-analyzer: add config option to use rust-analyzer specific target dir

rust-analyzer: add configuration for the default action of the status bar click action in VSCode

rust-analyzer: do flyimport completions by prefix search for short paths

rust-analyzer: add assist for applying De Morgan's law to Iterator::all and Iterator::any

rust-analyzer: add backtick to surrounding and auto-closing pairs

rust-analyzer: implement tuple return type to tuple struct assist

rust-analyzer: ensure rustfmt runs when configured with ./

rust-analyzer: fix path syntax produced by the into_to_qualified_from assist

rust-analyzer: recognize custom main function as binary entrypoint for runnables

Rust Compiler Performance Triage

A quiet week, with few regressions and improvements.

Triage done by @simulacrum. Revision range: 9998f4add..84d44dd

1 Regressions, 2 Improvements, 4 Mixed; 1 of them in rollups

68 artifact comparisons made in total

Full report here

Approved RFCs

Changes to Rust follow the Rust RFC (request for comments) process. These are the RFCs that were approved for implementation this week:

No RFCs were approved this week.

Final Comment Period

Every week, the team announces the 'final comment period' for RFCs and key PRs which are reaching a decision. Express your opinions now.

RFCs

[disposition: merge] RFC: Remove implicit features in a new edition

Tracking Issues & PRs

[disposition: merge] Bump COINDUCTIVE_OVERLAP_IN_COHERENCE to deny + warn in deps

[disposition: merge] document ABI compatibility

[disposition: merge] Broaden the consequences of recursive TLS initialization

[disposition: merge] Implement BufRead for VecDeque<u8>

[disposition: merge] Tracking Issue for feature(file_set_times): FileTimes and File::set_times

[disposition: merge] impl Not, Bit{And,Or}{,Assign} for IP addresses

[disposition: close] Make RefMut Sync

[disposition: merge] Implement FusedIterator for DecodeUtf16 when the inner iterator does

[disposition: merge] Stabilize {IpAddr, Ipv6Addr}::to_canonical

[disposition: merge] rustdoc: hide #[repr(transparent)] if it isn't part of the public ABI

New and Updated RFCs

[new] Add closure-move-bindings RFC

[new] RFC: Include Future and IntoFuture in the 2024 prelude

Call for Testing

An important step for RFC implementation is for people to experiment with the implementation and give feedback, especially before stabilization. The following RFCs would benefit from user testing before moving forward:

No RFCs issued a call for testing this week.

If you are a feature implementer and would like your RFC to appear on the above list, add the new call-for-testing label to your RFC along with a comment providing testing instructions and/or guidance on which aspect(s) of the feature need testing.

Upcoming Events

Rusty Events between 2023-10-11 - 2023-11-08 🦀

Virtual

2023-10-11 | Virtual (Boulder, CO, US) | Boulder Elixir and Rust

Monthly Meetup

2023-10-12 - 2023-10-13 | Virtual (Brussels, BE) | EuroRust

EuroRust 2023

2023-10-12 | Virtual (Nuremberg, DE) | Rust Nuremberg

Rust Nürnberg online

2023-10-18 | Virtual (Cardiff, UK) | Rust and C++ Cardiff

Operating System Primitives (Atomics & Locks Chapter 8)

2023-10-18 | Virtual (Vancouver, BC, CA) | Vancouver Rust

Rust Study/Hack/Hang-out

2023-10-19 | Virtual (Charlottesville, NC, US) | Charlottesville Rust Meetup

Crafting Interpreters in Rust Collaboratively

2023-10-19 | Virtual (Stuttgart, DE) | Rust Community Stuttgart

Rust-Meetup

2023-10-24 | Virtual (Berlin, DE) | OpenTechSchool Berlin

Rust Hack and Learn | Mirror

2023-10-24 | Virtual (Washington, DC, US) | Rust DC

Month-end Rusting—Fun with 🍌 and 🔎!

2023-10-31 | Virtual (Dallas, TX, US) | Dallas Rust

Last Tuesday

2023-11-01 | Virtual (Indianapolis, IN, US) | Indy Rust

Indy.rs - with Social Distancing

Asia

2023-10-11 | Kuala Lumpur, MY | GoLang Malaysia

Rust Meetup Malaysia October 2023 | Event updates Telegram | Event group chat

2023-10-18 | Tokyo, JP | Tokyo Rust Meetup

Rust and the Age of High-Integrity Languages

Europe

2023-10-11 | Brussels, BE | BeCode Brussels Meetup

Rust on Web - EuroRust Conference

2023-10-12 - 2023-10-13 | Brussels, BE | EuroRust

EuroRust 2023

2023-10-12 | Brussels, BE | Rust Aarhus

Rust Aarhus - EuroRust Conference

2023-10-12 | Reading, UK | Reading Rust Workshop

Reading Rust Meetup at Browns

2023-10-17 | Helsinki, FI | Finland Rust-lang Group

Helsinki Rustaceans Meetup

2023-10-17 | Leipzig, DE | Rust - Modern Systems Programming in Leipzig

SIMD in Rust

2023-10-19 | Amsterdam, NL | Rust Developers Amsterdam Group

Rust Amsterdam Meetup @ Terraform

2023-10-19 | Wrocław, PL | Rust Wrocław

Rust Meetup #35

2023-09-19 | Virtual (Washington, DC, US) | Rust DC

Month-end Rusting—Fun with 🍌 and 🔎!

2023-10-25 | Dublin, IE | Rust Dublin

Biome, web development tooling with Rust

2023-10-26 | Augsburg, DE | Rust - Modern Systems Programming in Leipzig

Augsburg Rust Meetup #3

2023-10-26 | Delft, NL | Rust Nederland

Rust at TU Delft

2023-11-07 | Brussels, BE | Rust Aarhus

Rust Aarhus - Rust and Talk beginners edition

North America

2023-10-11 | Boulder, CO, US | Boulder Rust Meetup

First Meetup - Demo Day and Office Hours

2023-10-12 | Lehi, UT, US | Utah Rust

The Actor Model: Fearless Concurrency, Made Easy w/Chris Mena

2023-10-13 | Cambridge, MA, US | Boston Rust Meetup

Kendall Rust Lunch

2023-10-17 | San Francisco, CA, US | San Francisco Rust Study Group

Rust Hacking in Person

2023-10-18 | Brookline, MA, US | Boston Rust Meetup

Boston University Rust Lunch

2023-10-19 | Mountain View, CA, US | Mountain View Rust Meetup

Rust Meetup at Hacker Dojo

2023-10-19 | Nashville, TN, US | Music City Rust Developers

Rust Goes Where It Pleases Pt2 - Rust on the front end!

2023-10-19 | Seattle, WA, US | Seattle Rust User Group

Seattle Rust User Group - October Meetup

2023-10-25 | Austin, TX, US | Rust ATX

Rust Lunch - Fareground

2023-10-25 | Chicago, IL, US | Deep Dish Rust

Rust Happy Hour

Oceania

2023-10-17 | Christchurch, NZ | Christchurch Rust Meetup Group

Christchurch Rust meetup meeting

2023-10-26 | Brisbane, QLD, AU | Rust Brisbane

October Meetup

If you are running a Rust event please add it to the calendar to get it mentioned here. Please remember to add a link to the event too. Email the Rust Community Team for access.

Jobs

Please see the latest Who's Hiring thread on r/rust

Quote of the Week

The Rust mission -- let you write software that's fast and correct, productively -- has never been more alive. So next Rustconf, I plan to celebrate:

All the buffer overflows I didn't create, thanks to Rust

All the unit tests I didn't have to write, thanks to its type system

All the null checks I didn't have to write thanks to Option and Result

All the JS I didn't have to write thanks to WebAssembly

All the impossible states I didn't have to assert "This can never actually happen"

All the JSON field keys I didn't have to manually type in thanks to Serde

All the missing SQL column bugs I caught at compiletime thanks to Diesel

All the race conditions I never had to worry about thanks to the borrow checker

All the connections I can accept concurrently thanks to Tokio

All the formatting comments I didn't have to leave on PRs thanks to Rustfmt

All the performance footguns I didn't create thanks to Clippy

– Adam Chalmers in their RustConf 2023 recap

Thanks to robin for the suggestion!

Please submit quotes and vote for next week!

This Week in Rust is edited by: nellshamrell, llogiq, cdmistman, ericseppanen, extrawurst, andrewpollack, U007D, kolharsam, joelmarcey, mariannegoldin, bennyvasquez.

Email list hosting is sponsored by The Rust Foundation

Discuss on r/rust


Text

Docker Tag and Push Image to Hub | Docker Tagging Explained and Best Practices

Full Video Link: https://youtu.be/X-uuxvi10Cw Hi, a new #video on #DockerImageTagging has been published on the @codeonedigest #youtube channel. Learn how to tag a Docker image and the different ways to do it. #Tagdockerimage #pushdockerimagetodockerhubrepository #

The next step after building a Docker image is to tag it. Tagging is what lets you upload the image to the Docker Hub repository, Azure Container Registry, Elastic Container Registry, and so on. There are different ways to tag a Docker image. Learn how to tag a Docker image, what the best practices for image tagging are, how to tag a container image, and how to tag and push docker…
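As a rough illustration of the tag-then-push step described above, the following shell sketch composes a full image reference and then runs `docker tag` followed by `docker push`. It is only a sketch under stated assumptions: the helper names `image_ref` and `tag_and_push`, the registry host and the repository names are hypothetical, and the `DOCKER` override is a convenience added here for dry runs rather than anything taken from the video.

```shell
#!/bin/sh
# Build a full image reference of the form [registry/]repository:tag.
# $1 = registry host (may be empty), $2 = repository, $3 = tag (defaults to latest)
image_ref() {
    registry=$1 repository=$2 tag=${3:-latest}
    if [ -n "$registry" ]; then
        printf '%s/%s:%s\n' "$registry" "$repository" "$tag"
    else
        printf '%s:%s\n' "$repository" "$tag"
    fi
}

# Tag a locally built image for its target registry, then push it.
# Set DOCKER=echo to print the commands instead of running them.
# $1 = local image (name[:tag]), $2 = target reference
tag_and_push() {
    local_image=$1 target=$2
    ${DOCKER:-docker} tag "$local_image" "$target" &&
    ${DOCKER:-docker} push "$target"
}

# Example (placeholders, not run here):
#   target=$(image_ref registry.example.com myteam/myapp 1.4.2)
#   tag_and_push myapp:latest "$target"
```

Passing an explicit version rather than relying on `latest` keeps each pushed image traceable to a specific build, which is one of the commonly recommended tagging practices.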


#docker#docker and Kubernetes#docker build tag#docker compose#docker image tagging#docker image tagging best practices#docker tag and push image to registry#docker tag azure container registry#docker tag command#docker tag image#docker tag push#docker tagging best practices#docker tags explained#docker tutorial#docker tutorial for beginners#how to tag and push docker image#how to tag existing docker image#how to upload image to docker hub repository#push docker image to docker hub repository#Tag docker image#tag docker image after build#what is docker


Text

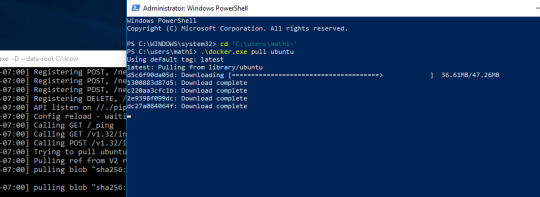

How to set up command-line access to Amazon Keyspaces (for Apache Cassandra) by using the new developer toolkit Docker image

Amazon Keyspaces (for Apache Cassandra) is a scalable, highly available, and fully managed Cassandra-compatible database service. Amazon Keyspaces helps you run your Cassandra workloads more easily by using a serverless database that can scale up and down automatically in response to your actual application traffic. Because Amazon Keyspaces is serverless, there are no clusters or nodes to provision and manage. You can get started with Amazon Keyspaces with a few clicks in the console or a few changes to your existing Cassandra driver configuration.

In this post, I show you how to set up command-line access to Amazon Keyspaces by using the keyspaces-toolkit Docker image. The keyspaces-toolkit Docker image contains commonly used Cassandra developer tooling. The toolkit comes with the Cassandra Query Language Shell (cqlsh) and is configured with best practices for Amazon Keyspaces. The container image is open source and also compatible with Apache Cassandra 3.x clusters.

A command line interface (CLI) such as cqlsh can be useful when automating database activities. You can use cqlsh to run one-time queries and perform administrative tasks, such as modifying schemas or bulk-loading flat files. You also can use cqlsh to enable Amazon Keyspaces features, such as point-in-time recovery (PITR) backups, and to assign resource tags to keyspaces and tables. The following screenshot shows a cqlsh session connected to Amazon Keyspaces and the code to run a CQL create table statement.

Build a Docker image

To get started, download and build the Docker image so that you can run the keyspaces-toolkit in a container. A Docker image is the template for the complete and executable version of an application. It's a way to package applications and preconfigured tools with all their dependencies. To build and run the image for this post, install the latest Docker engine and Git on the host or local environment. The following command builds the image from the source.

docker build --tag amazon/keyspaces-toolkit --build-arg CLI_VERSION=latest https://github.com/aws-samples/amazon-keyspaces-toolkit.git

The preceding command includes the following parameters:

--tag: The name of the image in the name:tag format. Leaving out the tag results in latest.

--build-arg CLI_VERSION: This allows you to specify the version of the base container. Docker images are composed of layers. If you're using the AWS CLI Docker image, aligning versions significantly reduces the size and build times of the keyspaces-toolkit image.

Connect to Amazon Keyspaces

Now that you have a container image built and available in your local repository, you can use it to connect to Amazon Keyspaces. To use cqlsh with Amazon Keyspaces, create service-specific credentials for an existing AWS Identity and Access Management (IAM) user. The service-specific credentials enable IAM users to access Amazon Keyspaces, but not other AWS services.

The following command starts a new container running the cqlsh process.

docker run --rm -ti amazon/keyspaces-toolkit cassandra.us-east-1.amazonaws.com 9142 --ssl -u "SERVICEUSERNAME" -p "SERVICEPASSWORD"

The preceding command includes the following parameters:

run: The Docker command to start the container from an image. It's equivalent to running create and start.

--rm: Automatically removes the container when it exits and creates a container per session or run.

-ti: Allocates a pseudo TTY (t) and keeps STDIN open (i) even if not attached (remove i when user input is not required).

amazon/keyspaces-toolkit: The image name of the keyspaces-toolkit.

cassandra.us-east-1.amazonaws.com: The Amazon Keyspaces endpoint.

9142: The default SSL port for Amazon Keyspaces.

After connecting to Amazon Keyspaces, exit the cqlsh session and terminate the process by using the QUIT or EXIT command.

Drop-in replacement

Now, simplify the setup by assigning an alias (or DOSKEY for Windows) to the Docker command. The alias acts as a shortcut, enabling you to use the alias keyword instead of typing the entire command. You will use cqlsh as the alias keyword so that you can use the alias as a drop-in replacement for your existing Cassandra scripts. The alias contains the parameter -v "$(pwd)":/source, which mounts the current directory of the host. This is useful for importing and exporting data with COPY or using the cqlsh --file command to load external cqlsh scripts.

alias cqlsh='docker run --rm -ti -v "$(pwd)":/source amazon/keyspaces-toolkit cassandra.us-east-1.amazonaws.com 9142 --ssl'

For security reasons, don't store the user name and password in the alias. After setting up the alias, you can create a new cqlsh session with Amazon Keyspaces by calling the alias and passing in the service-specific credentials.

cqlsh -u "SERVICEUSERNAME" -p "SERVICEPASSWORD"

Later in this post, I show how to use AWS Secrets Manager to avoid using plaintext credentials with cqlsh. You can use Secrets Manager to store, manage, and retrieve secrets.

Create a keyspace

Now that you have the container and alias set up, you can use the keyspaces-toolkit to create a keyspace by using cqlsh to run CQL statements. In Cassandra, a keyspace is the highest-order structure in the CQL schema, which represents a grouping of tables. A keyspace is commonly used to define the domain of a microservice or isolate clients in a multi-tenant strategy. Amazon Keyspaces is serverless, so you don't have to configure clusters, hosts, or Java virtual machines to create a keyspace or table. When you create a new keyspace or table, it is associated with an AWS Account and Region. Though a traditional Cassandra cluster is limited to 200 to 500 tables, with Amazon Keyspaces the number of keyspaces and tables for an account and Region is virtually unlimited.
The following command creates a new keyspace by using SingleRegionStrategy, which replicates data three times across multiple Availability Zones in a single AWS Region. Storage is billed by the raw size of a single replica, and there is no network transfer cost when replicating data across Availability Zones. Using keyspaces-toolkit, connect to Amazon Keyspaces and run the following command from within the cqlsh session. CREATE KEYSPACE amazon WITH REPLICATION = {'class': 'SingleRegionStrategy'} AND TAGS = {'domain' : 'shoppingcart' , 'app' : 'acme-commerce'}; The preceding command includes the following parameters: REPLICATION – SingleRegionStrategy replicates data three times across multiple Availability Zones. TAGS – A label that you assign to an AWS resource. For more information about using tags for access control, microservices, cost allocation, and risk management, see Tagging Best Practices. Create a table Previously, you created a keyspace without needing to define clusters or infrastructure. Now, you will add a table to your keyspace in a similar way. A Cassandra table definition looks like a traditional SQL create table statement with an additional requirement for a partition key and clustering keys. These keys determine how data in CQL rows are distributed, sorted, and uniquely accessed. Tables in Amazon Keyspaces have the following unique characteristics: Virtually no limit to table size or throughput – In Amazon Keyspaces, a table’s capacity scales up and down automatically in response to traffic. You don’t have to manage nodes or consider node density. Performance stays consistent as your tables scale up or down. Support for “wide” partitions – CQL partitions can contain a virtually unbounded number of rows without the need for additional bucketing and sharding partition keys for size. This allows you to scale partitions “wider” than the traditional Cassandra best practice of 100 MB. 
No compaction strategies to consider – Amazon Keyspaces doesn’t require defined compaction strategies. Because you don’t have to manage compaction strategies, you can build powerful data models without having to consider the internals of the compaction process. Performance stays consistent even as write, read, update, and delete requirements change. No repair process to manage – Amazon Keyspaces doesn’t require you to manage a background repair process for data consistency and quality. No tombstones to manage – With Amazon Keyspaces, you can delete data without the challenge of managing tombstone removal, table-level grace periods, or zombie data problems. 1 MB row quota – Amazon Keyspaces supports the Cassandra blob type, but storing large blob data greater than 1 MB results in an exception. It’s a best practice to store larger blobs across multiple rows or in Amazon Simple Storage Service (Amazon S3) object storage. Fully managed backups – PITR helps protect your Amazon Keyspaces tables from accidental write or delete operations by providing continuous backups of your table data. The following command creates a table in Amazon Keyspaces by using a cqlsh statement with customer properties specifying on-demand capacity mode, PITR enabled, and AWS resource tags. Using keyspaces-toolkit to connect to Amazon Keyspaces, run this command from within the cqlsh session. CREATE TABLE amazon.eventstore( id text, time timeuuid, event text, PRIMARY KEY(id, time)) WITH CUSTOM_PROPERTIES = { 'capacity_mode':{'throughput_mode':'PAY_PER_REQUEST'}, 'point_in_time_recovery':{'status':'enabled'} } AND TAGS = {'domain' : 'shoppingcart' , 'app' : 'acme-commerce' , 'pii': 'true'}; The preceding command includes the following parameters: capacity_mode – Amazon Keyspaces has two read/write capacity modes for processing reads and writes on your tables. The default for new tables is on-demand capacity mode (the PAY_PER_REQUEST flag). 
point_in_time_recovery – When you enable this parameter, you can restore an Amazon Keyspaces table to a point in time within the preceding 35 days. There is no overhead or performance impact by enabling PITR.

TAGS – Allows you to organize resources, define domains, specify environments, allocate cost centers, and label security requirements.

Insert rows

Before inserting data, check if your table was created successfully. Amazon Keyspaces performs data definition language (DDL) operations, such as creating and deleting tables, asynchronously. You also can monitor the creation status of a new resource programmatically by querying the system schema table. Also, you can use a toolkit helper for exponential backoff.

Check for table creation status

Cassandra provides information about the running cluster in its system tables. With Amazon Keyspaces, there are no clusters to manage, but it still provides system tables for the Amazon Keyspaces resources in an account and Region. You can use the system tables to understand the creation status of a table. The system_schema_mcs keyspace is a new system keyspace with additional content related to serverless functionality.

Using keyspaces-toolkit, run the following SELECT statement from within the cqlsh session to retrieve the status of the newly created table.

SELECT keyspace_name, table_name, status FROM system_schema_mcs.tables WHERE keyspace_name = 'amazon' AND table_name = 'eventstore';

The following screenshot shows an example of output for the preceding CQL SELECT statement.

Insert sample data

Now that you have created your table, you can use CQL statements to insert and read sample data. Amazon Keyspaces requires all write operations (insert, update, and delete) to use the LOCAL_QUORUM consistency level for durability. With reads, an application can choose between eventual consistency and strong consistency by using LOCAL_ONE or LOCAL_QUORUM consistency levels.
The benefits of eventual consistency in Amazon Keyspaces are higher availability and reduced cost. See the following code.

CONSISTENCY LOCAL_QUORUM;

INSERT INTO amazon.eventstore(id, time, event) VALUES ('1', now(), '{eventtype:"click-cart"}');

INSERT INTO amazon.eventstore(id, time, event) VALUES ('2', now(), '{eventtype:"showcart"}');

INSERT INTO amazon.eventstore(id, time, event) VALUES ('3', now(), '{eventtype:"clickitem"}') IF NOT EXISTS;

SELECT * FROM amazon.eventstore;

The preceding code uses IF NOT EXISTS or lightweight transactions to perform a conditional write. With Amazon Keyspaces, there is no heavy performance penalty for using lightweight transactions. You get similar performance characteristics of standard insert, update, and delete operations.

The following screenshot shows the output from running the preceding statements in a cqlsh session. The three INSERT statements added three unique rows to the table, and the SELECT statement returned all the data within the table.

Export table data to your local host

You now can export the data you just inserted by using the cqlsh COPY TO command. This command exports the data to the source directory, which you mounted earlier to the working directory of the Docker run when creating the alias. The following cqlsh statement exports your table data to the export.csv file located on the host machine.

CONSISTENCY LOCAL_ONE;

COPY amazon.eventstore(id, time, event) TO '/source/export.csv' WITH HEADER=false;

The following screenshot shows the output of the preceding command from the cqlsh session. After the COPY TO command finishes, you should be able to view the export.csv from the current working directory of the host machine.

For more information about tuning export and import processes when using cqlsh COPY TO, see Loading data into Amazon Keyspaces with cqlsh.

Use credentials stored in Secrets Manager

Previously, you used service-specific credentials to connect to Amazon Keyspaces.
In the following example, I show how to use the keyspaces-toolkit helpers to store and access service-specific credentials in Secrets Manager. The helpers are a collection of scripts bundled with keyspaces-toolkit to assist with common tasks.

By overriding the default entry point cqlsh, you can call the aws-sm-cqlsh.sh script, a wrapper around the cqlsh process that retrieves the Amazon Keyspaces service-specific credentials from Secrets Manager and passes them to the cqlsh process. This script allows you to avoid hard-coding the credentials in your scripts. The following diagram illustrates this architecture.

Configure the container to use the host’s AWS CLI credentials

The keyspaces-toolkit extends the AWS CLI Docker image, making keyspaces-toolkit extremely lightweight. Because you may already have the AWS CLI Docker image in your local repository, keyspaces-toolkit adds only an additional 10 MB layer extension to the AWS CLI. This is approximately 15 times smaller than using cqlsh from the full Apache Cassandra 3.11 distribution.

The AWS CLI runs in a container and doesn’t have access to the AWS credentials stored on the container’s host. You can share credentials with the container by mounting the ~/.aws directory. Mount the host directory to the container by using the -v parameter. To validate a proper setup, the following command lists current AWS CLI named profiles.

docker run --rm -ti -v ~/.aws:/root/.aws --entrypoint aws amazon/keyspaces-toolkit configure list-profiles

The ~/.aws directory is a common location for the AWS CLI credentials file. If you configured the container correctly, you should see a list of profiles from the host credentials. For instructions about setting up the AWS CLI, see Step 2: Set Up the AWS CLI and AWS SDKs.
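As a rough illustration of what the profile listing above depends on, the following Python sketch reads profile names from the two INI-style files that conventionally live in ~/.aws. It is a simplified model and not the AWS CLI's actual implementation. The function name list_profiles and the sample file contents are hypothetical, and the sketch assumes the usual convention that the credentials file uses plain [name] sections while the config file prefixes non-default profiles with "profile ".

```python
import configparser


def _sections(ini_text):
    """Return the section names found in an INI-formatted string."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(ini_text)
    return parser.sections()


def list_profiles(credentials_text, config_text=""):
    """Collect profile names from credentials-style and config-style text.

    Assumption: config sections look like "[profile dev]" and
    credentials sections look like "[dev]"; "[default]" appears as-is in both.
    """
    names = set(_sections(credentials_text))
    for section in _sections(config_text):
        prefix = "profile "
        names.add(section[len(prefix):] if section.startswith(prefix) else section)
    return sorted(names)


# Hypothetical file contents standing in for ~/.aws/credentials and ~/.aws/config.
sample_credentials = "[default]\naws_access_key_id = EXAMPLE\n\n[dev]\naws_access_key_id = EXAMPLE2\n"
sample_config = "[default]\nregion = us-east-1\n\n[profile staging]\nregion = eu-west-1\n"

print(list_profiles(sample_credentials, sample_config))
```

If the mounted directory is visible inside the container, the same names should show up in the list-profiles output. If the list is empty, the mount path is the first thing to check.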
Store credentials in Secrets Manager

Now that you have configured the container to access the host’s AWS CLI credentials, you can use the Secrets Manager API to store the Amazon Keyspaces service-specific credentials in Secrets Manager. The secret name keyspaces-credentials in the following command is also used in subsequent steps.

docker run --rm -ti -v ~/.aws:/root/.aws --entrypoint aws amazon/keyspaces-toolkit secretsmanager create-secret --name keyspaces-credentials --description "Store Amazon Keyspaces Generated Service Credentials" --secret-string '{"username":"SERVICEUSERNAME","password":"SERVICEPASSWORD","engine":"cassandra","host":"SERVICEENDPOINT","port":"9142"}'

The preceding command includes the following parameters:

--entrypoint – The default entry point is cqlsh, but this command uses this flag to access the AWS CLI.

--name – The name used to identify the key to retrieve the secret in the future.

--secret-string – Stores the service-specific credentials. Replace SERVICEUSERNAME and SERVICEPASSWORD with your credentials. Replace SERVICEENDPOINT with the service endpoint for the AWS Region.

Creating and storing secrets requires CreateSecret and GetSecretValue permissions in your IAM policy. As a best practice, rotate secrets periodically when storing database credentials.

Use the Secrets Manager helper script

Use the Secrets Manager helper script to sign in to Amazon Keyspaces by replacing the user and password fields with the secret key from the preceding keyspaces-credentials command.

docker run --rm -ti -v ~/.aws:/root/.aws --entrypoint aws-sm-cqlsh.sh amazon/keyspaces-toolkit keyspaces-credentials --ssl --execute "DESCRIBE Keyspaces"

The preceding command includes the following parameters:

-v – Used to mount the directory containing the host’s AWS CLI credentials file.

--entrypoint – Use the helper by overriding the default entry point of cqlsh to access the Secrets Manager helper script, aws-sm-cqlsh.sh.
keyspaces-credentials – The key to access the credentials stored in Secrets Manager.

--execute – Runs a CQL statement.

Update the alias

You now can update the alias so that your scripts don’t contain plaintext passwords. You also can manage users and roles through Secrets Manager. The following code sets up a new alias by using the keyspaces-toolkit Secrets Manager helper, which retrieves the service-specific credentials from Secrets Manager and passes them to cqlsh.

alias cqlsh='docker run --rm -ti -v ~/.aws:/root/.aws -v "$(pwd)":/source --entrypoint aws-sm-cqlsh.sh amazon/keyspaces-toolkit keyspaces-credentials --ssl'

To have the alias available in every new terminal session, add the alias definition to your .bashrc file, which is executed on every new terminal window. You can usually find this file in $HOME/.bashrc or $HOME/.bash_aliases (loaded by $HOME/.bashrc).

Validate the alias

Now that you have updated the alias with the Secrets Manager helper, you can use cqlsh without the Docker details or credentials, as shown in the following code.

cqlsh --execute "DESCRIBE TABLE amazon.eventstore;"

The following screenshot shows the running of the cqlsh DESCRIBE TABLE statement by using the alias created in the previous section. In the output, you should see the table definition of the amazon.eventstore table you created in the previous step.

Conclusion

In this post, I showed how to get started with Amazon Keyspaces and the keyspaces-toolkit Docker image. I used Docker to build an image and run a container for a consistent and reproducible experience. I also used an alias to create a drop-in replacement for existing scripts, and used built-in helpers to integrate cqlsh with Secrets Manager to store service-specific credentials. Now you can use the keyspaces-toolkit with your Cassandra workloads.

As a next step, you can store the image in Amazon Elastic Container Registry, which allows you to access the keyspaces-toolkit from CI/CD pipelines and other AWS services such as AWS Batch.
Additionally, you can control the image lifecycle of the container across your organization. You can even attach policies that expire images based on age or download count. For more information, see Pushing an image.

Cheat sheet of useful commands

I did not cover the following commands in this blog post, but they will be helpful when you work with cqlsh, AWS CLI, and Docker.

--- Docker ---

#To view the logs from the container. Helpful when debugging
docker logs CONTAINERID

#Exit code of the container. Helpful when debugging
docker inspect createtablec --format='{{.State.ExitCode}}'

--- CQL ---

#Describe keyspace to view keyspace definition
DESCRIBE KEYSPACE keyspace_name;

#Describe table to view table definition
DESCRIBE TABLE keyspace_name.table_name;

#Select samples with limit to minimize output
SELECT * FROM keyspace_name.table_name LIMIT 10;

--- Amazon Keyspaces CQL ---

#Change provisioned capacity for tables
ALTER TABLE keyspace_name.table_name WITH custom_properties={'capacity_mode':{'throughput_mode': 'PROVISIONED', 'read_capacity_units': 4000, 'write_capacity_units': 3000}} ;

#Describe current capacity mode for tables
SELECT keyspace_name, table_name, custom_properties FROM system_schema_mcs.tables where keyspace_name = 'amazon' and table_name='eventstore';

--- Linux ---

#Count the files in the current directory and its subdirectories
find . -type f | wc -l

#Remove header from csv
sed -i '1d' myData.csv

About the Author

Michael Raney is a Solutions Architect with Amazon Web Services.

https://aws.amazon.com/blogs/database/how-to-set-up-command-line-access-to-amazon-keyspaces-for-apache-cassandra-by-using-the-new-developer-toolkit-docker-image/


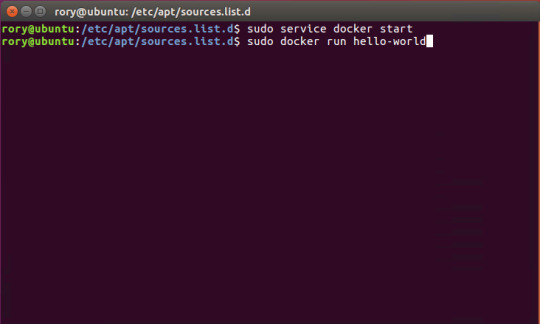

How to Install and Use Docker on CentOS

Docker is a containerization technology that allows you to quickly build, test and deploy applications as portable, self-sufficient containers that can run virtually anywhere.

A container is a way to package software together with the binaries and settings it needs, so that the software runs isolated within an operating system.

In this tutorial, we will install Docker CE on CentOS 7 and explore the basic Docker commands and concepts. Let’s go!

Prerequisites

Before proceeding with this tutorial, make sure that you have installed a CentOS 7 server. (You may want to see our tutorial: How to install a CentOS 7 image.)

Install Docker on CentOS

Although the Docker package is available in the official CentOS 7 repository, it may not always be the latest version. The recommended approach is to install Docker from Docker’s own repositories.

To install Docker on your CentOS 7 server follow the steps below:

1. Start by updating your system packages and install the required dependencies:

sudo yum update

sudo yum install yum-utils device-mapper-persistent-data lvm2

2. Next, run the following command which will add the Docker stable repository to your system:

sudo yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

3. Now that the Docker repository is enabled, install the latest version of Docker CE (Community Edition) using yum by typing:

sudo yum install docker-ce

4. Once the Docker package is installed, start the Docker daemon and enable it to automatically start at boot time:

sudo systemctl start docker

sudo systemctl enable docker

5. To verify that the Docker service is running type:

sudo systemctl status docker

6. The output should look something like this:

● docker.service - Docker Application Container Engine
   Loaded: loaded (/usr/lib/systemd/system/docker.service; enabled; vendor preset: disabled)
   Active: active (running) since Wed 2018-10-31 08:51:20 UTC; 7s ago
     Docs: https://docs.docker.com
 Main PID: 2492 (dockerd)
   CGroup: /system.slice/docker.service
           ├─2492 /usr/bin/dockerd
           └─2498 docker-containerd --config /var/run/docker/containerd/containerd.toml

At the time of writing, the current stable version of Docker is 18.06.1. To print the Docker version, type:

docker -v

Docker version 18.06.1-ce, build e68fc7a

Executing the Docker Command Without Sudo

By default, managing Docker requires administrator privileges. If you want to run Docker commands as a non-root user without prepending sudo, you need to add your user to the docker group, which is created during the installation of the Docker CE package. You can do that by typing:

sudo usermod -aG docker $USER

$USER is an environment variable that holds your username.

Log out and log back in so that the group membership is refreshed.

To verify Docker is installed successfully and that you can run docker commands without sudo, issue the following command which will download a test image, run it in a container, print a “Hello from Docker” message and exit:

docker container run hello-world

The output should look like the following:

Unable to find image 'hello-world:latest' locally
latest: Pulling from library/hello-world
9bb5a5d4561a: Pull complete
Digest: sha256:f5233545e43561214ca4891fd1157e1c3c563316ed8e237750d59bde73361e77
Status: Downloaded newer image for hello-world:latest

Hello from Docker!
This message shows that your installation appears to be working correctly.

Docker command line interface

Now that we have a working Docker installation, let’s go over the basic syntax of the docker CLI.

The docker command line takes the following form:

docker [option] [subcommand] [arguments]

You can list all available commands by typing docker with no parameters:

docker

If you need more help on any [subcommand], just type:

docker [subcommand] --help

Docker Images

A Docker image is made up of a series of layers representing instructions in the image’s Dockerfile that make up an executable software application. An image is an immutable binary file including the application and all other dependencies such as binaries, libraries, and instructions necessary for running the application. In short, a Docker image is essentially a snapshot of a Docker container.

Docker Hub is a cloud-based registry service which, among other things, is used for keeping Docker images in either public or private repositories.

To search the Docker Hub repository for an image just use the search subcommand. For example, to search for the CentOS image, run:

docker search centos

The output should look like the following:

NAME                              DESCRIPTION                                     STARS   OFFICIAL   AUTOMATED
centos                            The official build of CentOS.                   4257    [OK]
ansible/centos7-ansible           Ansible on Centos7                              109                [OK]
jdeathe/centos-ssh                CentOS-6 6.9 x86_64 / CentOS-7 7.4.1708 x86_…   94                 [OK]
consol/centos-xfce-vnc            Centos container with "headless" VNC session…   52                 [OK]
imagine10255/centos6-lnmp-php56   centos6-lnmp-php56                              40                 [OK]
tutum/centos                      Simple CentOS docker image with SSH access      39

As you can see, the search results are printed as a table with five columns: NAME, DESCRIPTION, STARS, OFFICIAL and AUTOMATED. An official image is an image that Docker develops in conjunction with upstream partners.

If we want to download the official build of CentOS 7, we can do that by using the image pull subcommand:

docker image pull centos

Using default tag: latest
latest: Pulling from library/centos
469cfcc7a4b3: Pull complete
Digest: sha256:989b936d56b1ace20ddf855a301741e52abca38286382cba7f44443210e96d16
Status: Downloaded newer image for centos:latest

Depending on your Internet speed, the download may take a few seconds or a few minutes. Once the image is downloaded we can list the images with:

docker image ls

The output should look something like the following:

REPOSITORY    TAG      IMAGE ID       CREATED       SIZE
hello-world   latest   e38bc07ac18e   3 weeks ago   1.85kB
centos        latest   e934aafc2206   4 weeks ago   199MB
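If you want to process this listing in a script rather than read it by eye, one option is to ask Docker for one JSON object per line with docker image ls --format '{{json .}}' and parse that. The Python sketch below shows only the parsing step. The helper name parse_image_lines is made up for this example, and the sample text is hand-written from the two images listed above with most fields left out, so treat the exact set of keys as an assumption to check against your Docker version.

```python
import json


def parse_image_lines(output):
    """Parse one-JSON-object-per-line text into (repository, tag, size) tuples."""
    images = []
    for line in output.splitlines():
        line = line.strip()
        if not line:
            continue  # skip blank lines between records
        record = json.loads(line)
        images.append((record["Repository"], record["Tag"], record["Size"]))
    return images


# Hand-written sample shaped like `docker image ls --format '{{json .}}'` output.
# Only a few fields are included here; real output carries more keys.
sample = """
{"Repository": "hello-world", "Tag": "latest", "ID": "e38bc07ac18e", "Size": "1.85kB"}
{"Repository": "centos", "Tag": "latest", "ID": "e934aafc2206", "Size": "199MB"}
"""

for repository, tag, size in parse_image_lines(sample):
    print(f"{repository}:{tag} ({size})")
```

Parsing JSON is usually less fragile than splitting the human-readable table on whitespace, because columns such as CREATED contain spaces.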

If for some reason you want to delete an image you can do that with the image rm [image_name] subcommand:

docker image rm centos

Untagged: centos:latest
Untagged: centos@sha256:989b936d56b1ace20ddf855a301741e52abca38286382cba7f44443210e96d16
Deleted: sha256:e934aafc22064b7322c0250f1e32e5ce93b2d19b356f4537f5864bd102e8531f
Deleted: sha256:43e653f84b79ba52711b0f726ff5a7fd1162ae9df4be76ca1de8370b8bbf9bb0

Docker Containers

An instance of an image is called a container. A container represents a runtime for a single application, process, or service.

It may not be the most appropriate comparison, but if you are a programmer you can think of a Docker image as a class and a Docker container as an instance of that class.
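To make the class and instance comparison concrete, here is a small Python sketch. It is a toy model only: the Image and Container classes, their fields and the status strings are invented for illustration and have nothing to do with how Docker is implemented. The point it tries to show is that one immutable image can produce many independent containers, each with its own state.

```python
from dataclasses import dataclass, field
from itertools import count

_next_id = count(1)  # simple counter standing in for container IDs


@dataclass(frozen=True)
class Image:
    """Immutable template: a name plus a default command."""
    name: str
    default_command: str = ""

    def run(self, command=None):
        """Create a new, independent Container from this image."""
        return Container(image=self, command=command or self.default_command)


@dataclass
class Container:
    """Mutable runtime instance created from an Image."""
    image: Image
    command: str
    container_id: int = field(default_factory=lambda: next(_next_id))
    status: str = "running"

    def stop(self):
        self.status = "exited"


centos = Image("centos", "/bin/bash")
first = centos.run()
second = centos.run("echo hi")
first.stop()  # stopping one container does not affect the other or the image
print(first.status, second.status, first.image is second.image)
```

Both containers share the same image object, but stopping the first one leaves the second running, which is the same relationship a class has with its instances.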

We can start, stop, remove and manage a container with the docker container subcommand.

The following command will start a Docker container based on the CentOS image. If you don’t have the image locally, it will download it first:

docker container run centos

At first sight, it may seem to you that nothing happened at all. Well, that is not true. The CentOS container stops immediately after booting up because it does not have a long-running process and we didn’t provide any command, so the container booted up, ran an empty command and then exited.

The switch -it allows us to interact with the container via the command line. To start an interactive container type:

docker container run -it centos /bin/bash

As you can see from the output, once the container is started the command prompt changes, which means that you’re now working from inside the container:

[root@719ef9304412 /]#

To list running containers, type:

docker container ls

CONTAINER ID   IMAGE    COMMAND       CREATED          STATUS          PORTS   NAMES
79ab8e16d567   centos   "/bin/bash"   22 minutes ago   Up 22 minutes           ecstatic_ardinghelli

If you don’t have any running containers the output will be empty.

To view both running and stopped containers, pass it the -a switch:

docker container ls -a

CONTAINER ID   IMAGE         COMMAND       CREATED          STATUS                      PORTS   NAMES
79ab8e16d567   centos        "/bin/bash"   22 minutes ago   Up 22 minutes                       ecstatic_ardinghelli
c55680af670c   centos        "/bin/bash"   30 minutes ago   Exited (0) 30 minutes ago           modest_hawking
c6a147d1bc8a   hello-world   "/hello"      20 hours ago     Exited (0) 20 hours ago             sleepy_shannon

To delete one or more containers just copy the container ID (or IDs) from above and paste them after the container rm subcommand:

docker container rm c55680af670c

Conclusion

You have learned how to install Docker on your CentOS 7 machine and how to download Docker images and manage Docker containers.

This tutorial barely scratches the surface of the Docker ecosystem. In some of our next articles, we will continue to dive into other aspects of Docker. To learn more about Docker check out the official Docker documentation.

If you have any questions or remarks, please leave a comment.


Docker commands cheat sheet

docker exec -it /bin/sh. docker restart. Show running container stats.

All commands below are called as options to the base docker command. Run docker [subcommand] --help for more information on a particular command.

To enter a running container, attach a new shell process to a running container called foo, use: docker exec -it foo /bin/bash.

VOLUME – Creates a mount point with the specified name and marks it as holding externally mounted volumes from native host or other containers.

FROM – Can appear multiple times within a single Dockerfile in order to create multiple images. The tag or digest values are optional. If you omit either of them, the builder assumes latest by default. The builder returns an error if it cannot match the tag value.

CMD – Normal shell processing does not occur when using the exec form. There can only be one CMD instruction in a Dockerfile. If the user specifies arguments to docker run, they override the default specified in CMD.

LABEL – To include spaces within a LABEL value, use quotes and backslashes as you would in command-line parsing.

ENV – The environment variables set using ENV persist when a container is run from the resulting image.

ENTRYPOINT – Prepend exec to get around the drawback of the shell form.

WORKDIR – Can be used multiple times in one Dockerfile.

ARG – Multiple variables may be defined by specifying ARG multiple times. It is not recommended to use build-time variables for passing secrets like GitHub keys, user credentials, etc. Build-time variable values are visible to any user of the image with the docker history command.

ONBUILD – The trigger is executed in the context of the downstream build, as if it had been inserted immediately after the FROM instruction in the downstream Dockerfile. Any build instruction can be registered as a trigger. Triggers are inherited by the "child" build only. In other words, they are not inherited by "grand-children" builds.

HEALTHCHECK – After a certain number of consecutive failures, the container becomes unhealthy. If a single run of the check takes longer than timeout seconds, the check is considered to have failed. It takes retries consecutive failures of the health check for the container to be considered unhealthy. The command's exit status indicates the health status of the container.

SHELL – Allows an alternate shell to be used, such as zsh, csh, tcsh, powershell, and others.
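The health-check rule described above (a check that exits non-zero or runs longer than the timeout counts as a failure, and the container is considered unhealthy only after retries consecutive failures) can be sketched as a small state function in Python. This is a simplified model written for illustration, not Docker's code: the function name health_status is made up, the defaults of 3 retries and a 30 second timeout are assumed to match Docker's documented defaults, and details such as the start period are ignored.

```python
def health_status(results, retries=3, timeout=30.0):
    """Return the health state after a sequence of check runs.

    Each item in `results` is a tuple (exit_code, duration_seconds).
    A run fails if its exit code is non-zero or it exceeds `timeout`.
    """
    status = "starting"
    consecutive_failures = 0
    for exit_code, duration in results:
        failed = exit_code != 0 or duration > timeout
        if failed:
            consecutive_failures += 1
            if consecutive_failures >= retries:
                status = "unhealthy"
        else:
            # Any successful run resets the failure streak.
            consecutive_failures = 0
            status = "healthy"
    return status


# Two failures followed by a success never reach the retry limit.
print(health_status([(1, 0.5), (1, 0.5), (0, 0.5)]))
# Three failures in a row (the last one is a timeout) cross the limit.
print(health_status([(0, 0.5), (1, 0.5), (1, 0.5), (0, 45.0)]))
```

The reset on success is the part that is easy to miss: isolated failures do not accumulate, only an unbroken run of them does.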




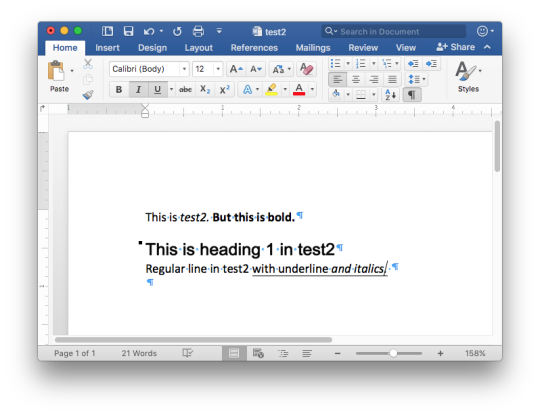

In this tutorial, I will show you how to set up a Docker environment for your Django project while it is still in the development phase. Although I am using Ubuntu 18.04, the steps remain the same for whatever Linux distro you use, with the exception of the Docker and docker-compose installation.

In any typical software development project, we usually have a development process that runs from the developer's machine to VCS to production. We will try to stick to the rules so that you understand where each DevOps tool comes in.

DevOps is all about eliminating yesteryear's phrase, “But it works on my laptop!”. Docker containerization creates the production environment for your application so that it can be deployed anywhere: develop once, deploy anywhere!

Install Docker Engine

You need a Docker runtime engine installed on your server/desktop. Our Docker installation guides should be of great help.

How to install Docker on CentOS / Debian / Ubuntu

Environment Setup

I have set up a GitHub repo for this project called django-docker-dev-app. Feel free to fork it or clone/download it.

First, create the folder to hold your project. My folder workspace is called django-docker-dev-app. cd into the folder, then open it in your IDE.

Dockerfile

In a containerized environment, all applications live in a container. Containers themselves are made up of several images. You can create your own image or use other images from Docker Hub. A Dockerfile is a text document that Docker reads to automatically create/build an image. Our Dockerfile will list all the dependencies required by our project.

Create a file named Dockerfile.

$ nano Dockerfile

In the file, type the following:

#base image
FROM python:3.7-alpine
#maintainer
LABEL Author="CodeGenes"
# The environment variable ensures that the python output is sent straight
# to the terminal without buffering it first
ENV PYTHONUNBUFFERED 1
#copy requirements file to image
COPY ./requirements.txt /requirements.txt
#let pip install required packages
RUN pip install -r requirements.txt
#directory to store app source code
RUN mkdir /app
#switch to /app directory so that everything runs from here
WORKDIR /app
#copy the app code to image working directory
COPY ./app /app
#create user to run the app (it is not recommended to use root)
#we create user called user with -D -> meaning no password is assigned
RUN adduser -D user
#switch from root to user to run our app
USER user

Create the requirements.txt file and add your project's requirements.

Django>=2.1.3,=3.9.0, sh -c "python manage.py runserver 0.0.0.0:8000"

Note the YAML file format! We have one service called ddda (Django Docker Dev App).

We are now ready to build our service:

$ docker-compose build

After a successful build you see:

Successfully tagged djangodockerdevapp_ddda:latest

It means that our image is called djangodockerdevapp_ddda:latest and our service is called ddda.

Now note that we have not yet created our Django project! If you followed the above procedure successfully, then congrats, you have set up a Docker environment for your project!

Next, start your development by doing the following:

Create the Django project:

$ docker-compose run ddda sh -c "django-admin.py startproject app ."

To run your container:

$ docker-compose up

Then visit http://localhost:8000/

Make migrations

$ docker-compose exec ddda python manage.py migrate

From now onwards you will run Django commands like the above command, adding your command after the service name ddda.

That’s it for now. I know it is simple and there are a lot of features we have not covered, like a database, but this is a start!
The project is available on GitHub here.
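If you are wondering where the image name djangodockerdevapp_ddda:latest in the build output comes from, the Python sketch below reproduces it from the folder name and the service name. This is my reading of how older docker-compose 1.x releases derived the name, not something taken from the project: the function names are made up, and newer Compose versions may keep hyphens and underscores or use a different separator, so treat the rule as an assumption.

```python
import re


def legacy_project_name(directory_name):
    """Lowercase the folder name and keep only letters and digits (assumed legacy rule)."""
    return re.sub(r"[^a-z0-9]", "", directory_name.lower())


def legacy_image_name(directory_name, service, tag="latest"):
    """Join the normalized project name and the service name with an underscore."""
    return f"{legacy_project_name(directory_name)}_{service}:{tag}"


# Folder and service names used in this tutorial.
print(legacy_image_name("django-docker-dev-app", "ddda"))
```

With the folder and service names from this tutorial, the sketch prints the same name that the build step reported.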


This Week in Rust 516

Hello and welcome to another issue of This Week in Rust! Rust is a programming language empowering everyone to build reliable and efficient software. This is a weekly summary of its progress and community. Want something mentioned? Tag us at @ThisWeekInRust on Twitter or @ThisWeekinRust on mastodon.social, or send us a pull request. Want to get involved? We love contributions.

This Week in Rust is openly developed on GitHub and archives can be viewed at this-week-in-rust.org. If you find any errors in this week's issue, please submit a PR.

Updates from Rust Community

Official

Announcing Rust 1.73.0

Polonius update

Project/Tooling Updates

rust-analyzer changelog #202

Announcing: pid1 Crate for Easier Rust Docker Images - FP Complete

bit_seq in Rust: A Procedural Macro for Bit Sequence Generation

tcpproxy 0.4 released

Rune 0.13

Rust on Espressif chips - September 29 2023

esp-rs quarterly planning: Q4 2023

Implementing the #[diagnostic] namespace to improve rustc error messages in complex crates

Observations/Thoughts

Safety vs Performance. A case study of C, C++ and Rust sort implementations

Raw SQL in Rust with SQLx

Thread-per-core

Edge IoT with Rust on ESP: HTTP Client

The Ultimate Data Engineering Chadstack. Running Rust inside Apache Airflow

Why Rust doesn't need a standard div_rem: An LLVM tale - CodSpeed

Making Rust supply chain attacks harder with Cackle

[video] Rust 1.73.0: Everything Revealed in 16 Minutes

Rust Walkthroughs

Let's Build A Cargo Compatible Build Tool - Part 5

How we reduced the memory usage of our Rust extension by 4x

Calling Rust from Python

Acceptance Testing embedded-hal Drivers

5 ways to instantiate Rust structs in tests

Research

Looking for Bad Apples in Rust Dependency Trees Using GraphQL and Trustfall

Miscellaneous

Rust, Open Source, Consulting - Interview with Matthias Endler

Edge IoT with Rust on ESP: Connecting WiFi

Bare-metal Rust in Android

[audio] Learn Rust in a Month of Lunches with Dave MacLeod

[video] Rust 1.73 Release Train

[video] Why is the JavaScript ecosystem switching to Rust?

Crate of the Week

This week's crate is yarer, a library and command-line tool to evaluate mathematical expressions.

Thanks to Gianluigi Davassi for the self-suggestion!

Please submit your suggestions and votes for next week!

Call for Participation

Always wanted to contribute to open-source projects but did not know where to start? Every week we highlight some tasks from the Rust community for you to pick and get started!

Some of these tasks may also have mentors available, visit the task page for more information.

Ockam - Make ockam node delete (no args) interactive by asking the user to choose from a list of nodes to delete (tuify)

Ockam - Improve ockam enroll --help text by adding doc comment for identity flag (clap command)

Ockam - Enroll "email: '+' character not allowed"

If you are a Rust project owner and are looking for contributors, please submit tasks here.

Updates from the Rust Project

384 pull requests were merged in the last week

formally demote tier 2 MIPS targets to tier 3

add tvOS to target_os for register_dtor

linker: remove -Zgcc-ld option

linker: remove unstable legacy CLI linker flavors

non_lifetime_binders: fix ICE in lint opaque-hidden-inferred-bound

add async_fn_in_trait lint

add a note to duplicate diagnostics

always preserve DebugInfo in DeadStoreElimination

bring back generic parameters for indices in rustc_abi and make it compile on stable

coverage: allow each coverage statement to have multiple code regions

detect missing => after match guard during parsing

diagnostics: be more careful when suggesting struct fields

don't suggest nonsense suggestions for unconstrained type vars in note_source_of_type_mismatch_constraint

don't call mir.post_mono_checks in codegen

emit feature gate warning for auto traits pre-expansion

ensure that ~const trait bounds on associated functions are in const traits or impls

extend impl's def_span to include its where clauses

fix detecting references to packed unsized fields

fix fast-path for try_eval_scalar_int

fix to register analysis passes with -Zllvm-plugins at link-time

for a single impl candidate, try to unify it with error trait ref

generalize small dominators optimization

improve the suggestion of generic_bound_failure

make FnDef 1-ZST in LLVM debuginfo

more accurately point to where default return type should go

move subtyper below reveal_all and change reveal_all

only trigger refining_impl_trait lint on reachable traits

point to full async fn for future

print normalized ty

properly export function defined in test which uses global_asm!()

remove Key impls for types that involve an AllocId

remove is global hack

remove the TypedArena::alloc_from_iter specialization

show more information when multiple impls apply

suggest pin!() instead of Pin::new() when appropriate

make subtyping explicit in MIR

do not run optimizations on trivial MIR

in smir find_crates returns Vec<Crate> instead of Option<Crate>

add Span to various smir types

miri-script: print which sysroot target we are building

miri: auto-detect no_std where possible

miri: continuation of #3054: enable spurious reads in TB

miri: do not use host floats in simd_{ceil,floor,round,trunc}

miri: ensure RET assignments do not get propagated on unwinding

miri: implement llvm.x86.aesni.* intrinsics

miri: refactor dlsym: dispatch symbols via the normal shim mechanism

miri: support getentropy on macOS as a foreign item

miri: tree Borrows: do not create new tags as 'Active'

add missing inline attributes to Duration trait impls

stabilize Option::as_(mut_)slice

reuse existing Somes in Option::(x)or

fix generic bound of str::SplitInclusive's DoubleEndedIterator impl

cargo: refactor(toml): Make manifest file layout more consistent

cargo: add new package cache lock modes

cargo: add unsupported short suggestion for --out-dir flag

cargo: crates-io: add doc comment for NewCrate struct

cargo: feat: add Edition2024

cargo: prep for automating MSRV management

cargo: set and verify all MSRVs in CI

rustdoc-search: fix bug with multi-item impl trait

rustdoc: rename issue-\d+.rs tests to have meaningful names (part 2)

rustdoc: Show enum discriminant if it is a C-like variant

rustfmt: adjust span derivation for const generics

clippy: impl_trait_in_params now supports impls and traits

clippy: into_iter_without_iter: walk up deref impl chain to find iter methods

clippy: std_instead_of_core: avoid lint inside of proc-macro

clippy: avoid invoking ignored_unit_patterns in macro definition

clippy: fix items_after_test_module for non root modules, add applicable suggestion

clippy: fix ICE in redundant_locals

clippy: fix: avoid changing drop order

clippy: improve redundant_locals help message

rust-analyzer: add config option to use rust-analyzer specific target dir

rust-analyzer: add configuration for the default action of the status bar click action in VSCode

rust-analyzer: do flyimport completions by prefix search for short paths

rust-analyzer: add assist for applying De Morgan's law to Iterator::all and Iterator::any

rust-analyzer: add backtick to surrounding and auto-closing pairs

rust-analyzer: implement tuple return type to tuple struct assist

rust-analyzer: ensure rustfmt runs when configured with ./

rust-analyzer: fix path syntax produced by the into_to_qualified_from assist

rust-analyzer: recognize custom main function as binary entrypoint for runnables

Rust Compiler Performance Triage

A quiet week, with few regressions and improvements.

Triage done by @simulacrum. Revision range: 9998f4add..84d44dd

1 Regression, 2 Improvements, 4 Mixed; 1 of them in rollups

68 artifact comparisons made in total

Full report here

Approved RFCs

Changes to Rust follow the Rust RFC (request for comments) process. These are the RFCs that were approved for implementation this week:

No RFCs were approved this week.

Final Comment Period

Every week, the team announces the 'final comment period' for RFCs and key PRs which are reaching a decision. Express your opinions now.

RFCs

[disposition: merge] RFC: Remove implicit features in a new edition

Tracking Issues & PRs

[disposition: merge] Bump COINDUCTIVE_OVERLAP_IN_COHERENCE to deny + warn in deps

[disposition: merge] document ABI compatibility

[disposition: merge] Broaden the consequences of recursive TLS initialization

[disposition: merge] Implement BufRead for VecDeque<u8>

[disposition: merge] Tracking Issue for feature(file_set_times): FileTimes and File::set_times

[disposition: merge] impl Not, Bit{And,Or}{,Assign} for IP addresses

[disposition: close] Make RefMut Sync

[disposition: merge] Implement FusedIterator for DecodeUtf16 when the inner iterator does

[disposition: merge] Stabilize {IpAddr, Ipv6Addr}::to_canonical

[disposition: merge] rustdoc: hide #[repr(transparent)] if it isn't part of the public ABI

New and Updated RFCs

[new] Add closure-move-bindings RFC

[new] RFC: Include Future and IntoFuture in the 2024 prelude

Call for Testing

An important step for RFC implementation is for people to experiment with the implementation and give feedback, especially before stabilization. The following RFCs would benefit from user testing before moving forward:

No RFCs issued a call for testing this week.

If you are a feature implementer and would like your RFC to appear on the above list, add the new call-for-testing label to your RFC along with a comment providing testing instructions and/or guidance on which aspect(s) of the feature need testing.

Upcoming Events

Rusty Events between 2023-10-11 - 2023-11-08 🦀

Virtual

2023-10-11 | Virtual (Boulder, CO, US) | Boulder Elixir and Rust

Monthly Meetup

2023-10-12 - 2023-10-13 | Virtual (Brussels, BE) | EuroRust

EuroRust 2023

2023-10-12 | Virtual (Nuremberg, DE) | Rust Nuremberg

Rust Nürnberg online

2023-10-18 | Virtual (Cardiff, UK) | Rust and C++ Cardiff

Operating System Primitives (Atomics & Locks Chapter 8)

2023-10-18 | Virtual (Vancouver, BC, CA) | Vancouver Rust

Rust Study/Hack/Hang-out

2023-10-19 | Virtual (Charlottesville, VA, US) | Charlottesville Rust Meetup

Crafting Interpreters in Rust Collaboratively

2023-10-19 | Virtual (Stuttgart, DE) | Rust Community Stuttgart

Rust-Meetup

2023-10-24 | Virtual (Berlin, DE) | OpenTechSchool Berlin

Rust Hack and Learn | Mirror

2023-10-24 | Virtual (Washington, DC, US) | Rust DC

Month-end Rusting—Fun with 🍌 and 🔎!

2023-10-31 | Virtual (Dallas, TX, US) | Dallas Rust

Last Tuesday

2023-11-01 | Virtual (Indianapolis, IN, US) | Indy Rust

Indy.rs - with Social Distancing

Asia

2023-10-11 | Kuala Lumpur, MY | GoLang Malaysia

Rust Meetup Malaysia October 2023 | Event updates Telegram | Event group chat

2023-10-18 | Tokyo, JP | Tokyo Rust Meetup

Rust and the Age of High-Integrity Languages

Europe

2023-10-11 | Brussels, BE | BeCode Brussels Meetup

Rust on Web - EuroRust Conference

2023-10-12 - 2023-10-13 | Brussels, BE | EuroRust

EuroRust 2023

2023-10-12 | Brussels, BE | Rust Aarhus

Rust Aarhus - EuroRust Conference

2023-10-12 | Reading, UK | Reading Rust Workshop

Reading Rust Meetup at Browns

2023-10-17 | Helsinki, FI | Finland Rust-lang Group

Helsinki Rustaceans Meetup

2023-10-17 | Leipzig, DE | Rust - Modern Systems Programming in Leipzig

SIMD in Rust

2023-10-19 | Amsterdam, NL | Rust Developers Amsterdam Group

Rust Amsterdam Meetup @ Terraform

2023-10-19 | Wrocław, PL | Rust Wrocław

Rust Meetup #35

2023-10-25 | Dublin, IE | Rust Dublin

Biome, web development tooling with Rust