#node js web application development

In 2024, which is more efficient—Ruby or Node.js?

When choosing the appropriate technology stack in the ever-changing field of software development, efficiency is a critical consideration. In 2024, performance, scalability, and developer productivity continue to be the primary factors influencing the choice between Node.js and Ruby. Although they have both grown in popularity over time, which one is currently the most efficient on the market?

Node.js is now widely used for enterprise-level platforms and lightweight web apps alike, and it is synonymous with speed and scalability. Conversely, Ruby is well-known for its robust Rails framework and its user-friendly syntax, which facilitate quick prototyping.

To determine which technology is more efficient in 2024 and beyond, this post compares the two on performance, scalability, concurrency management, and developer productivity.

Understanding Node.js

Node.js was created in 2009. Until that point, the techniques for developing server-side applications weren't very appealing. Node.js is an open-source runtime environment, built on Chrome's V8 JavaScript engine, for developing networking and server-side applications. It differs from traditional thread-per-request servers in that its non-blocking, event-driven architecture lets it manage many connections at once without creating a thread for every request.

Effective Node.js Features

Because Node.js is event-driven and asynchronous, it doesn't have to wait for one task to finish before starting the next. While it is reading a file from disk, for example, Node.js can keep handling other requests. As a result, very little time is spent idle waiting on I/O. This makes Node.js suitable for real-time applications, such as chat apps and online gaming.
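The file-reading scenario above can be sketched in a few lines. This is a minimal illustration, not a benchmark: the function name `readWithoutBlocking`, the temp-file name, and the event labels are all made up for the example, and it assumes a recent Node.js with the promise-based `fs` API.

```javascript
const fs = require('node:fs/promises');
const os = require('node:os');
const path = require('node:path');

// Reads a file without blocking and records the order in which things happen.
async function readWithoutBlocking() {
  const events = [];

  // Create a throwaway file so the sketch is self-contained.
  const dir = await fs.mkdtemp(path.join(os.tmpdir(), 'nonblocking-demo-'));
  const file = path.join(dir, 'data.txt');
  await fs.writeFile(file, 'hello from disk');

  // Start the read but do not await it yet; the I/O proceeds in the background.
  const pending = fs.readFile(file, 'utf8').then((text) => {
    events.push('file read finished');
    return text;
  });

  // This runs right away, before the read has completed.
  events.push('handled another request');

  const text = await pending;
  await fs.rm(dir, { recursive: true, force: true });
  return { events, text };
}
```

Calling `readWithoutBlocking()` resolves with the "handled another request" event recorded before "file read finished", because the callback for the read can only run after the current synchronous code has yielded.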

Node.js employs a single-threaded model. In contrast to many traditional server-side platforms, it runs application code on a single thread, which avoids the overhead of context switching between threads. This makes it very efficient, particularly when handling I/O-bound applications.

Google's V8 engine is responsible for compiling JavaScript into machine code. Because V8 has evolved into one of the fastest JavaScript engines available, most applications built on Node.js benefit from its speed.

Node.js's Common Use Cases in 2024

Node.js is still one of the most widely used options for microservices, APIs, and scalable network applications. Many teams use it for real-time applications such as streaming platforms (Netflix is a well-known user), online collaboration tools, and high-traffic websites because of its capacity to manage numerous concurrent users.

Understanding Ruby

Yukihiro Matsumoto created Ruby, a dynamic, object-oriented, general-purpose programming language, in 1995, designing it to be simple and productive. Ruby took off when Ruby on Rails was introduced in 2004 as a framework for web development. By favoring convention over configuration, Rails enabled developers to create web apps at a remarkable rate.

Effective Features of Ruby

Ease of use and developer satisfaction: Ruby is an elegant language to read, which increases developers' output. Because it can deliver the same functionality as other languages with less code, development cycles finish faster, which indirectly improves the project's overall efficiency.

It's important to note that Ruby's metaprogramming enables programmers to create reusable, flexible code. Ruby becomes extremely efficient for a particular class of applications, notably those with a lot of business logic, thanks to this functionality, which can simplify repetitive activities and streamline complex operations.

As a full-stack framework, Rails offers all the tools required to develop online applications with databases at their core. Its built-in tools and libraries significantly cut down on development time, making it a cost-effective option for startups and businesses looking to release their products as soon as feasible.

Popular Ruby Use Cases in 2024

Ruby is still excellent in 2024 in fields where quick prototyping and development are essential. Ruby on Rails is a popular framework for content management systems, e-commerce platforms like Shopify, and customer-facing apps with intricate business logic, thanks to its ease of use and speed of development.

Ruby vs. Node.js: A Comparison of Performance

When evaluating the effectiveness of Node.js and Ruby, a few factors related to speed, concurrency, scalability, and resource utilization are taken into account.

Speed

In general, Node.js is fast for I/O-heavy workloads and usually outperforms Ruby in this respect. Running JavaScript on the V8 engine is very quick, and Node.js increases overall throughput by handling multiple requests concurrently without waiting for each one to finish, following the non-blocking, asynchronous model.

Ruby offers a more convenient development environment than Node.js, but it is slower in raw execution speed. Part of Ruby's comparatively slow performance can be attributed to its global interpreter lock (GIL, called the GVL in CRuby), which prevents multiple threads from running Ruby code at the same time. This shortcoming shows in situations where speed is a concern and high performance is required.

Concurrency

Because Node.js has a single-threaded event loop, it can effectively manage hundreds of concurrent connections. For applications like live chat and video conferencing systems that depend on real-time data processing or streaming, Node.js is therefore a better fit.
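To make the event-loop claim concrete, here is a rough sketch in which timers stand in for I/O-bound requests such as database calls. The helper names `fakeRequest` and `handleConcurrently` are hypothetical, and real network connections would behave less neatly than timers do.

```javascript
// Simulates one I/O-bound request that takes `ms` milliseconds (for example a database call).
function fakeRequest(id, ms) {
  return new Promise((resolve) => setTimeout(() => resolve(id), ms));
}

// Starts `count` requests at once on a single thread and reports how long the batch took.
async function handleConcurrently(count, ms) {
  const started = Date.now();
  const ids = await Promise.all(
    Array.from({ length: count }, (_, i) => fakeRequest(i, ms))
  );
  return { handled: ids.length, elapsedMs: Date.now() - started };
}
```

With something like `handleConcurrently(500, 50)`, the total time should stay close to one 50 ms wait rather than 500 waits in a row, because the single thread is free while every request is pending.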

Ruby allows for multithreading and concurrency, although with several limitations. This is due to Ruby's GIL, which prevents threads from executing Ruby code truly in parallel and may significantly slow down the handling of numerous concurrent connections. Although more recent Ruby versions have improved concurrency handling, Node.js still performs better than Ruby in this regard.

Scalability

In terms of scalability, the event-driven, non-blocking architecture of Node.js makes it an excellent fit. The system responds quickly and can manage heavy loads without crashing, which is ideal for large-scale applications. This is one of the main reasons businesses like Uber and LinkedIn adopted Node.js.

Ruby is scalable, but it needs more memory and CPU to handle the same demand. Ruby's multi-threaded architecture can grow, but again with more system overhead. As a result, in 2024 Ruby is more appropriate for small and mid-sized applications than for large-scale apps that need millions of connections.

Resource Usage

Node.js is light on memory and CPU usage, so it is favored in environments where resource consumption is a factor. Owing to its non-blocking, event-driven model, it can manage a large volume of requests with relatively low resource consumption.

Although Ruby's memory usage has been reduced in recent years, it is still higher than Node.js, particularly in applications with sophisticated logic or high traffic.

Productivity of Developers and Ecosystem

Efficiency also includes developer productivity, which is a crucial component of the equation and goes beyond performance measures. The total efficiency of the technological stack improves with developers' ability to create and manage apps more quickly and effectively.

Node.js: the Developer Ecosystem and npm

Node.js ships with npm (Node Package Manager), whose registry is one of the largest package ecosystems available. Its more than a million packages give developers a ready-made answer for nearly every problem they run into, which cuts down on development time. Combined with npm, the modularity and flexibility of Node.js let developers swiftly incorporate new features into their applications.

Developers used to working with synchronous languages may not be accustomed to Node.js's event-driven, non-blocking architecture. While there may be a modest learning curve compared to some other frameworks, mastering it will pay off in the form of quickly constructing and effectively managing high-performance applications.

Ruby: Gems and Rails

With the Rails framework and its package manager, RubyGems, Ruby boasts a robust ecosystem as well. Ruby on Rails encourages convention over configuration: it gives you pre-packaged solutions and structural conventions, which significantly speeds up development. It's not necessary to start from scratch every time, so development can happen quickly and efficiently.

Ruby has a relatively short learning curve when used with Rails. Rapid onboarding of new developers contributes to the high productivity level of the Ruby ecosystem. However, for applications requiring complicated logic or high scalability, Ruby's performance restrictions may result in slowness.

Updates and Assistance from the Community

Because active communities produce more regular updates and refinements, the strength of a language's user base ultimately affects its long-term efficiency. What matters most is that improvements keep arriving continuously.

2024: The Node.js Community

When it comes to platform upgrades, features, and performance improvements, the Node.js community is among the most active. The Node.js community has worked hard to increase the scalability and security of its applications.

With thousands of contributors delivering changes that optimize the core platform and its surrounding ecosystem, the Node.js community is as robust as ever in 2024.

2024's Ruby Community

Ruby aficionados are still highly active in contributing to the language to keep it relevant in today's advancements, despite the relatively modest size of the community. Ruby has significantly improved in terms of performance in recent years. This includes the release of Ruby 3.0, which is considerably faster and focuses on concurrency and speed improvements. While the Ruby community is focused on ensuring developer satisfaction, they are also actively exploring ways to enhance performance.

Use Cases and Practical Illustrations

The next section discusses particular sectors and use cases where Node.js and Ruby demonstrate their efficacy in 2024.

Node.js in 2024

Real-time apps: Node.js is a strong choice for real-time apps due to its non-blocking architecture. Node.js is used by Trello and Slack because of its ability to manage many users and interactions at once.

Streaming Services: Netflix has been utilizing Node.js for a long time because of the high number of concurrent streams it can handle and the requirement for low streaming latency.

Ruby in 2024

E-Commerce Platforms: Shopify keeps utilizing Ruby because of its ease of use and capacity for quick development. Ruby developers can quickly iterate and implement new features thanks to the Rails framework.

Content and Project Management: Ruby handles complicated business logic efficiently, as demonstrated by Basecamp, which is built with Ruby on Rails.

Last Word

In terms of efficiency in 2024, Node.js beats the competition in tasks requiring parallelism, scalability, and sheer performance. Its event-driven and asynchronous architecture is by design ideal for real-time applications running under high load.

However, Ruby is more productive for developers and allows for faster prototyping, making it an excellent choice for projects where speed of development is more critical than peak performance. Although Ruby's performance has increased over time, Node.js continues to outperform it in terms of concurrency management and scalability.

To put it briefly, Node.js is the better choice for creating high-performance, scalable, and resource-efficient apps. When it comes to quick development and complex business logic for sectors like content management and e-commerce, Ruby continues to lead.

Which technology would be more useful for the projects you are working on right now? Talk to our staff and share the requirements for your project. Whether you're interested in Ruby or Node.js, we would be happy to help you take full advantage of these technologies. Consult with the QWI specialists, and check out our blogs for comparisons of the latest development trends and tech stacks.

#software development company#software development company india#web application development services#web and app development company#node js app development company in india#custom nodejs development company in india#node js web development company in india

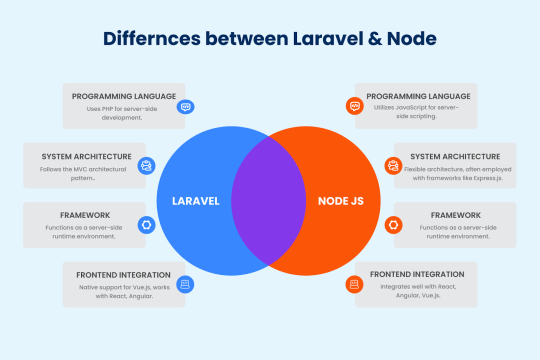

Node.js Vs Laravel: Choosing the Right Web Framework

Differences Between Laravel and Node.js

Language

Node.js: Uses JavaScript, a versatile, high-level language that works for both client-side and server-side development, so one language can cover the whole stack.

Laravel: Uses PHP, a server-side scripting language designed for web development. PHP has a long history and is widely used in traditional web applications.

Architecture

Node.js: Does not enforce a specific architecture, which allows flexibility. A middleware architecture is generally used.

Laravel: Adheres to the MVC (Model-View-Controller) architecture, which promotes a clear separation of concerns and so enhances maintainability and scalability.
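For readers unfamiliar with the middleware style mentioned for Node.js, the following hand-rolled sketch shows the general idea without depending on any framework. The `compose` helper and the `logger`, `auth`, and `handler` functions are hypothetical names for illustration; frameworks such as Express.js provide their own, richer versions of this pattern.

```javascript
// Runs middleware functions in order; each one receives a shared context
// and a `next` callback that hands control to the following middleware.
function compose(middlewares) {
  return function run(ctx) {
    let index = -1;
    function dispatch(i) {
      if (i <= index) throw new Error('next() called more than once');
      index = i;
      const fn = middlewares[i];
      if (!fn) return;
      fn(ctx, () => dispatch(i + 1));
    }
    dispatch(0);
    return ctx;
  };
}

// Hypothetical middleware; the context fields are made up for this sketch.
const logger = (ctx, next) => {
  ctx.log.push(`-> ${ctx.path}`);
  next();
  ctx.log.push(`<- ${ctx.status}`);
};
const auth = (ctx, next) => {
  if (ctx.user) next();
  else ctx.status = 401; // stop the chain for anonymous requests
};
const handler = (ctx) => {
  ctx.status = 200;
  ctx.body = `hello ${ctx.user}`;
};

const app = compose([logger, auth, handler]);
```

Each layer decides whether to pass the request on, which is why cross-cutting concerns such as logging and authentication sit naturally in front of the handler.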

Framework

Node.js: Acts as a runtime environment, enabling JavaScript to be executed on the server side. It is commonly used with frameworks like Express.js.

Laravel: A full-featured server-side framework that provides a robust structure and built-in tools for web development, including routing, authentication, and ORM (Object-Relational Mapping).

Strengths

Node.js: Lightweight and high-performance; its event-driven model handles many tasks concurrently, making it ideal for real-time apps and high user concurrency. It also lets developers use JavaScript for both frontend and backend, streamlining the development process.

Laravel: Provides comprehensive built-in features, including Eloquent ORM, Blade templating, and powerful CLI tools to simplify tasks. It also emphasizes elegant syntax, making the codebase easy to read and maintain.

#werbooz#mobile application development#webdevelopement#custom web development#website design services#Node js Laravel#Node Js vs Laravel

#php web development company#Web Application Development#Mobile App Development#node js web development company#web design and development agency#web design and development companies#web design and development company in india#custom web design and development services#web design and development company in usa#best web design and development#custom web design and development

Node.js Development Company in India - Dahooks

Node.js has emerged as one of the most popular and widely used platforms for web application development. With its efficient and scalable features, Node.js has become the go-to choice for businesses across the globe. In India, one company stands out for its expertise and excellence in Node.js development - Dahooks.

Dahooks, a leading Node.js development company based in India, has been instrumental in delivering cutting-edge solutions to businesses of all sizes. With their team's deep understanding of the latest industry trends and technologies, Dahooks has established itself as a trusted name in the field of Node.js development.

One of the key advantages of choosing Dahooks as your Node.js development partner is their extensive experience in handling complex projects. The team at Dahooks has successfully delivered numerous projects across diverse sectors such as e-commerce, healthcare, finance, media, and more. Their expertise in working with Node.js allows them to build high-performance, scalable, and robust applications that meet clients' specific requirements.

Dahooks ensures that each project undergoes a well-defined and strategic development process. They start by thoroughly analyzing the client's business goals, target audience, and existing infrastructure. This allows them to design a customized Node.js development plan that aligns with the client's objectives. The team at Dahooks strongly believes in the agile development approach, which ensures that the client remains involved throughout the project lifecycle. This promotes transparency, collaboration, and enables faster delivery of high-quality solutions.

When it comes to technical expertise, Dahooks has a highly skilled team of Node.js developers. These professionals possess in-depth knowledge of Node.js and its related technologies, enabling them to build feature-rich and scalable applications. They are proficient in using frameworks such as Express.js, Socket.io, and Nest.js to develop robust backends, real-time applications, and APIs. With their expertise in front-end technologies such as React, Angular, and Vue.js, Dahooks can provide seamless integration between the frontend and backend, delivering a superior user experience.

Client satisfaction is one of the core values at Dahooks. They strive to understand their clients' specific needs and deliver solutions that exceed expectations. The company has earned a reputation for its timely project delivery, cost-effective services, and round-the-clock customer support. Dahooks' dedicated professionals ensure that each client receives personalized attention and is kept updated on the progress of their project.

In addition to their expertise in Node.js development, Dahooks also offers a wide range of complementary services. These include mobile app development, web design, cloud deployment, DevOps consulting, and more. This comprehensive suite of services makes Dahooks a one-stop destination for businesses looking for end-to-end solutions.

Dahooks' success stories and satisfied client testimonials speak volumes about the quality of their services. Their portfolio showcases a diverse range of innovative and successful projects across various industries. The company's strong commitment to excellence has earned them recognition as a top Node.js development company in India.

If you are looking for a reliable and experienced partner for your Node.js development needs in India, Dahooks is undoubtedly the company to consider. With their proven track record, technical expertise, and customer-centric approach, Dahooks is well-equipped to handle even the most complex projects. Trust Dahooks to bring your ideas to life and unlock the true potential of Node.js for your business.

Contact Us

Let's Talk Business!

Visit: https://dahooks.com/web-dev

Mail At: [email protected]

A-83 Sector 63 Noida (UP) 201301, INDIA

+91–7827150289

Suite D800 25420 Kuykendahl Rd, Tomball, TX 77375, United States

+1 302–219–0001

#Node.js Development Company#Node JS Web Development#Node JS web application#Node.js development services#Hire Node.js Developers#Node JS Development Agency#Node JS application development#Custom Node.js Development#Node.js Mobile Application Development#node js development company in india#node js development company in usa

The primary purpose of using modules in Node.js is to help organize code into smaller, more manageable pieces.

Explore the #Node.js vs #Python comparison to make the right choice for your #web #app #development. Learn about their strengths and weaknesses, and find the best #technology for your project in this #comprehensive guide.

#python#web development#node js#hire nodejs developers#node js developers#node js development company#technology#node js application

https://www.appinessworld.com/blogs/web-scraping-using-node-js/

Employee Management System

A web-based employee management application that helps managers and supervisors schedule people based on operational demands.

Industry: Customer Services

Tech stack: DHTMLX, JavaScript, Node.js, Webix

#outsourcing#software development#web development#staff augmentation#it staff augmentation#custom software development#custom software solutions#it staffing company#it staff offshoring#custom software#javascript#node.js#nodejs#node js development services#node js developers#node js development company#node js application#node#webixcustomization#webix

Idiosys USA is a leading Minnesota-based web development agency, providing web development, app development, digital marketing, and software development consulting services in Minnesota and across the United States. We have a team of 50+ skilled IT professionals who provide IT support to businesses of all sizes in different domains. As a Minnesota web design company, we work on healthcare-based e-commerce, business websites for finance organisations, news agency websites and mobile applications, travel and tourism solutions, transport and logistics management systems, and e-commerce applications. Our team is skilled in the latest technologies, such as React, Node.js, Angular, and Next.js. We have also worked with open-source PHP frameworks such as Laravel and Yii2. At Idiosys USA, you will get complete web development solutions. We have some custom solutions for different businesses, but our expertise is in custom website development according to clients' requirements. We believe that we are best in cities.

#web development agency#website development company in usa#software development consulting#minnesota web design#hire web developer#hire web designer#web developer minneapolis#minneapolis web development#website design company#web development consulting#web development minneapolis#minnesota web developers#web design company minneapolis

#web application development services#offshore outsourcing software company#best software development services in india#web application development services in india#cms web development services#top mobile app development companies#dedicated development team#enterprise software development company in india#enterprise mobile app#react native mobile app development#react native app development india#nodejs development agency#node js software development agency

DigitIndus Technologies Private Limited

DigitIndus Technologies Private Limited is one of the best emerging digital marketing and IT companies in Tricity (Mohali, Chandigarh, and Panchkula). We provide cost-effective solutions to grow your business. DigitIndus Technologies provides Digital Marketing, Web Designing, Web Development, Mobile Development, Training, and Internships.

Digital Marketing, Mobile Development, Web Development, website development, software development, Internship, internship with stipend, Six Months Industrial Training, Three Weeks industrial Training, HR Internship, CRM, ERP, PHP Training, SEO Training, Graphics Designing, Machine Learning, Data Science Training, Web Development, data science with python, machine learning with python, MERN Stack training, MEAN Stack training, logo designing, android development, android training, IT consultancy, Business Consultancy, Full Stack training, IOT training, Java Training, NODE JS training, React Native, HR Internship, Salesforce, DevOps, certificates for training, certification courses, Best six months training in chandigarh,Best six months training in mohali, training institute

Certificate of Recognition by StartupIndia-Government of India

DigitIndus Technologies Private Limited, incorporated as a Private Limited Company on 10-01-2024, is recognized as a startup by the Department for Promotion of Industry and Internal Trade. The startup works in the 'IT Services' industry and the 'Web Development' sector. DPIIT No: DIPP156716

Services Offered

Mobile Application Development

Software development

Digital Marketing

Internet Branding

Web Development

Website development

Graphics Designing

Salesforce development

Six months Internships with job opportunities

Six Months Industrial Training

Six weeks Industrial Training

ERP development

IT consultancy

Business consultancy

Logo designing

Full stack development

IOT

Certification courses

Technical Training

JavaScript Frameworks

Step 1) Polyfill

Most JS frameworks started from a need to create polyfills. A polyfill is a JS script that adds features to JavaScript that you expect to be standard across all web browsers. Before the modern era, browsers lacked standardization for many different features across HTML, JS, and CSS (and still do a bit if you're on the bleeding edge of the W3C standards).

Polyfills were how you ensured certain functions were available AND worked the same between browsers.
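Here's roughly what that looks like in practice. This is only a sketch: the `polyfill` helper and `includesImpl` are names I made up, and the stand-in is a simplified version of `Array.prototype.includes` that ignores the optional `fromIndex` argument.

```javascript
// Adds `impl` under `name` only when the environment does not already provide it.
function polyfill(target, name, impl) {
  if (typeof target[name] === 'function') return false; // the native version wins
  Object.defineProperty(target, name, {
    value: impl,
    writable: true,
    configurable: true,
    enumerable: false, // keep it out of for...in loops, like a built-in
  });
  return true;
}

// Simplified stand-in for Array.prototype.includes (no fromIndex support).
// The `item !== item` check is how NaN gets matched, as the real method does.
function includesImpl(searchElement) {
  for (let i = 0; i < this.length; i++) {
    const item = this[i];
    if (item === searchElement || (item !== item && searchElement !== searchElement)) {
      return true;
    }
  }
  return false;
}

// On a modern engine this does nothing, because includes is already built in.
polyfill(Array.prototype, 'includes', includesImpl);
```

The feature check at the top is the important bit: the script only fills the gap when there is one, so newer browsers keep their faster native implementation.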

jQuery is an early polyfill tool with a lot of extra features added that make JS quicker and easier to type, and it is still in use on most websites to date. This is the core standard of frameworks these days, but many are unhappy with it for performance reasons AND because plain JS has incorporated many features that were once unique to jQuery.

jQuery still edges out, because of the very small amount of typing needed to write a jQuery app vs plain JS, which saves on time and bandwidth for small-scale applications.

Many other frameworks even use jQuery as a base library.

Step 2) Encapsulated DOM

Storing data on an element Node starts becoming an issue when you're dealing with multiple elements simultaneously, and need to store data as close as possible to the DOMNode you just grabbed from your HTML, and probably don't want to have to search for it again.

Encapsulation allows you to store your data in an object right next to your element so they're not so far apart.

HTML added the "data-attributes" feature, but that's more of a "loading off the hard drive instead of the memory" situation, where it's convenient, but slow if you need to do it multiple times.
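One way to keep data next to an element without writing it into the HTML is a `WeakMap` keyed by the element. This is a sketch of the idea rather than how any particular framework does it; `setData` and `getData` are made-up helper names, and a plain object stands in for a DOM node so it runs outside a browser.

```javascript
// WeakMap keys are held weakly, so an entry can be garbage collected with its element.
const elementData = new WeakMap();

// Stores a value for `key` alongside the given element.
function setData(element, key, value) {
  let bag = elementData.get(element);
  if (!bag) {
    bag = {};
    elementData.set(element, bag);
  }
  bag[key] = value;
  return element;
}

// Reads a stored value back, or undefined if nothing was stored.
function getData(element, key) {
  const bag = elementData.get(element);
  return bag ? bag[key] : undefined;
}

// Stand-in for a DOM node so the sketch runs in Node.js.
const button = { tagName: 'BUTTON' };
setData(button, 'clicks', 0);
setData(button, 'clicks', getData(button, 'clicks') + 1);
```

The data lives in memory as real JS values instead of strings in attributes, and the element itself is left untouched.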

Encapsulation also allows for promise-style coding and functional coding. I forgot the exact terminology used, but it's where your scripting is designed around calling many different functions back-to-back instead of manipulating variables and doing loops manually.
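The back-to-back calling style is usually called method chaining (or a fluent interface). A tiny sketch of how a wrapper makes it possible, with `Wrapped` and `wrap` as invented names and an array standing in for a set of elements:

```javascript
// A minimal jQuery-like wrapper: every method returns a wrapper, so calls can be chained.
class Wrapped {
  constructor(items) {
    this.items = items;
  }
  filter(fn) {
    return new Wrapped(this.items.filter(fn));
  }
  map(fn) {
    return new Wrapped(this.items.map(fn));
  }
  each(fn) {
    this.items.forEach(fn);
    return this; // returning the wrapper is what keeps the chain going
  }
  value() {
    return this.items;
  }
}

const wrap = (items) => new Wrapped(items);

// No manual loop and no temporary variables: just functions called back-to-back.
const result = wrap([1, 2, 3, 4, 5, 6])
  .filter((n) => n % 2 === 0)
  .map((n) => n * 10)
  .value();
```

Here `result` ends up as `[20, 40, 60]`.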

Step 3) Optimization

Many frameworks do a lot of heavy lifting when it comes to caching frequently used DOM calls, DOM traversal, and other data tools, and they provide standardization for commonly used programming patterns so that you don't have to learn a new one every time you join a new project. (You will still have to learn a new one if you join a new project.)

These optimizations are there to reduce reflowing/redrawing the page, and to reduce the plain JS calls that hurt performance. A lot of these optimizations, however, I would suspect should just be built into the core JS engine.
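The caching part is simple enough to sketch. The names here (`cachedLookup`, `slowQuery`, `stats`) are made up, and `slowQuery` is a stand-in for something like `document.querySelector` so the example runs without a browser.

```javascript
// Wraps an expensive lookup so each selector is only resolved once.
function cachedLookup(lookup) {
  const cache = new Map();
  return function find(selector) {
    if (!cache.has(selector)) {
      cache.set(selector, lookup(selector));
    }
    return cache.get(selector);
  };
}

// Stand-in for a DOM query that counts how often it actually runs.
const stats = { calls: 0 };
const slowQuery = (selector) => {
  stats.calls += 1;
  return { selector };
};

const find = cachedLookup(slowQuery);
find('#menu');
find('#menu'); // served from the cache, slowQuery is not called again
find('#footer');
```

After those three lookups `stats.calls` is 2. The catch, of course, is that a cache like this goes stale if the page changes underneath it, which is part of what frameworks manage for you.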

(Yes I know it's vanilla JS, I don't know why plain is synonymous with Vanilla, but it feels weird to use vanilla instead of plain.)

Step 4) Custom Element and component development

This was a tool to put XML tags or custom HTML tags on a page that used specific rules to create controls that weren't inherent to the HTML standard. It also helped link multiple inputs and other data components together so that the data is centrally located and easy to send from page to page or page to server.

Step 5) Back-end development

This actually started with frameworks like PHP, ASP, JSP, and eventually resulted in Node.js. These were ways to dynamically generate a webpage on the server in order to serve it to the user. (I have not seen a truly dynamic webpage to this day, however, and I suspect a lot of the optimization work is actually being lost simply by programmers being over-reliant on frameworks doing the work for them. I have made this mistake. That's how I know.)

The backend then becomes disjointed from front-end development because of the multitude of different languages, hence Node.js, which creates a way to do server-side scripting in the same JavaScript that front-end developers are more familiar with.

React.js and Angular (2.0 and later) are primarily front-end frameworks, although both can also render on the server to generate dynamic web pages without relying on the user's environment to perform secure transactions.

Step 6) use "Framework" as a catch-all while meaning none of these;

Polyfill isn't really needed as much anymore unless your target demographic is an impoverished nation using hack-ware and Windows 95 PCs. (And even then, they could possibly install Linux, which can use modern lightweight browsers...)

Encapsulation is still needed, as well as libraries that perform commonly used calculations and tasks. I would argue that libraries aren't going anywhere. I would also argue that some frameworks are just bloatware.

One framework I was researching (I won't name names here) was simply a remapping of commands from a Canvas Context to an encapsulated element, and nothing more. There were literally more comments than code. And by more comments, I mean several pages of documentation per 3 lines of code.

Custom Components go hand in hand with encapsulation, but I suspect that there's a bit more than is necessary with these pieces of frameworks, especially on the front end. Tho... If it saves a lot of repetition, who am I to complain?

Back-end development is where things get hairy, everything communicates through HTTP and on the front end the AJAX interface. On the back end? There's two ways data is given, either through a non-html returning web call, *or* through functions that do a lot of heavy lifting for you already.

Which obfuscates how the data is used.

But I haven't really found a bad use of either method. But again; I suspect many things about performance impacts that I can't prove. Specifically because the tools in use are already widely accepted and used.

But since I'm a lightweight reductionist when it comes to coding (except when I'm not, because use-cases exist), I can't help but think most every framework, both front-end and back-end, suffers from a lot of bloat.

And that bloat makes it hard to select which framework would be the best match for the project you're working on. Because of that, you could find yourself at the tail end of a development cycle realizing you're going to have to maintain this as is, in the exact wrong solution that does not fit the scope of the project in any way.

Well. That's what junior developers are for anyway...

Hire Node js Developer | Hire Node js Programmers in USA

Bring your web application to life with Node.js developers from the USA.

#hire Node JS programmers#hire node js programmers usa#dedicated node js programmers#hire node js developer#node js developers for hire

Unlock the Power of Real-Time Web Apps with Professional Node JS Development Services

In the rapidly evolving digital ecosystem, building high-performance, scalable, and secure applications is no longer optional—it's essential. Businesses need robust backend architectures that can handle concurrent connections, process large data streams, and deliver fast user experiences. This is where Node JS development services come into play.

Whether you're a startup looking to launch your MVP or an enterprise aiming to modernize your web infrastructure, leveraging Node JS can be a game-changer. At Orbit Edge Tech, we specialize in offering tailored Node JS development services that align with your business objectives and deliver tangible results.

What is Node.js and Why is It Popular?

Node.js is an open-source, cross-platform JavaScript runtime environment that executes JavaScript code outside a web browser. Built on Google Chrome's V8 engine, Node.js enables developers to use JavaScript to write server-side code, creating dynamic and scalable applications. Its non-blocking I/O and event-driven architecture make Node.js perfect for real-time applications like chat apps, collaborative tools, streaming platforms, and e-commerce systems.

Key Benefits of Using Node.js:

High Performance: Executes code faster due to the V8 engine.

Scalability: Ideal for microservices and APIs.

Unified Language Stack: JavaScript on both front-end and back-end.

Real-Time Capabilities: WebSocket and event-based communication make it suitable for real-time apps.

Large Ecosystem: Over 1 million packages on NPM (Node Package Manager).

Why Choose Orbit Edge Tech for Your Node JS Development Services?

At Orbit Edge Tech, we bring over a decade of experience in building secure, high-performance Node.js applications for clients across the globe. Our team of certified developers understands the intricacies of Node.js and has hands-on experience in various industry verticals like eCommerce, healthcare, logistics, education, fintech, and more.

We don’t believe in one-size-fits-all. Every business is unique, and so are its challenges. That’s why our Node JS development services are highly customized to meet your specific needs.

Our Node.js Expertise Includes:

Custom Node.js Application Development: Get end-to-end Node.js solutions tailored to your requirements, be it a microservice backend or a full-stack application.

API & Web Services Development: We build secure and scalable RESTful and GraphQL APIs to power your mobile and web apps.

Real-Time Application Development: From messaging apps to live streaming and IoT dashboards, we create apps that demand instant data communication.

Node.js Migration Services: Migrate your existing applications to Node.js seamlessly, with minimal downtime and maximum compatibility.

Support & Maintenance: Ensure the smooth running of your applications with our comprehensive support and ongoing performance monitoring.

Use Cases: What Can You Build with Node.js?

Real-Time Chat Applications: Build chat apps with real-time communication and synchronization capabilities using WebSockets and Node.js’ event-driven model.

Streaming Services: Stream audio, video, and other media in real-time with a robust and efficient back-end powered by Node.js.

E-commerce Platforms: Power your online store with fast-loading APIs, secure payment gateways, and scalable microservices.

Single Page Applications (SPAs): Develop dynamic, user-friendly single-page apps using Node.js with React or Angular for seamless performance.

Data-Intensive Dashboards: Handle large volumes of data efficiently with fast backend processing and real-time updates.
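The event-driven model behind the chat use case above can be sketched with Node's built-in `EventEmitter`. This is only an in-process illustration: the `ChatRoom` class and its `join` and `send` methods are hypothetical, and a real chat app would attach one listener per WebSocket connection rather than plain callbacks.

```javascript
const { EventEmitter } = require("node:events");

// Hypothetical in-process chat room built on Node's event emitter.
class ChatRoom extends EventEmitter {
  // Register a participant's callback for every message sent to the room.
  join(onMessage) {
    this.on("message", onMessage);
  }

  // Broadcast a message to all registered participants.
  send(from, text) {
    this.emit("message", { from, text });
  }
}

const room = new ChatRoom();
const aliceInbox = [];
const bobInbox = [];
room.join((msg) => aliceInbox.push(`${msg.from}: ${msg.text}`));
room.join((msg) => bobInbox.push(`${msg.from}: ${msg.text}`));

room.send("alice", "hello");
console.log(bobInbox); // [ 'alice: hello' ]
```

Every participant reacts to the same `message` event, so adding a participant means registering one more listener rather than polling for updates.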

Our Development Process

At Orbit Edge Tech, we follow a proven 6-phase development approach:

Planning & Strategy: We understand your goals and define a roadmap.

UI/UX Design: Our designers create intuitive and engaging interfaces.

Development: Node.js experts write clean, efficient, and modular code.

Testing & QA: Every line of code is rigorously tested for bugs and performance.

Deployment: Seamless release across environments.

Support & Optimization: Continuous monitoring and improvements.

Tech Stack We Use with Node.js

Node.js works exceptionally well with a variety of modern tools and frameworks. At Orbit Edge Tech, we combine Node.js with:

Front-end: React.js, Angular, Vue.js

Databases: MongoDB, PostgreSQL, MySQL, Redis

DevOps: Docker, Kubernetes, Jenkins, GitHub Actions

Cloud Platforms: AWS, Google Cloud, Microsoft Azure

Testing Tools: Mocha, Chai, Jest, Supertest

This allows us to build highly scalable, secure, and future-proof applications for our clients.

Why Node.js is Ideal for Modern Businesses

In today’s competitive digital landscape, speed and scalability are everything. Node.js allows businesses to develop apps faster without compromising on performance or user experience. Here are some compelling reasons why more companies are choosing Node JS development services:

Faster Time to Market: With reusable components and ready-made modules, development time is significantly reduced.

Cost Efficiency: Full-stack JavaScript developers can handle both front-end and back-end, reducing hiring needs.

SEO-Friendly: Fast load times and server-side rendering improve search engine rankings.

Community Support: A large community of developers and contributors ensures constant innovation and problem-solving.

Client Success Story

One of our global retail clients approached us to overhaul their legacy PHP-based backend. We migrated them to a modern Node.js + React stack. The results?

65% faster page loads

99.9% uptime with real-time stock sync

30% increase in online conversions

Seamless integration with 3rd party tools (Stripe, Firebase, etc.)

Conclusion

Investing in professional Node JS development services is a smart move for businesses looking to build scalable, high-performance, and real-time applications. At Orbit Edge Tech, our mission is to empower businesses with cutting-edge technology, and Node.js is at the heart of that mission. Whether you're looking to build an app from scratch, revamp an existing product, or simply need backend support, our experts are ready to deliver. Get in touch with Orbit Edge Tech today and discuss how our Node JS development services can drive your business forward.

Frequently Asked Questions (FAQs)

Q1. What is Node.js used for?

Node.js is commonly used for building scalable server-side applications, including web apps, APIs, real-time applications like chat or streaming apps, and more. Its event-driven model is perfect for high-traffic environments.

Q2. Is Node.js better than PHP or Python?

Node.js is better suited for real-time applications and handling concurrent connections. While PHP and Python have their advantages, Node.js excels in speed, scalability, and using JavaScript across the entire stack.

Q3. How much do Node JS development services cost?

The cost depends on project complexity, required features, and the team’s experience. At Orbit Edge Tech, we offer flexible pricing models tailored to your budget and goals.

Q4. Do you offer support after deployment?

Yes, we provide comprehensive post-deployment support, including monitoring, performance optimization, bug fixes, and feature updates to ensure your application runs smoothly.

Q5. Can you integrate Node.js with my existing front-end?

Absolutely. Node.js works well with popular front-end frameworks like React, Angular, and Vue.js. Our team can help seamlessly integrate the backend with your current or new front-end stack.

Top 10 Tools Every Web Developer Should Know

Web development in 2025 is fast, collaborative, and constantly evolving. Whether you're a beginner or a professional, using the right tools can make or break your development workflow. From writing clean code to deploying powerful applications, having a smart toolkit helps you stay productive, efficient, and competitive.

At Web Era Solutions, we believe that staying ahead in the web development game starts with mastering the essentials. In this blog, we’ll share the top 10 tools every web developer should know — and why they matter.

1. Visual Studio Code (VS Code)

Visual Studio Code is one of the most widely used code editors among web developers. It's lightweight, fast, and highly customizable with thousands of extensions. It supports HTML, CSS, JavaScript, PHP, Python, and more.

Why It’s Great:

IntelliSense for smart code suggestions

Git integration

Extensions for everything from React to Tailwind CSS

Perfect for front-end and full-stack development projects.

2. Git & GitHub

Version control is a must for any developer. Git helps you track changes in your code, while GitHub allows collaboration, storage, and deployment.

Why It’s Great:

Manage code history easily

Work with teams on the same codebase

Deploy projects using GitHub Pages

Essential for modern collaborative web development.

3. Chrome DevTools

Built right into Google Chrome, Chrome DevTools helps you inspect code, debug JavaScript, analyze page load speed, and test responsive design.

Why It’s Great:

Real-time DOM editing

CSS debugging

Network activity analysis

Crucial for performance optimization and debugging.

4. Node.js & NPM

Node.js allows developers to run JavaScript on the server side, while NPM (Node Package Manager) provides access to a registry of well over a million libraries and tools.

Why It’s Great:

Build full-stack web apps using JavaScript

Access to powerful development packages

Efficient and scalable

Ideal for back-end development and building fast APIs.
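As a rough sketch of what running JavaScript on the server looks like, the snippet below builds a tiny JSON API with Node's built-in `http` module. The `route` function and the `/health` path are hypothetical names chosen for illustration, not part of any framework.

```javascript
const http = require("node:http");

// Hypothetical routing function: maps a method and URL to a status and JSON payload.
function route(method, url) {
  if (method === "GET" && url === "/health") {
    return { status: 200, body: { ok: true } };
  }
  return { status: 404, body: { error: "not found" } };
}

// The request handler delegates to route() and serializes the result as JSON.
const server = http.createServer((req, res) => {
  const { status, body } = route(req.method, req.url);
  res.writeHead(status, { "Content-Type": "application/json" });
  res.end(JSON.stringify(body));
});
```

Calling `server.listen(3000)` (the port is an arbitrary choice) would start serving requests. Keeping the routing in a plain function makes it easy to test without opening a socket.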

5. Figma

While not a coding tool, Figma is widely used for UI/UX design and prototyping. It allows developers and designers to collaborate in real-time.

Why It’s Great:

Cloud-based interface

Easy developer handoff

Responsive design previews

Smoothly connects the design and development phases.

6. Bootstrap

Bootstrap is a front-end framework that helps you build responsive, mobile-first websites quickly using pre-designed components and a 12-column grid system.

Why It’s Great:

Saves time with ready-to-use elements

Built-in responsiveness

Well-documented and easy to learn

Perfect for developers who want to speed up the front-end process.

7. Postman

Postman is a tool for testing and developing APIs (Application Programming Interfaces). It’s especially useful for back-end developers and full-stack devs.

Why It’s Great:

Easy API testing interface

Supports REST, GraphQL, and SOAP

Automation and collaboration features

Critical for building and testing web services.

8. Webpack

Webpack is a powerful module bundler that combines JavaScript modules into one or more bundle files and, through loaders and plugins, can also process assets like images, CSS, and HTML.

Why It’s Great:

Speeds up page load time

Optimizes resource management

Integrates with most modern frameworks

Essential for large-scale projects with complex codebases.

9. Tailwind CSS

Tailwind CSS is a utility-first CSS framework that lets you style directly in your markup. It's becoming increasingly popular for its flexibility and speed.

Why It’s Great:

Reduces the need for custom CSS files

Makes design consistent and scalable

Works great with React, Vue, and other JS frameworks

Favored by developers for clean and fast front-end styling.

10. Netlify

Netlify is a modern platform for deploying front-end apps and static websites. It supports continuous integration and streamlines the deployment process.

Why It’s Great:

One-click deployments

Free hosting for small projects

Built-in CI/CD and custom domain setup

Great for deploying portfolio websites and client projects.

Final Thoughts

Mastering these tools gives you a strong foundation as a web developer in 2025. Whether you're coding solo, working in a team, or launching your startup, these tools can dramatically improve your development process and help deliver better results faster.

At Web Era Solutions, we use these tools daily to build high-performance websites, scalable web applications, and full-stack solutions for businesses of all sizes. If you're looking to build a powerful digital presence, we’re here to help.

Ready to Build Something Amazing?

Whether you're a business owner, entrepreneur, or aspiring developer, Web Era Solutions offers professional web development services in Delhi and across India.

Contact us today to discuss your project or learn more about the tools we use to build modern, high-performance websites.

For a free consultation, give us a call or send us an email!
