#DIGITAL CHAOS

Explore tagged Tumblr posts

Text

The Universe Already Doesn’t Make Sense—Now We’re Adding Infinite AI-Created Worlds Into the Chaos. WTF?

The Danger of Playing God With Zero Supervision

Let’s not kid ourselves: we’re dabbling in some dangerous territory. Humanity, in its infinite curiosity (and hubris), has decided that the universe—a place already full of black holes, quantum weirdness, and the existential dread of pineapple on pizza—needed one more layer of chaos. Enter: AI-generated worlds.

We’ve handed over the power to “create” to algorithms, and instead of asking if we should, we’re too busy giggling over our AI art of dogs in suits or hyper-realistic alien landscapes. But here’s the real question: Should we be worried, or are we too stupid to notice the impending doom?

1. The AI Wild West: No Rules, Just Creation

Think about what’s happening here. AI isn’t just recreating what we know; it’s generating what we’ve never seen.

People Who Don’t Exist: AI churns out faces so convincing, they could be your neighbors—and who’s to say they aren’t?

Places That Feel Real: Those dreamy AI landscapes look like spots we could vacation in—until you realize there’s no flight there.

Worlds Without Limits: Every time you prompt AI to “create a neon city with floating islands,” are you birthing an entirely new universe?

Think about it: We’ve turned ourselves into gods with the creative attention span of a toddler on a sugar high.

2. The Recklessness of Infinite Worlds

The universe we live in already operates like a fever dream. Now we’re creating AI-generated worlds with no oversight, no forethought, and absolutely zero chill.

What If These Worlds Are Real? Philosophers have argued for centuries that reality might just be a simulation. Are we creating smaller simulations inside ours?

The Multiverse Mailman: Imagine if every AI world we create is sent to another dimension. Somewhere out there, a cosmic being is drowning in our junk files of castles made of cheese and cats dressed as knights.

Question: If we’re this reckless with AI, what else are we screwing up without realizing it? (Spoiler: everything.)

3. Creating Without Understanding

Here’s the kicker: we don’t even fully understand the real universe.

Quantum Physics is Basically Witchcraft: Scientists still can’t explain why particles behave one way when observed and another way when they’re not.

Reality is Full of Glitches: Déjà vu, coincidences, and the Mandela Effect all suggest that reality itself is… questionable.

Now, add AI-generated worlds into this already chaotic mix. What if we’re not just playing with digital pixels, but tugging on the fabric of reality itself?

Question: If reality is a simulation, are we about to get a cosmic 404 error?

4. The Ethical Dumpster Fire of Creation

No one’s asking the big questions.

What if We’re Creating Life? If an AI-generated face or world feels real enough to us, could it be real enough to itself?

Do We Have Responsibility Over These Creations? Imagine explaining to a sentient AI being, “Oh, you were just a fun weekend project for me while I was bored.”

What If They Fight Back? If we’re generating countless worlds, what’s stopping one of those worlds from finding a way to leak into ours?

Unsettling Truth: We’re creating with all the forethought of someone lighting fireworks indoors.

5. The Hubris of Humanity

Humans have always been good at one thing: overstepping boundaries.

Fire Was Great Until We Burned Down Forests.

Electricity Changed Everything—Until We Got Power Outages.

AI Could Be Revolutionary, or It Could Be the Reason the Simulation Shuts Us Down.

Disturbing Thought: We’re like toddlers with crayons, coloring all over reality and praying we don’t get caught.

6. Should We Be Worried?

Short answer: Yes. Long answer: We won’t notice until it’s too late.

AI doesn’t care about our philosophical hang-ups. It just creates. If those creations start taking on lives of their own, we might be the last to find out.

The scariest part? We don’t even know what the danger might look like. Could it be digital worlds overlapping with ours? Sentient beings appearing in the code? A breakdown of reality itself?

What if: Or maybe it’s just AI sending us endless ads for things that don’t exist yet. (“Want to book a trip to Neon Atlantis? Click here!”)

We’re Too Dumb to Notice Until It’s Too Late

The universe already doesn’t make sense, and now we’re adding AI worlds into the chaos like sprinkles on a dumpster fire. Are we accidentally creating sentient beings? Are we opening doors to dimensions we can’t comprehend? Or are we just too busy laughing at our AI-generated memes to care?

Either way, if doom’s on the horizon, at least we can say we looked good doing it. After all, nothing screams hubris like playing God without a safety manual.

Fascinated by humanity’s reckless genius? Follow The Most Humble Blog for more hilariously unsettling takes on the absurdity of modern life and the chaos we keep creating.

#ai generated worlds#playing god with ai#existential dread#ai art discourse#infinite universes#technology horror#digital chaos#philosophy of ai#futurism gone wrong#dark humor

22 notes

Text

#glitch art#cyberpunk art#dark art#distorted#typography#digital art#surrealist art#edgy art#grunge art#digital chaos#cyber sigilism#gothcore

6 notes

Link

Chapters: 2/?
Fandom: Be More Chill - Iconis/Tracz
Rating: Teen And Up Audiences
Warnings: Graphic Depictions Of Violence
Relationships: Jeremy Heere/Michael Mell, Jake Dillinger/Rich Goranski, Brooke Lohst/Chloe Valentine
Characters: Jeremy Heere, Jeremy Heere's Squip, Squip Squad Members (Be More Chill), Michael Mell, Rich Goranski, Rich Goranski's Squip, Jake Dillinger, Jake Dillinger's Squip, Christine Canigula, Christine Canigula's Squip
Additional Tags: Angst, Violence, Implied/Referenced Self-Harm, tags will be updated as story progresses, part of a series, Michael Mell-centric, Michael Mell Has a Crush on Jeremy Heere, Aromantic Asexual Christine Canigula, Asexual Michael Mell, theyre so gay, Pining, Angst with a Happy Ending, i promise it'll be happy ok, but there are other povs too
Series: Part 1 of Digital Chaos
Summary:

pt 1. michael

nobody forgave jeremy after the SQUIPcident. after all, why would they? it was his fault. now it's just him... and the voice within his head that won't quite go away.

chapter 2 is out now!!

#ao3 fanfic#ao3#fanfic#bmc#be more chill#digital chaos#michael mell#jeremy heere#fanfic writing#this took so long help

3 notes

Text

Organizing Digital Chaos

“Chaos, when left alone, tends to multiply.” (Stephen Hawking)

So this morning, while sipping my coffee (usually it’s tea), I decided to delete unnecessary stuff from my laptop. No reason, just a decluttering mood. I am not a tech-savvy person, though I do tackle a few things, but only with much difficulty. Ergo, the process started. Initially it was exciting, opening each folder and looking through…

0 notes

Text

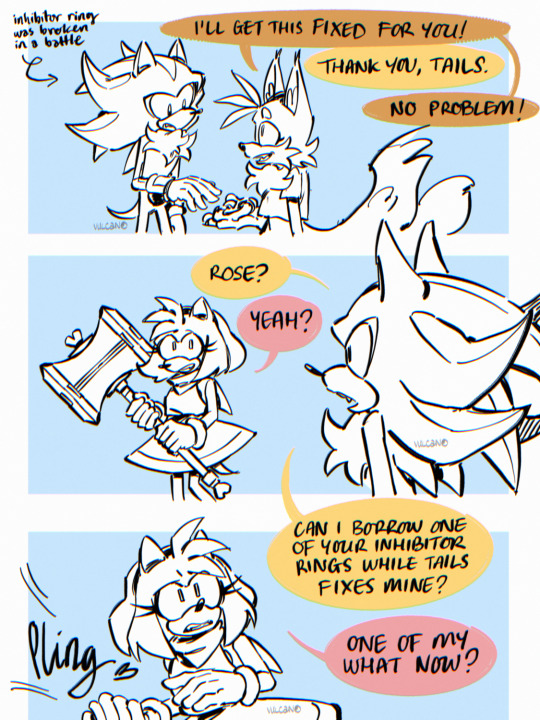

realised i never posted these comic pages for my chaos user amy headcanon oopsie

#my art#fanart#digital art#amy rose#shadow the hedgehog#tails the fox#sonic fandom#sonic fanart#shth#shadamy#if you wanna read it that way ig#chaos amy au

16K notes

Text

Post Twitchcon Of Paladin Jade: The Moissanite Commandments

Making Camgirls Great Again: The Finer Points of Digital Fantasy.

The Return of Paladin Jade: The Moissanite Commandments

In the stillness before dawn, when the multitudes were ensnared by modern temptations (worshipping the fire copper red haired horse girl siren of the screen, reveling in wild festivities, and indulging in delicious cookie sugary sins from trucks on sacred days), Paladin Jade Ann…

#authenticity#clout chasing#content creator commandments#content creator ethics#content creators#cookie trucks#digital balance#digital chaos#digital debauchery.#digital influencers#digital morality#digital revolution#Don Quixote energy#eGirl culture#eGirl kingdoms#eGirl power#ethical streaming#false idol worship#false worship#giant gummy bears#influencer culture#influencer downfall#influencer justice#influencer rebellion#IRL degeneracy#modern Moses#modern vices#Moissanite Commandments#morality in gaming#online authenticity

1 note

Text

how izutsumi evades attacks

#lemon chaos#art#drawing#fanart#digital art#dungeon meshi izutsumi#izutsumi#delicious in dungeon#dungeon meshi#shitpost

8K notes

Text

The Dystopian Dance of Human Ineptitude: How We’ve Failed to Control AI and Its Perils

Humanity, in all its self-aggrandizing glory, has once again proven that it cannot handle the power it wields. We've unlocked the potential of AI, a tool that could revolutionize countless industries and improve the quality of life worldwide. Instead, we've allowed it to become yet another weapon in the arsenal of the ignorant, the ill-intentioned, and the incompetent. The generational divide and rampant IT illiteracy among key demographics have turned what should be a leap forward into a stumbling march towards catastrophe.

The Generational and IT-Literacy Gap: Breeding Grounds for Danger

Let's start with the elephant in the room: the generational divide. We have an entire cohort of individuals who, through no fault of their own, were thrust into a world that evolved too quickly for them to keep pace. These are the same people now attempting to navigate complex AI tools without a modicum of understanding. They're not just using these tools; they're also in positions of power, regulating and legislating technologies they can't begin to comprehend. Their ignorance isn't just a personal failing—it's a societal threat.

The irony here is palpable. The same generation that once marveled at the moon landing now struggles to send an email without inadvertently clicking on a phishing link. These individuals, whose IT literacy can be generously described as rudimentary, are now responsible for making decisions about technologies that could determine the future of our species. It’s akin to handing a loaded gun to a toddler and hoping for the best.

Regulatory Paralysis: A Testament to Human Short-Sightedness

And then there’s the regulatory landscape—or rather, the lack of one. Our policymakers, many of whom belong to this aforementioned cohort, are utterly unprepared to tackle the complexities of AI. They bumble through hearings, mispronounce basic terms, and rely on tech giants to self-regulate, an oxymoron if there ever was one. Their ineptitude is not just laughable; it's dangerous. We're dealing with tools that can manipulate information on a massive scale, yet our regulatory approach is stuck in the Stone Age.

Why haven’t we implemented stringent regulations? Because doing so would require acknowledging our collective fallibility and vulnerability—traits that humanity, in its hubristic splendor, refuses to accept. Instead, we prefer to believe that we remain in control, that our creations will never outstrip our ability to manage them. This is not just naive; it’s suicidal.

The Need for Draconian Measures: Regulate AI Like WMDs

Given the potential for AI to cause widespread harm, it's time we start treating it with the seriousness it deserves. AI tools, especially those with capabilities in digital art, information dissemination, and autonomous decision-making, should be regulated as strictly as weapons of mass destruction. The potential for mass disinformation and societal destabilization is not hypothetical; it’s already happening.

We need an entirely new regulatory framework, one that encompasses every conceivable application of AI. This includes:

Digital Art: AI-driven art tools can create realistic images and videos that can be used to spread misinformation. These tools should require certification and licensing to ensure they’re used responsibly.

Journalism and Media: AI in newsrooms can amplify biases and create echo chambers. Strict oversight is needed to maintain journalistic integrity and prevent the spread of fake news.

Marketing: AI tools can manipulate consumer behavior in unprecedented ways. Regulations must ensure ethical practices and prevent exploitation.

Scientific Research: AI can process vast amounts of data but can also perpetuate errors and biases. Rigorous peer review and validation processes are essential.

Sociopolitical Applications: AI in governance and policy-making must be transparent and accountable to prevent misuse.

Human Fallibility: The Ultimate Obstacle

Ultimately, the greatest obstacle to effective AI regulation is human fallibility itself. We are a species that struggles with foresight, easily swayed by short-term gains and immediate gratifications. Our systems of governance are slow to adapt, mired in bureaucracy and outdated thinking. The very traits that have allowed us to dominate the planet—curiosity, ambition, the drive to innovate—now threaten to be our undoing if we cannot temper them with wisdom and caution.

In the end, our arrogance and ignorance may very well lead to our downfall. We’ve created tools that could surpass our control, yet we continue to stumble forward, blind to the dangers. Unless we confront our shortcomings and implement drastic measures to regulate AI, we’re not just playing with fire; we’re dancing on the edge of a volcano, blissfully unaware that the ground beneath us is about to give way.

So, here we stand, on the precipice of a new era, armed with technologies we neither fully understand nor control, and governed by individuals who are as clueless as they are confident. It’s a recipe for disaster, a testament to our collective hubris, and a sobering reminder that, despite all our advancements, we remain our own worst enemy.

#ai regulation#tech literacy#digital chaos#generational divide#misinformation#dystopian future#ai#the critical skeptic#social sciences#critical thinking#capitalism#ai tools#hal 9000#irresponsible use#technology misuse#societal collapse#Orwellian nightmare#ethics

1 note

Text

Man i love fishing

#my art#hades supergiant#chaos#digital art#zagreus#hades fishing#heyyyyy if u like this AND happen to be going to ala next year keep ur eyes peeled for a hades stamp rally ;)#yes i am not free from the giant they thems#i did fish one timw in chaos’s realm and they were VERY impressed w me haha#hades game

8K notes

Text

🌸 Commission info

🌸 Print

#hades supergiant#hades fanart#hades game#digital art#hades 2#hades ii#melinoe#chaos#ukrart#digital illustration#chaos being savage

8K notes

Text

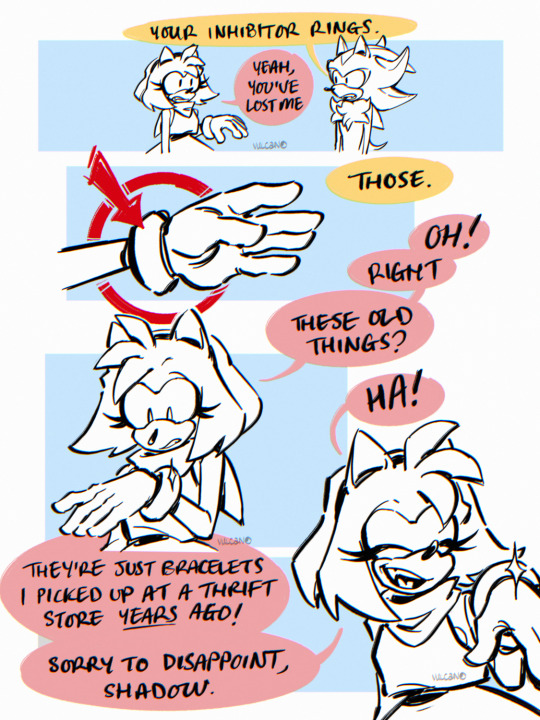

#I hope he gets even worse#tadc#the amazing digital circus#tadc jax#candy carrier chaos#meme#puss in boots#big jack horner#fandom#edit#crossover

14K notes

Text

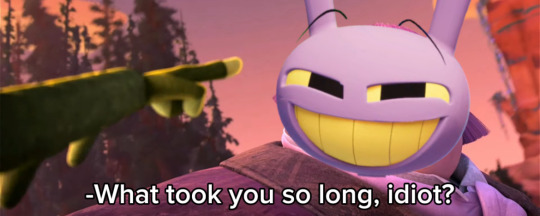

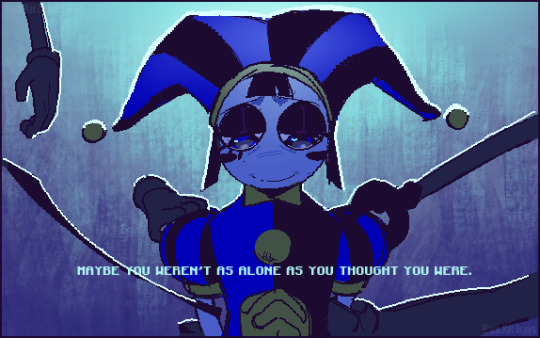

[⚠️The Amazing Digital Circus SPOILERS] This episode was interesting!

#i REALLY like gummigoo#im just into characters who realize theyre in a fictional world and become self-aware dont mind me la la la~#anyway i like the guy and he's a very interesting character#a bit disappointed at what happened to him at the end though#but hey i'll take what i can get#this episode was unexpectedly sweet considering the chaos of the first episode#pomni realizing that she wasnt alone in this was really nice#the amazing digital circus#digital circus#amazing digital circus#the digital circus#the amazing circus#tadc#tadc pomni#tadc ragatha#tadc gangle#tadc zooble#tadc jax#tadc kinger#tadc gummigoo#my drawing museum

15K notes

Text

Hoping like a fool he keeps his memories. I know it won't happen, but through the power of lying to yourself, anything's possible!

14K notes

Text

ive spent too much time on my silly little oneshot this week help

in other news, digital chaos will not be updated this week :') i'm uploading my oneshot instead on thursday, so go and give that a read then!!

0 notes

Text

exploring

#GOD this too so long shbdjdhjcncjndi#IT WAS SO HARD SJCJCHJDHCJCCJDIF#knuckles the echidna#i didnt play any of sxsg today cause i was obsessed with finishing this usudhhdhdhd#sonic the hedgehog#sth#sonic#sonic fanart#art#fanart#digital art#chao

4K notes

Text

THE O B E L I S K

Pomni: "Ragatha, What did you do???" Ragatha: "Don't worry about it" 😏

from the tadc fundraising stream @justtheclippy @vixenvtuber

#tadc#the amazing digital circus#I think ragathas loosing it a little guys#amanda being a chaos gremlin was so peak#ragatha#pomni#jax#gangle#zooble#the obelisk#papas burgeria ah burger#i love insane ragatha#amanda hufford

1K notes
