#what even is a consistent artstyle and workflow

Explore tagged Tumblr posts

Text

late guapoduo wedding fanart

finally gay and happy

#qsmp#qsmp fanart#qsmp cellbit#qsmp roier#guapoduo#spiderbit#what are backgrounds lmao#what even is a consistent artstyle and workflow#anyway#my beloveds#im so happy for them#i have so much qsmp fanart i want to make and neither the skill nor the speed to do any of it ueueueueueueue#hidden's cringeposting tag for the sake of organization

168 notes

·

View notes

Text

“Along For The Ride”, a reasonably complex demo

It's been a while since I've been anticipating people finally seeing one of my demos like I was anticipating people to see "Along For The Ride", not only because it ended up being a very personal project in terms of feel, but also because it was one of those situations where to me it felt like I was genuinely throwing it all the complexity I've ever did in a demo, and somehow keeping the whole thing from falling apart gloriously.

youtube

The final demo.

I'm quite happy with the end result, and I figured it'd be interesting to go through all the technology I threw at it to make it happen in a fairly in-depth manner, so here it goes.

(Note that I don't wanna go too much into the "artistic" side of things; I'd prefer if the demo would speak for itself on that front.)

The starting point

I've started work on what I currently consider my main workhorse for demomaking back in 2012, and have been doing incremental updates on it since. By design the system itself is relatively dumb and feature-bare: its main trick is the ability to load effects, evaluate animation splines, and then render everything - for a while this was more than enough.

Around the summer of 2014, Nagz, IR and myself started working on a demo that eventually became "Háromnegyed Tíz", by our newly formed moniker, "The Adjective". It was for this demo I started experimenting with something that I felt was necessary to be able to follow IR's very post-production heavy artstyle: I began looking into creating a node-based compositing system.

I was heavily influenced by the likes of Blackmagic Fusion: the workflow of being able to visually see where image data is coming and going felt very appealing to me, and since it was just graphs, it didn't feel very complicated to implement either. I had a basic system up and running in a week or two, and the ability to just quickly throw in effects when an idea came around eventually paid off tenfold when it came to the final stage of putting the demo together.

The initial node graph system for Háromnegyed Tíz.

The remainder of the toolset remained relatively consistent over the years: ASSIMP is still the core model loader of the engine, but I've tweaked a few things over time so that every incoming model that arrives gets automatically converted to its own ".assbin" (a name that never stops being funny) format, something that's usually considerably more compact and faster to load than formats like COLLADA or FBX. Features like skinned animation were supported starting with "Signal Lost", but were never spectacularly used - still, it was a good feeling to be able to work with an engine that had it in case we needed it.

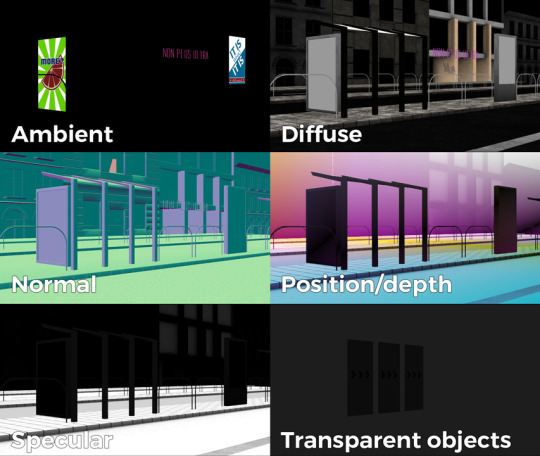

Deferred rendering

During the making of "Sosincs Vége" in 2016, IR came up with a bunch of scenes that felt like they needed to have an arbitrary number of lightsources to be effecive; to this end I looked into whether I was able to add deferred rendering to the toolset. This turned out to be a bit fiddly (still is) but ultimately I was able to create a node type called the "G-buffer", which was really just a chunk of textures together, and use that as the basis for two separate nodes: one that renders the scenegraph into the buffer, and another that uses the buffer contents to light the final image.

The contents of a G-buffer; there's also additional information in the alpha channels.

Normally, most deferred renderers go with the tile-based approach, where they divide the screen into 16x16 or 32x32 tiles and run the lights only on the tiles they need to run them on. I decided to go with a different approach, inspired by the spotlight rendering in Grand Theft Auto V: Because I was mostly using point- and spot-lights, I was able to control the "extent" of the lights and had a pretty good idea whether each pixel was lit or not based on its position relative to the light source. By this logic, e.g. for pointlights if I rendered a sphere into the light position, with the radius of what I considered to be the farthest extent of the light, the rendered sphere would cover all the pixels on screen covered by that light. This means if I ran a shader on each of those pixels, and used the contents of the G-buffer as input, I would be able to calculate independent lighting on each pixel for each light, since lights are additive anyway. The method needed some trickery (near plane clipping, sphere mesh resolution, camera being near the sphere edge or inside the sphere), but with some magic numbers and some careful technical artistry, none of this was a problem.

The downside of this method was that the 8-bit channel resolution of a normal render target was no longer enough, but this turned out to be a good thing: By using floating point render targets, I was able to adapt to a high-dynamic range, linear-space workflow that ultimately made the lighting much easier to control, with no noticable loss in speed. Notably, however, I skipped a few demos until I was able to add the shadow routines I had to the deferred pipeline - this was mostly just a question of data management inside the graph, and the current solution is still something I'm not very happy with, but for the time being I think it worked nicely; starting with "Elégtelen" I began using variance shadowmaps to get an extra softness to shadows when I need it, and I was able to repurpose that in the deferred renderer as well.

The art pipeline

After doing "The Void Stared Into Me Waiting To Be Paid Its Dues" I've began to re-examine my technical artist approach; it was pretty clear that while I knew how the theoreticals of a specular/glossiness-based rendering engine worked, I wasn't necessarily ready to be able to utilize the technology as an artist. Fortunately for me, times changed and I started working at a more advanced games studio where I was able to quietly pay closer attention to what the tenured, veteran artists were doing for work, what tools they use, how they approach things, and this introduced me to Substance Painter.

I've met Sebastien Deguy, the CEO of Allegorithmic, the company who make Painter, way back both at the FMX film festival and then in 2008 at NVScene, where we talked a bit about procedural textures, since they were working on a similar toolset at the time; at the time I obviously wasn't competent enough to deal with these kind of tools, but when earlier this year I watched a fairly thorough tutorial / walkthrough about Painter, I realized maybe my approach of trying to hand-paint textures was outdated: textures only ever fit correctly to a scene if you can make sure you can hide things like your UV seams, or your UV scaling fits the model - things that don't become apparent until you've saved the texture and it's on the mesh.

Painter, with its non-linear approach, goes ahead of all that and lets you texture meshes procedurally in triplanar space - that way, if you unwrapped your UVs correctly, your textures never really stretch or look off, especially because you can edit them in the tool already. Another upside is that you can tailor Painter to your own workflow - I was fairly quickly able to set up a preset to my engine that was able to produce diffuse, specular, normal and emissive maps with a click of a button (sometimes with AO baked in, if I wanted it!), and even though Painter uses an image-based lighting approach and doesn't allow you to adjust the material settings per-textureset (or I haven't yet found it where), the image in Painter was usually a fairly close representation to what I saw in-engine. Suddenly, texturing became fun again.

An early draft of the bus stop scene in Substance Painter.

Depth of field

DOF is one of those effects that is nowadays incredibly prevalent in modern rendering, and yet it's also something that's massively overused, simply because people who use it use it because it "looks cool" and not because they saw it in action or because they want to communicate something with it. Still, for a demo this style, I figured I should revamp my original approach.

The original DOF I wrote for Signal Lost worked decently well for most cases, but continued to produce artifacts in the near field; inspired by both the aforementioned GTAV writeup as well as Metal Gear Solid V, I decided to rewrite my DOF ground up, and split the rendering between the near and far planes of DOF; blur the far field with a smart mask that keeps the details behind the focal plane, blur the near plane "as is", and then simply alphablend both layers on top of the original image. This gave me a flexible enough effect that it even coaxed me to do a much-dreaded focal plane shift in the headphones scene, simply because it looked so nice I couldn't resist.

The near- and far-fields of the depth of field effect.

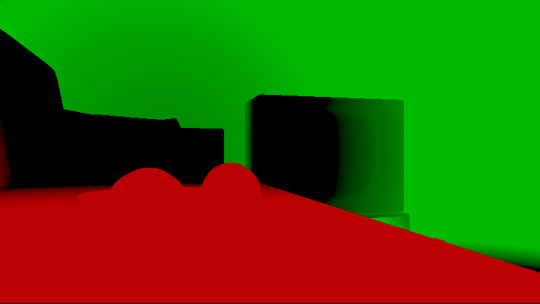

Screen-space reflections

Over the summer we did a fairly haphazard Adjective demo again called "Volna", and when IR delivered the visuals for it, it was very heavy on raytraced reflections he pulled out of (I think) 3ds max. Naturally, I had to put an axe to it very quickly, but I also started thinking if we can approximate "scene-wide" reflections in a fairly easy manner. BoyC discovered screen-space reflections a few years ago as a fairly cheap way to prettify scenes, and I figured with the engine being deferred (i.e. all data being at hand), it shouldn't be hard to add - and it wasn't, although for Volna, I considerably misconfigured the effect which resulted in massive framerate loss.

The idea behind SSR is that a lot of the time, reflections in demos or video games are reflecting something that's already on screen and quite visible, so instead of the usual methods (like rendering twice for planar reflections or using a cubemap), we could just take the normal at every pixel, and raymarch our way to the rendered image, and have a rough approximation as to what would reflect there.

The logic is, in essence to use the surface normal and camera position to calculate a reflection vector and then start a raymarch from that point and walk until you decide you've found something that may be reflecting on the object; this decision is mostly depth based, and can be often incorrect, but you can mitigate it by fading off the color depending on a number of factors like whether you are close to the edge of the image or whether the point is way too far from the reflecting surface. This is often still incorrect and glitchy, but since a lot of the time reflections are just "candy", a grainy enough normalmap will hide most of your mistakes quite well.

Screen-space reflections on and off - I opted for mostly just a subtle use, because I felt otherwise it would've been distracting.

One important thing that Smash pointed out to me while I was working on this and was having problems is that you should treat SSR not as a post-effect, but as lighting, and as such render it before the anti-aliasing pass; this will make sure that the reflections themselves get antialiased as well, and don't "pop off" the object.

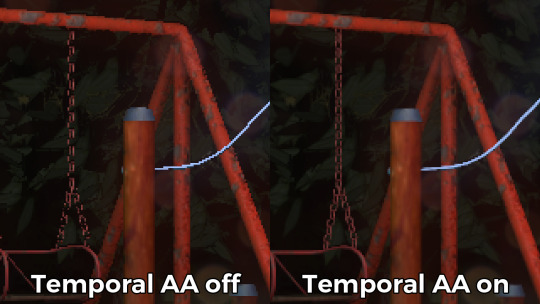

Temporal antialiasing

Over the last 5 years I've been bearing the brunt of complaints that the aliasing in my demos is unbearable - I personally rarely ever minded the jaggy edges, since I got used to them, but I decided since it's a demo where every pixel counts, I'll look into solutions to mitigate this. In some previous work, I tried using FXAA, but it never quite gave me the results I wanted, so remembering a conversation I had with Abductee at one Revision, I decided to read up a bit on temporal antialiasing.

The most useful resource I found was Bart Wroński's post about their use of TAA/TSSAA (I'm still not sure what the difference is) in one of the Assassin's Creed games. At its most basic, the idea behind temporal antialiasing is that instead of scaling up your resolution to, say, twice or four times, you take those sub-pixels, and accumulate them over time: the way to do this would be shake the camera slightly each frame - not too much, less than a quarter-pixel is enough just to have the edges alias slightly differently each frame - and then average these frames together over time. This essentially gives you a supersampled image (since every frame is slightly different when it comes to the jagged edges) but with little to no rendering cost. I've opted to use 5 frames, with the jitter being in a quincunx pattern, with a random quarter-pixel shake added to each frame - this resulted in most edges being beautifully smoothed out, and I had to admit the reasonably little time investment was worth the hassle.

Anti-aliasing on and off.

The problem of course, is that this works fine for images that don't move all that much between frames (not a huge problem in our case since the demo was very stationary), but anything that moves significantly will leave a big motion trail behind it. The way to mitigate would be to do a reprojection and distort your sampling of the previous frame based on the motion vectors of the current one, but I had no capacity or need for this and decided to just not do it for now: the only scene that had any significant motion was the cat, and I simply turned off AA on that, although in hindsight I could've reverted back to FXAA in that particular scenario, I just simply forgot. [Update, January 2019: This has been bugging me so I fixed this in the latest version of the ZIP.]

There were a few other issues: for one, even motion vectors won't be able to notice e.g. an animated texture, and both the TV static and the rain outside the room were such cases. For the TV, the solution was simply to add an additional channel to the GBuffer which I decided to use as a "mask" where the TAA/TSSAA wouldn't be applied - this made the TV texture wiggle but since it was noisy anyway, it was impossible to notice. The rain was considerably harder to deal with and because of the prominent neon signs behind it, the wiggle was very noticable, so instead what I ended up doing is simply render the rain into a separate 2D matte texture but masked by the scene's depth buffer, do the temporal accumulation without it (i.e. have the antialiased scene without rain), and then composite the matte texture into the rendered image; this resulted in a slight aliasing around the edge of the windows, but since the rain was falling fast enough, again, it was easy to get away with it.

The node graph for hacking the rainfall to work with the AA code.

Transparency

Any render coder will tell you that transparency will continue to throw a wrench into any rendering pipeline, simply because it's something that has to respect depth for some things, but not for others, and the distinction where it should or shouldn't is completely arbitrary, especially when depth-effects like the above mentioned screen-space reflections or depth of field are involved.

I decided to, for the time being, sidestep the issue, and simply render the transparent objects as a last forward-rendering pass using a single light into a separate pass (like I did with the rain above) honoring the depth buffer, and then composite them into the frame. It wasn't a perfect solution, but most of the time transparent surfaces rarely pick up lighting anyway, so it worked for me.

Color-grading and image mastering

I was dreading this phase because this is where it started to cross over from programming to artistry; as a first step, I added a gamma ramp to the image to convert it from linear to sRGB. Over the years I've been experimenting with a lot of tonemap filters, but in this particular case a simple 2.2 ramp got me the result that felt had the most material to work with going into color grading.

I've been watching Zoom work with Conspiracy intros for a good 15 years now, and it wasn't really until I had to build the VR version of "Offscreen Colonies" when I realized what he really does to get his richer colors: most of his scenes are simply grayscale with a bit of lighting, and he blends a linear gradient over them to manually add colour to certain parts of the image. Out of curiousity I tried this method (partly out of desperation, I admit), and suddenly most of my scenes began coming vibrantly to life. Moving this method from a bitmap editor to in-engine was trivial and luckily enough my old friend Blackpawn has a collection of well known Photoshop/Krita/etc. blend mode algorithms that I was able to lift.

Once the image was coloured, I stayed in the bitmap editor and applied some basic colour curve / level adjustment to bring out some colours that I felt got lost when using the gradient; I then applied the same filters on a laid out RGB cube, and loaded that cube back into the engine as a colour look-up table for a final colour grade.

Color grading.

Optimizations

There were two points in the process where I started to notice problems with performance: After the first few scenes added, the demo ran relatively fine in 720p, but began to dramatically lose speed if I switched to 1080p. A quick look with GPU-Z and the tool's internal render target manager showed that the hefty use of GPU memory for render targets quickly exhausted 3GB of VRAM. I wasn't surprised by this: my initial design for render target management for the node graph was always meant to be temporary, as I was using the nodes as "value types" and allocating a target for each. To mitigate this I spent an afternoon designing what I could best describe as a dependency graph, to make sure that render targets that are not needed for a particular render are reused as the render goes on - this got my render target use down to about 6-7 targets in total for about a hundred nodes.

The final node graph for the demo: 355 nodes.

Later, as I was adding more scenes (and as such, more nodes), I realized the more nodes I kept adding, the more sluggish the demo (and the tool) got, regardless of performance - clearly, I had a CPU bottleneck somewhere. As it turned out after a bit of profiling, I added some code to save on CPU traversal time a few demos ago, but after a certain size this code itself became a problem, so I had to re-think a bit, and I ended up simply going for the "dirty node" technique where nodes that explicitly want to do something mark their succeeding nodes to render, and thus entire branches of nodes never get evaluated when they don't need to. This got me back up to the coveted 60 frames per second again.

A final optimization I genuinely wanted to do is crunch the demo down to what I felt to be a decent size, around 60-ish megabytes: The competition limit was raised to 128MB, but I felt my demo wasn't really worth that much size, and I felt I had a chance of going down to 60 without losing much of the quality - this was mostly achieved by just converting most diffuse/specular (and even some normal) textures down to fairly high quality JPG, which was still mostly smaller than PNG; aside from a few converter setting mishaps and a few cases where the conversion revealed some ugly artifacts, I was fairly happy with the final look, and I was under the 60MB limit I wanted to be.

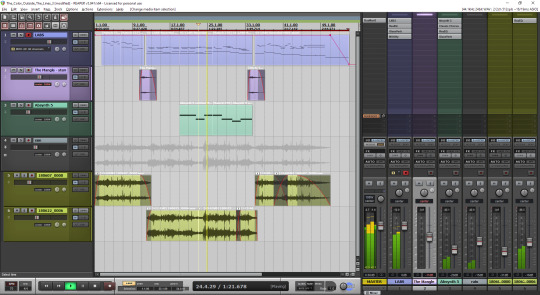

Music

While this post mostly deals with graphics, I'd be remiss to ignore the audio which I also spent a considerable time on: because of the sparse nature of the track, I didn't need to put a lot of effort in to engineering the track, but I also needed to make sure the notes sounded natural enough - I myself don't actually play keyboards and my MIDI keyboard (a Commodore MK-10) is not pressure sensitive, so a lot of the phrases were recorded in parts, and I manually went through each note to humanize the velocities to how I played them. I didn't process the piano much; I lowered the highs a bit, and because the free instrument I was using, Spitfire Audio's Soft Piano, didn't have a lot of release, I also added a considerable amount of reverb to make it blend more into the background.

For ambient sounds, I used both Native Instruments' Absynth, as well as Sound Guru's Mangle, the latter of which I used to essentially take a chunk out of a piano note and just add infinite sustain to it. For the background rain sound, I recorded some sounds myself over the summer (usually at 2AM) using a Tascam DR-40 handheld recorder; on one occasion I stood under the plastic awning in front of our front door to record a more percussive sound of the rain knocking on something, which I then lowpass filtered to make it sound like it's rain on a window - this eventually became the background sound for the mid-section.

I've done almost no mixing and mastering on the song; aside from shaping the piano and synth tones a bit to make them sound the way I wanted, the raw sparse timbres to me felt very pleasing and I didn't feel the sounds were fighting each other in space, so I've done very little EQing; as for mastering, I've used a single, very conservatively configured instance of BuzMaxi just to catch and soft-limit any of the peaks coming from the piano dynamics and to raise the track volume to where all sounds were clearly audible.

The final arrangement of the music in Reaper.

Minor tricks

Most of the demo was done fairly easily within the constraints of the engine, but there were a few fun things that I decided to hack around manually, mostly for effect.

The headlights in the opening scene are tiny 2D quads that I copied out of a photo and animated to give some motion to the scene.

The clouds in the final scene use a normal map and a hand-painted gradient; the whole scene interpolates between two lighting conditions, and two different color grading chains.

The rain layer - obviously - is just a multilayered 2D effect using a texture I created from a particle field in Fusion.

Stuff that didn't make it or went wrong

I've had a few things I had in mind and ended up having to bin along the way:

I still want to have a version of the temporal AA that properly deghosts animated objects; the robot vacuum cleaner moved slow enough to get away with it, but still.

The cat is obviously not furry; I have already rigged and animated the model by the time I realized that some fur cards would've helped greatly with the aliasing of the model, but by that time I didn't feel like redoing the whole thing all over again, and I was running out of time.

There's considerable amount of detail in the room scene that's not shown because of the lighting - I set the room up first, and then opted for a more dramatic lighting that ultimately hid a lot of the detail that I never bothered to arrange to more visible places.

In the first shot of the room scene, the back wall of the TV has a massive black spot on it that I have no idea where it's coming from, but I got away with it.

I spent an evening debugging why the demo was crashing on NVIDIA when I realized I was running out of the 2GB memory space; toggling the Large Address Aware flag always felt a bit like defeat, but it was easier than compiling a 64-bit version.

A really stupid problem materialized after the party, where both CPDT and Zoom reported that the demo didn't work on their ultrawide (21:9) monitors: this was simply due to the lack of pillarbox support because I genuinely didn't think that would ever be needed (at the time I started the engine I don't think I even had a 1080p monitor) - this was a quick fix and the currently distributed ZIP now features that fix.

Acknowledgements

While I've did the demo entirely myself, I've received some help from other places: The music was heavily inspired by the work of Exist Strategy, while the visuals were inspired by the work of Yaspes, IvoryBoy and the Europolis scenes in Dreamfall Chapters. While I did most of all graphics myself, one of the few things I got from online was a "lens dirt pack" from inScape Digital, and I think the dirt texture in the flowerpot I ended up just googling, because it was late and I didn't feel like going out for more photos. I'd also need to give credit to my audio director at work, Prof. Stephen Baysted, who pointed me at the piano plugin I ended up using for the music, and to Reid who provided me with ample amounts of cat-looking-out-of-window videos for animation reference.

Epilogue

Overall I'm quite happy with how everything worked out (final results and reaction notwithstanding), and I'm also quite happy that I managed to produce myself a toolset that "just works". (For the most part.)

One of the things that I've been talking to people about it is postmortem is how people were not expecting the mix of this particular style, which is generally represented in demos with 2D drawings or still images or photos slowly crossfading, instead using elaborate 3D and rendering. To me, it just felt like one of those interesting juxtapositions where the technology behind a demo can be super complex, but at the same time the demo isn't particularly showy or flashy; where the technology behind the demo does a ton of work but forcefully stays in the background to allow you to immerse in the demo itself. To me that felt very satisfactory both as someone trying to make a work of art that has something to say, but also as an engineer who tries to learn and do interesting things with all the technology around us.

What's next, I'm not sure yet.

3 notes

·

View notes