#Gartner Hype Cycle

Text

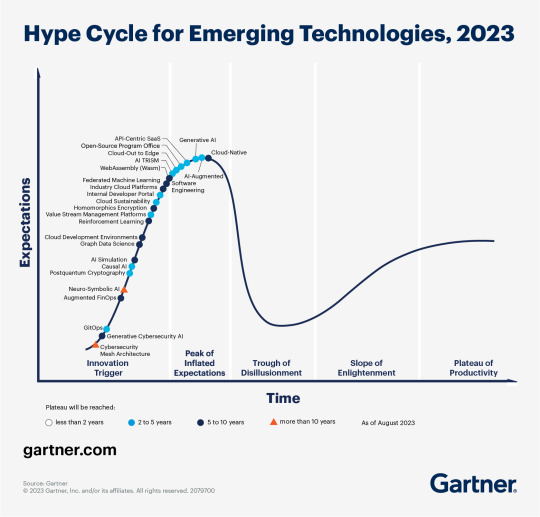

The AI hype bubble is the new crypto hype bubble

Back in 2017, Long Island Iced Tea — known for its undistinguished, barely drinkable sugar-water — changed its name to “Long Blockchain Corp.” Its shares surged to a peak of 400% over their pre-announcement price. The company announced no specific integrations with any kind of blockchain, nor has it made any such integrations since.

If you’d like an essay-formatted version of this post to read or share, here’s a link to it on pluralistic.net, my surveillance-free, ad-free, tracker-free blog:

https://pluralistic.net/2023/03/09/autocomplete-worshippers/#the-real-ai-was-the-corporations-that-we-fought-along-the-way

LBCC was subsequently delisted from NASDAQ after settling with the SEC over fraudulent investor statements. Today, the company trades over the counter and its market cap is $36m, down from $138m.

https://cointelegraph.com/news/textbook-case-of-crypto-hype-how-iced-tea-company-went-blockchain-and-failed-despite-a-289-percent-stock-rise

The most remarkable thing about this incredibly stupid story is that LBCC wasn’t the peak of the blockchain bubble — rather, it was the start of blockchain’s final pump-and-dump. By the standards of 2022’s blockchain grifters, LBCC was small potatoes, a mere $138m sugar-water grift.

They didn’t have any NFTs, no wash trades, no ICO. They didn’t have a Super Bowl ad. They didn’t steal billions from mom-and-pop investors while proclaiming themselves to be “Effective Altruists.” They didn’t channel hundreds of millions to election campaigns through straw donations and other forms of campaign finance fraud. They didn’t even open a crypto-themed hamburger restaurant where you couldn’t buy hamburgers with crypto:

https://robbreport.com/food-drink/dining/bored-hungry-restaurant-no-cryptocurrency-1234694556/

They were amateurs. Their attempt to “make fetch happen” only succeeded for a brief instant. By contrast, the superpredators of the crypto bubble were able to make fetch happen over an improbably long timescale, deploying the most powerful reality distortion fields since Pets.com.

Anything that can’t go on forever will eventually stop. We’re told that trillions of dollars’ worth of crypto has been wiped out over the past year, but these losses are nowhere to be seen in the real economy — because the “wealth” that was wiped out by the crypto bubble’s bursting never existed in the first place.

Like any Ponzi scheme, crypto was a way to separate normies from their savings through the pretense that they were “investing” in a vast enterprise — but the only real money (“fiat” in cryptospeak) in the system was the hardscrabble retirement savings of working people, which the bubble’s energetic inflaters swapped for illiquid, worthless shitcoins.

We’ve stopped believing in the illusory billions. Sam Bankman-Fried is under house arrest. But the people who gave him money — and the nimbler Ponzi artists who evaded arrest — are looking for new scams to separate the marks from their money.

Take Morgan Stanley, who spent 2021 and 2022 hyping cryptocurrency as a massive growth opportunity:

https://cointelegraph.com/news/morgan-stanley-launches-cryptocurrency-research-team

Today, Morgan Stanley wants you to know that AI is a $6 trillion opportunity.

They’re not alone. The CEOs of Endeavor, Buzzfeed, Microsoft, Spotify, YouTube, Snap, Sports Illustrated, and CAA are all out there, pumping up the AI bubble with every hour that god sends, declaring that the future is AI.

https://www.hollywoodreporter.com/business/business-news/wall-street-ai-stock-price-1235343279/

Google and Bing are locked in an arms-race to see whose search engine can attain the speediest, most profound enshittification via chatbot, replacing links to web-pages with florid paragraphs composed by fully automated, supremely confident liars:

https://pluralistic.net/2023/02/16/tweedledumber/#easily-spooked

Blockchain was a solution in search of a problem. So is AI. Yes, Buzzfeed will be able to reduce its wage-bill by automating its personality quiz vertical, and Spotify’s “AI DJ” will produce slightly less terrible playlists (at least, to the extent that Spotify doesn’t put its thumb on the scales by inserting tracks into the playlists whose only fitness factor is that someone paid to boost them).

But even if you add all of this up, double it, square it, and add a billion-dollar confidence interval, it still doesn’t add up to what Bank of America analysts called “a defining moment — like the internet in the ’90s.” For one thing, the most exciting part of the “internet in the ’90s” was that it had incredibly low barriers to entry and wasn’t dominated by large companies — indeed, it had them running scared.

The AI bubble, by contrast, is being inflated by massive incumbents, whose excitement boils down to “This will let the biggest companies get much, much bigger and the rest of you can go fuck yourselves.” Some revolution.

AI has all the hallmarks of a classic pump-and-dump, starting with terminology. AI isn’t “artificial” and it’s not “intelligent.” “Machine learning” doesn’t learn. On this week’s Trashfuture podcast, they made an excellent (and profane and hilarious) case that ChatGPT is best understood as a sophisticated form of autocomplete — not our new robot overlord.

https://open.spotify.com/episode/4NHKMZZNKi0w9mOhPYIL4T

We all know that autocomplete is a decidedly mixed blessing. Like all statistical inference tools, autocomplete is profoundly conservative — it wants you to do the same thing tomorrow as you did yesterday (that’s why “sophisticated” ad retargeting shows you ads for shoes in response to your search for shoes). If the word you type after “hey” is usually “hon” then the next time you type “hey,” autocomplete will be ready to fill in your typical following word — even if this time you want to type “hey stop texting me you freak”:

https://blog.lareviewofbooks.org/provocations/neophobic-conservative-ai-overlords-want-everything-stay/

And when autocomplete encounters a new input — when you try to type something you’ve never typed before — it tries to get you to finish your sentence with the statistically median thing that everyone would type next, on average. Usually that produces something utterly bland, but sometimes the results can be hilarious. Back in 2018, I started to text our babysitter with “hey are you free to sit” only to have Android finish the sentence with “on my face” (not something I’d ever typed!):

https://mashable.com/article/android-predictive-text-sit-on-my-face
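To make the mechanism concrete, here is a minimal sketch of the kind of next-word prediction described above. It is a toy, not how Android's keyboard or ChatGPT actually works: the function names (`train`, `suggest`), the sample messages, and the two-tier fallback from a personal history to an "everyone" history are all assumptions made for illustration, and it picks the single most frequent follower rather than any true statistical median.

```python
from collections import Counter, defaultdict


def train(messages):
    """Count which word follows each word across a list of messages."""
    following = defaultdict(Counter)
    for message in messages:
        words = message.lower().split()
        for current, nxt in zip(words, words[1:]):
            following[current][nxt] += 1
    return following


def suggest(word, personal, everyone):
    """Suggest the most frequent next word, preferring the user's own history."""
    word = word.lower()
    for model in (personal, everyone):
        counts = model.get(word)  # .get avoids creating empty entries
        if counts:
            return counts.most_common(1)[0][0]
    return None  # no data at all for this word


# Hypothetical histories: what this user typed, and what everyone typed.
personal = train(["hey hon", "hey hon", "hey stop"])
everyone = train(["free to talk", "free to talk", "free to sit"])

print(suggest("hey", personal, everyone))  # the user's habitual follower
print(suggest("to", personal, everyone))   # falls back to the crowd's habit
```

The conservatism is visible in the data structure itself: the suggestion for “hey” can only ever be something that already followed “hey” before, and a word the user has never typed gets whatever the crowd typed most often.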

Modern autocomplete can produce long passages of text in response to prompts, but it is every bit as unreliable as 2018 Android SMS autocomplete, as Alexander Hanff discovered when ChatGPT informed him that he was dead, even generating a plausible URL for a link to a nonexistent obit in The Guardian:

https://www.theregister.com/2023/03/02/chatgpt_considered_harmful/

Of course, the carnival barkers of the AI pump-and-dump insist that this is all a feature, not a bug. If autocomplete says stupid, wrong things with total confidence, that’s because “AI” is becoming more human, because humans also say stupid, wrong things with total confidence.

Exhibit A is the billionaire AI grifter Sam Altman, CEO of OpenAI — a company whose products are not open, nor are they artificial, nor are they intelligent. Altman celebrated the release of ChatGPT by tweeting “i am a stochastic parrot, and so r u.”

https://twitter.com/sama/status/1599471830255177728

This was a dig at the “stochastic parrots” paper, a comprehensive, measured roundup of criticisms of AI that led Google to fire Timnit Gebru, a respected AI researcher, for having the audacity to point out the Emperor’s New Clothes:

https://www.technologyreview.com/2020/12/04/1013294/google-ai-ethics-research-paper-forced-out-timnit-gebru/

Gebru’s co-author on the Parrots paper was Emily M Bender, a computational linguistics specialist at UW, who is one of the best-informed and most damning critics of AI hype. You can get a good sense of her position from Elizabeth Weil’s New York Magazine profile:

https://nymag.com/intelligencer/article/ai-artificial-intelligence-chatbots-emily-m-bender.html

Bender has made many important scholarly contributions to her field, but she is also famous for her rules of thumb, which caution her fellow scientists not to get high on their own supply:

Please do not conflate word form and meaning

Mind your own credulity

As Bender says, we’ve made “machines that can mindlessly generate text, but we haven’t learned how to stop imagining the mind behind it.” One potential tonic against this fallacy is to follow an Italian MP’s suggestion and replace “AI” with “SALAMI” (“Systematic Approaches to Learning Algorithms and Machine Inferences”). It’s a lot easier to keep a clear head when someone asks you, “Is this SALAMI intelligent? Can this SALAMI write a novel? Does this SALAMI deserve human rights?”

Bender’s most famous contribution is the “stochastic parrot,” a construct that “just probabilistically spits out words.” AI bros like Altman love the stochastic parrot, and are hellbent on reducing human beings to stochastic parrots, which will allow them to declare that their chatbots have feature-parity with human beings.

At the same time, Altman and Co are strangely afraid of their creations. It’s possible that this is just a shuck: “I have made something so powerful that it could destroy humanity! Luckily, I am a wise steward of this thing, so it’s fine. But boy, it sure is powerful!”

They’ve been playing this game for a long time. People like Elon Musk (an investor in OpenAI, who is hoping to convince the EU Commission and FTC that he can fire all of Twitter’s human moderators and replace them with chatbots without violating EU law or the FTC’s consent decree) keep warning us that AI will destroy us unless we tame it.

There’s a lot of credulous repetition of these claims, and not just by AI’s boosters. AI critics are also prone to engaging in what Lee Vinsel calls criti-hype: criticizing something by repeating its boosters’ claims without interrogating them to see if they’re true:

https://sts-news.medium.com/youre-doing-it-wrong-notes-on-criticism-and-technology-hype-18b08b4307e5

There are better ways to respond to Elon Musk warning us that AIs will emulsify the planet and use human beings for food than to shout, “Look at how irresponsible this wizard is being! He made a Frankenstein’s Monster that will kill us all!” Like, we could point out that of all the things Elon Musk is profoundly wrong about, he is most wrong about the philosophical meaning of Wachowski movies:

https://www.theguardian.com/film/2020/may/18/lilly-wachowski-ivana-trump-elon-musk-twitter-red-pill-the-matrix-tweets

But even if we take the bros at their word when they proclaim themselves to be terrified of “existential risk” from AI, we can find better explanations by seeking out other phenomena that might be triggering their dread. As Charlie Stross points out, corporations are Slow AIs, autonomous artificial lifeforms that consistently do the wrong thing even when the people who nominally run them try to steer them in better directions:

https://media.ccc.de/v/34c3-9270-dude_you_broke_the_future

Imagine the existential horror of an ultra-rich manbaby who nominally leads a company, but can’t get it to follow: “everyone thinks I’m in charge, but I’m actually being driven by the Slow AI, serving as its sock puppet on some days, its golem on others.”

Ted Chiang nailed this back in 2017 (the same year as the Long Island Blockchain Company):

There’s a saying, popularized by Fredric Jameson, that it’s easier to imagine the end of the world than to imagine the end of capitalism. It’s no surprise that Silicon Valley capitalists don’t want to think about capitalism ending. What’s unexpected is that the way they envision the world ending is through a form of unchecked capitalism, disguised as a superintelligent AI. They have unconsciously created a devil in their own image, a boogeyman whose excesses are precisely their own.

https://www.buzzfeednews.com/article/tedchiang/the-real-danger-to-civilization-isnt-ai-its-runaway

Chiang is still writing some of the best critical work on “AI.” His February article in the New Yorker, “ChatGPT Is a Blurry JPEG of the Web,” was an instant classic:

[AI] hallucinations are compression artifacts, but — like the incorrect labels generated by the Xerox photocopier — they are plausible enough that identifying them requires comparing them against the originals, which in this case means either the Web or our own knowledge of the world.

https://www.newyorker.com/tech/annals-of-technology/chatgpt-is-a-blurry-jpeg-of-the-web

“AI” is practically purpose-built for inflating another hype-bubble, excelling as it does at producing party-tricks — plausible essays, weird images, voice impersonations. But as Princeton’s Matthew Salganik writes, there’s a world of difference between “cool” and “tool”:

https://freedom-to-tinker.com/2023/03/08/can-chatgpt-and-its-successors-go-from-cool-to-tool/

Nature can claim “conversational AI is a game-changer for science” but “there is a huge gap between writing funny instructions for removing food from home electronics and doing scientific research.” Salganik tried to get ChatGPT to help him with the most banal of scholarly tasks — aiding him in peer reviewing a colleague’s paper. The result? “ChatGPT didn’t help me do peer review at all; not one little bit.”

The criti-hype isn’t limited to ChatGPT, of course — there’s plenty of (justifiable) concern about image and voice generators and their impact on creative labor markets, but that concern is often expressed in ways that amplify the self-serving claims of the companies hoping to inflate the hype machine.

One of the best critical responses to the question of image- and voice-generators comes from Kirby Ferguson, whose final Everything Is a Remix video is a superb, visually stunning, brilliantly argued critique of these systems:

https://www.youtube.com/watch?v=rswxcDyotXA

One area where Ferguson shines is in thinking through the copyright question — is there any right to decide who can study the art you make? Except in some edge cases, these systems don’t store copies of the images they analyze, nor do they reproduce them:

https://pluralistic.net/2023/02/09/ai-monkeys-paw/#bullied-schoolkids

For creators, the important material question raised by these systems is economic, not creative: will our bosses use them to erode our wages? That is a very important question, and as far as our bosses are concerned, the answer is a resounding yes.

Markets value automation primarily because automation allows capitalists to pay workers less. The textile factory owners who purchased automatic looms weren’t interested in giving their workers raises and shortening working days. They wanted to fire their skilled workers and replace them with small children kidnapped out of orphanages and indentured for a decade, starved and beaten and forced to work, even after they were mangled by the machines. Fun fact: Oliver Twist was based on the bestselling memoir of Robert Blincoe, a child who survived his decade of forced labor:

https://www.gutenberg.org/files/59127/59127-h/59127-h.htm

Today, voice actors sitting down to record for games companies are forced to begin each session with “My name is ______ and I hereby grant irrevocable permission to train an AI with my voice and use it any way you see fit.”

https://www.vice.com/en/article/5d37za/voice-actors-sign-away-rights-to-artificial-intelligence

Let’s be clear here: there is — at present — no firmly established copyright over voiceprints. The “right” that voice actors are signing away as a non-negotiable condition of doing their jobs for giant, powerful monopolists doesn’t even exist. When a corporation makes a worker surrender this right, they are betting that this right will be created later in the name of “artists’ rights” — and that they will then be able to harvest this right and use it to fire the artists who fought so hard for it.

There are other approaches to this. We could support the US Copyright Office’s position that machine-generated works are not works of human creative authorship and are thus not eligible for copyright — so if corporations wanted to control their products, they’d have to hire humans to make them:

https://www.theverge.com/2022/2/21/22944335/us-copyright-office-reject-ai-generated-art-recent-entrance-to-paradise

Or we could create collective rights that belong to all artists and can’t be signed away to a corporation. That’s how the right to record other musicians’ songs works — and it’s why Taylor Swift was able to re-record the masters that were sold out from under her by evil private-equity bros:

https://doctorow.medium.com/united-we-stand-61e16ec707e2

Whatever we do as creative workers and as humans entitled to a decent life, we can’t afford to drink the Blockchain Iced Tea. That means that we have to be technically competent, to understand how the stochastic parrot works, and to make sure our criticism doesn’t just repeat the marketing copy of the latest pump-and-dump.

Today (Mar 9), you can catch me in person in Austin at the UT School of Design and Creative Technologies, and remotely at U Manitoba’s Ethics of Emerging Tech Lecture.

Tomorrow (Mar 10), Rebecca Giblin and I kick off the SXSW reading series.

Image: Cryteria (modified) https://commons.wikimedia.org/wiki/File:HAL9000.svg

CC BY 3.0 https://creativecommons.org/licenses/by/3.0/deed.en

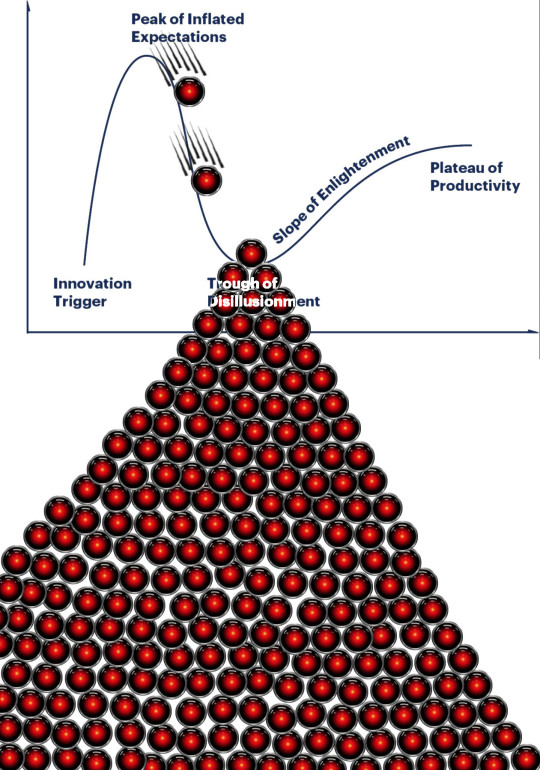

[Image ID: A graph depicting the Gartner hype cycle. A pair of HAL 9000's glowing red eyes are chasing each other down the slope from the Peak of Inflated Expectations to join another one that is at rest in the Trough of Disillusionment. It, in turn, sits atop a vast cairn of HAL 9000 eyes that are piled in a rough pyramid that extends below the graph to a distance of several times its height.]

#pluralistic#ai#ml#machine learning#artificial intelligence#chatbot#chatgpt#cryptocurrency#gartner hype cycle#hype cycle#trough of disillusionment#crypto#bubbles#bubblenomics#criti-hype#lee vinsel#slow ai#timnit gebru#emily bender#paperclip maximizers#enshittification#immortal colony organisms#blurry jpegs#charlie stross#ted chiang

2K notes

Text

Kissflow was named twice in a row as a Sample Vendor for Citizen Developers technology in the Gartner® Hype Cycle™, 2024 Reports

PHILIPPINES – Kissflow, an easy-to-use, low-code platform, has been identified as a Sample Vendor for Citizen Developers technology in three Gartner® Hype Cycle™, 2024 reports: Hype Cycle for the Future of Work, 2024; Hype Cycle for Workforce Transformation, 2024; and Hype Cycle for Digital Workplace Applications, 2024. Gartner Hype Cycles provide a graphic representation of the maturity and…

0 notes

Text

ok, it was easier than I expected to find it because I didn't remember it's actually an official thing: it's called a Gartner Hype Cycle.

right now we are in the "negative press begins" stage (all the Google AI searches).

btw, this is not the first time AI has been through this cycle, it happened a few years back with the chatbots. suddenly they were all the hype, every digital agency was trying to sell one to their clients because "wow! look at this AI beating world chess champions at their game!".

At that time too the algorithms were advertised as "they can learn" but truth was they were not, not really. also, they're not learning right now either, they're just mapping recurring actions and what responses you didn't correct / felt the need to ask for more clarification / clicked, putting those ones as the first options to give when a pattern appears.

if there are more advanced (and actual) AIs out there, they're still in labs and not shared with the public.

Latest tech pet peeve is the use of the term "AI" to refer to basically anything that does any amount of automation or uses computers in any way

#gartner hype cycle#artificial intelligence#it come without saying that artificial intelligence is not that intelligent#it's still just a collection of many algorithms that work together

12K notes

Text

Gartner's Hype Cycle Versus Seth Godin's Dip

On which path is your current ProcureTech implementation heading?

QUESTION 1: What is the relationship between Gartner’s Hype Cycle and Godin’s The Dip?

QUESTION 2: How does understanding this relationship impact ProcureTech’s implementation and success?

Here is the video for Gartner’s Hype Cycle. When I watched it, the first thing that came to mind was Seth Godin’s The Dip.

The Hype Cycle Image

Here is the video for Seth Godin’s The Dip. The Dip…

0 notes

Text

AI consumer journeys and the need for human friction...

Eradicating pain points does provide a competitive edge. AI is driving this change and making consumer experiences seamless by removing human interactions altogether. With the proliferation of new AI-based tools, I wonder if we can predict how this will shape the future of consumer technology. This reminds me of the Gartner Hype Cycle, and I wonder if we are now entering the “Trough of Disillusionment”, where we can weed out the good use cases and consumer experiences from the bad. Once the dust settles, however, I’m sure AI will make a lot of our daily interactions with products more efficient.

But what does this mean for society? As social beings, are we ready to give up the small interactions that make otherwise mundane tasks and chores more special? I wanted to honor the memory of a shopkeeper back home in New Delhi, India. He unfortunately passed away during the COVID pandemic. Only when I went into the store and saw his picture up on the wall did it hit me. I knew nothing about this man behind the counter, but every interaction I had with him brought a sense of comfort and joy. He always greeted me with a smile when I came to buy stationery for the upcoming school year, and he always offered a small gift once the purchase was complete. It was an inefficient transaction, but something I really looked forward to.

That being said, we must embrace what the future holds for us, and my biggest takeaway from the AI revolution is that it is yet to unfold. Nascent technologies that are unproven at scale, like the Apple Vision Pro, offer only glimpses of how consumer tech will be shaped. While companies are quick to give up the ‘human’ element, I’m unsure if society is ready to accept, willingly or unwillingly, a future where we no longer interact with human beings. I believe there will be better hybrid solutions to the AI embrace, but if I am wrong, then Disney predicted it: the future looks like WALL-E.

3 notes

Text

Milkshake Duck

the idea:

milkshake duck has become a shorthand for someone who gains prominence, usually on the internet for meme content. then after increased public visibility and scrutiny, they put their foot in their mouth and/or do something problematic, eliciting a follow-up public backlash. this can be, but isn't necessarily, related to the gartner hype cycle and hype backlash.

12 notes

Text

It’s no surprise that AI has replaced Big Data as the enterprise technology industry’s favorite new buzzword. After all, there’s a reason it appears on Gartner’s Hype Cycle for Emerging Technologies. While progress was slow in the first few decades, AI’s pace of development has accelerated rapidly over the past decade. Some say AI will enhance humanity and perhaps even make us immortal; others, more pessimistic, believe AI will lead to conflict and may even automate society, resulting in job losses.

0 notes

Text

EdgeTI Recognized in the Gartner Hype Cycle for Revenue and Sales Technology, 2024

edgeTI Digital Twin Platform Brings Sales and Revenue Data Together Coherently

Arlington, Virginia–(Newsfile Corp. – December 17, 2024) – Edge Total Intelligence Inc. (TSXV: CTRL) (OTCQB: UNFYF) (FSE: Q5I) (“edgeTI”, “Company”), a leading provider of real-time digital twin software, announces the Company was…

0 notes

Text

This day in history

There are only five more days left in my Kickstarter for the audiobook of The Bezzle, the sequel to Red Team Blues, narrated by @wilwheaton! You can pre-order the audiobook and ebook, DRM free, as well as the hardcover, signed or unsigned. There's also bundles with Red Team Blues in ebook, audio or paperback.

#20yrsago NYT discovers the “Plam Pilot” phenomenon https://memex.craphound.com/2004/01/28/nyt-discovers-the-plam-pilot-phenomenon/

#15yrsago Irish ISP will disconnect Internet users after three unsubstantiated copyright claims https://memex.craphound.com/2009/01/28/irish-isp-will-disconnect-internet-users-after-three-unsubstantiated-copyright-claims/

#15yrsago Ryanair will fine passengers who board with too much carry-on https://gadling.com/2009/01/22/ryanair-to-ticket-passengers-who-try-to-cheat-the-baggage-system/

#15yrsago BBC promises to put 200,000 publicly owned oil paintings online by 2012 https://www.theguardian.com/media/2009/jan/28/bbc-digitalmedia

#10yrsago Gartner Hype Cycle on the Gartner Hype Cycle https://twitter.com/philgyford/status/427840025544650753

#10yrsago Makerspaces and libraries: two great tastes that taste great together https://medium.com/the-magazine/shifting-from-shelves-to-snowflakes-d2a360c7ac7b

#10yrsago Pope Francis on the Internet and communication https://www.hyperorg.com/blogger/2014/01/27/a-gift-from-god/

#10yrsago UK National Museum of Computing trustees publish damning letter about treatment by Bletchley Park trust https://web.archive.org/web/20140130143734/https://www.tnmoc.org/news/news-releases/deciphering-discontent-statement-tnmoc-trustees

#10yrsago What is exposed about you and your friends when you login with Facebook https://twitter.com/TheBakeryLDN/status/427531934294880256

#10yrsago 890 word Daily Mail immigrant panic story contains 13 vile lies https://web.archive.org/web/20140126081130/http://britishinfluence.org/13-reasons-taking-daily-mail-press-complaints-commission/

#5yrsago Bride attains virality by adding pockets to her dress and those of her bridesmaids https://metro.co.uk/2019/01/27/bride-added-pockets-wedding-dress-bridesmaids-dresses-8398183/

#5yrsago Grifter steals dead peoples’ houses in gentrifying Philadelphia by forging deed transfers, then flipping them https://www.inquirer.com/news/a/house-sales-fraud-theft-philadelphia-real-estate-dead-owners-william-johnson-20190124.html

#5yrsago Megathread of Facebook’s terrible, horrible, no-good eternity https://brucesterling.tumblr.com/post/182371861433/all-things-facebook

#5yrsago How Facebook tracks Android users, even those without Facebook accounts https://www.youtube.com/watch?v=y0vlD7r-kTc

#5yrsago Video and audio from my closing keynote at Friday’s Grand Re-Opening of the Public Domain https://archive.org/details/ClosingKeynoteForGrandReopeningOfThePublicDomainCoryDoctorowAtInternetArchive_201901

Berliners: Otherland has added a second date (Jan 28 - THIS SUNDAY!) for my book-talk after the first one sold out - book now!

Back the Kickstarter for the audiobook of The Bezzle here!

Image: Sam Valadi (modified) https://www.flickr.com/photos/132084522@N05/17086570218/

CC BY 2.0: https://creativecommons.org/licenses/by/2.0/

2 notes

Text

ThreatBook Honored as a Sample Vendor in Gartner® Hype Cycle™ for Security Operations

http://dlvr.it/TDCNyd

0 notes

Text

How Useful Is The Gartner Hype Cycle for Procurement and Sourcing Solutions

I have only one question left to ask: if Gartner was your barber, would you keep going back to them for the same results as above?

“The Gartner Hype Cycle for Procurement and Sourcing Solutions helps companies understand the maturity and potential of different procurement technologies over time. First introduced to the procurement space around the early 2000s, it categorizes technologies into different phases of the hype cycle: “Innovation Trigger,” “Peak of Inflated Expectations,” “Trough of Disillusionment,” “Slope of…

0 notes

Text

Kissflow identified as a Sample Vendor for No-Code Platforms technology in three Gartner® Hype Cycle™, 2024 Reports

PHILIPPINES – September 3, 2024 – Kissflow has been recognized as a Sample Vendor for no-code platforms technology in three Gartner® Hype Cycle™, 2024 Reports. Kissflow is an easy-to-use, low-code platform for custom application development tailored to business operations and listed in the following reports:

1. Hype Cycle for the Future of Work, 2024
2. Hype Cycle for Digital Workplace…

0 notes