#Business AI


Text

Copilot vs. ChatGPT vs. Gemini: Which One Is the Best AI Chatbot for 2025?

AI chatbots are transforming business productivity in 2025. In this blog by JRS Dynamics, we compare the top three AI tools—Microsoft Copilot, ChatGPT, and Google Gemini—based on features, use cases, and business benefits. Whether you're managing ERP, CRM, or daily tasks, find out which AI assistant is right for you.

🔗 Read full blog: https://jrsdynamics.com/copilot-vs-chatgpt-vs-gemini/

#AI#Chatbot#Microsoft Copilot#ChatGPT#Google Gemini#AI Assistant#Business AI#ERP#2025 AI Tools#CRM#aitools#ai generated

1 note


Text

ChatGPT User Statistics and Market Performance: February 2025 Update

Learn here: ChatGPT User Statistics, Number of Users Globally and Market Performance 2025 (Updates May 2025)

Top AI Statistics and Market Growth Insights (2025 Edition)

1. The Soaring Value of the Global AI Market (GrandViewResearch)

As of 2025, the global artificial intelligence (AI) market is valued at an impressive $600 billion, reflecting a monumental rise of $400 billion since…

#AI adoption#AI chatbot#AI growth#AI in business#AI statistics#business AI#ChatGPT#ChatGPT Plus#ChatGPT Pro#ChatGPT users#Generative AI#mobile apps#OpenAI#OpenAI revenue#Statistics#technology#User Demographics

0 notes

Text

SAP Business AI: Revolutionizing the Future of Business Operations

In today’s fast-paced business environment, staying competitive requires adopting the latest technologies. SAP Business AI is one such innovation that’s transforming how organizations operate. By integrating artificial intelligence (AI) into SAP’s enterprise resource planning (ERP) systems, businesses are unlocking new levels of efficiency, productivity, and insight.

In this blog, we will explore what SAP Business AI is, how it works, and why it’s an essential tool for businesses seeking to improve their operations and decision-making.

What is SAP Business AI?

SAP Business AI is an advanced suite of artificial intelligence tools built into SAP's Business Technology Platform (BTP). It combines machine learning, natural language processing, and predictive analytics with existing SAP solutions to automate tasks, analyze data, and enhance decision-making. Essentially, it’s designed to help businesses make smarter decisions by using the power of AI to process large volumes of data and deliver actionable insights in real time.

Unlike traditional AI systems, SAP Business AI integrates seamlessly with existing SAP products like SAP S/4HANA or SAP SuccessFactors, making it easier for businesses to adopt and leverage AI technology without overhauling their entire infrastructure.

How Does SAP Business AI Work?

SAP Business AI works by analyzing vast amounts of data from various business processes and applying advanced machine learning algorithms to generate insights, predictions, and recommendations. It does so through these key steps:

Data Integration: SAP Business AI integrates with your existing SAP systems, pulling in data from different sources such as customer interactions, sales reports, or inventory data.

Predictive Analytics: Using machine learning, the system identifies patterns and trends within the data, helping businesses forecast future events like customer demand or market conditions.

Automation: SAP Business AI automates routine tasks like invoicing, customer support, and inventory management, improving overall operational efficiency.

Real-Time Insights: The platform provides actionable insights in real time, helping businesses make timely decisions based on the most up-to-date data.
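To make the predictive analytics step concrete, here is a minimal sketch of a forecast built from historical data. It is not SAP's API or algorithm: the function name `moving_average_forecast`, the window size, and the sales figures are all assumptions made up for illustration, and a production system would use far richer models.

```python
def moving_average_forecast(history, window=3):
    """Forecast the next value as the mean of the last `window` observations.

    A deliberately simple stand-in for the pattern-finding step described
    above; it is not how any SAP product is implemented.
    """
    if window <= 0 or len(history) < window:
        raise ValueError("need at least `window` observations")
    recent = history[-window:]
    return sum(recent) / window


# Hypothetical monthly unit sales, invented for this example.
monthly_units = [120, 135, 150, 160, 170]

# Mean of the last three months: (150 + 160 + 170) / 3
forecast = moving_average_forecast(monthly_units)
print(f"Forecast for next month: {forecast:.1f} units")
```

The same shape applies to any of the data sources listed above: collect a history, fit something to it, and turn the result into a number a planner can act on.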

Benefits of SAP Business AI

There’s a reason why SAP Business AI is rapidly gaining traction among businesses worldwide. Here are some of the most compelling benefits:

1. Improved Decision-Making

AI-driven insights enable better decision-making by analyzing historical and real-time data. Whether it's predicting market trends, evaluating customer preferences, or managing resources, SAP Business AI provides recommendations that lead to smarter business choices.

2. Enhanced Efficiency

By automating repetitive tasks like data entry, invoicing, or stock management, businesses can free up employees to focus on more strategic tasks. This leads to greater efficiency and cost savings.

3. Cost Reduction

By streamlining operations and minimizing human error, businesses can lower their operational costs. SAP Business AI helps identify areas of inefficiency, enabling companies to optimize processes and reduce unnecessary expenses.

4. Personalized Customer Experience

With its ability to analyze customer data, SAP Business AI helps businesses deliver personalized experiences. This can include tailored marketing messages, personalized product recommendations, and a more engaging customer service experience.

5. Faster Response Times

Real-time data processing allows businesses to make quicker decisions. Whether it’s responding to supply chain disruptions or addressing customer concerns, businesses can act faster and more effectively.

Applications of SAP Business AI in Business Operations

The versatility of SAP Business AI makes it applicable across various industries. Here are a few examples of how businesses can use it:

1. Supply Chain Optimization

Managing a supply chain involves dealing with multiple variables such as suppliers, logistics, and production schedules. SAP Business AI uses predictive analytics to forecast potential delays, optimize inventory, and ensure that products reach customers on time.

2. Sales and Marketing

Sales teams can leverage SAP Business AI to identify high-potential leads, track customer behaviors, and optimize marketing campaigns. With data-backed insights, sales and marketing teams can better target their efforts and increase revenue.

3. Human Resources

For HR departments, SAP Business AI simplifies tasks like recruitment, employee engagement, and performance analysis. The platform can also predict employee turnover, helping HR teams take proactive measures to retain talent.

4. Financial Management

In finance, SAP Business AI helps streamline tasks such as invoicing, budget forecasting, and expense management. It also provides real-time financial insights that support better decision-making and help businesses stay on top of cash flow.

Challenges of Adopting SAP Business AI

While SAP Business AI offers several benefits, there are a few challenges businesses might face:

1. Complexity of Implementation

Integrating AI with existing systems can be complex, especially for businesses without prior experience in AI or advanced data analytics. Companies may need to invest in training or hire experts to ensure a smooth implementation process.

2. Data Quality

The effectiveness of AI systems relies heavily on the quality of the data being fed into them. If the data is outdated, unstructured, or incomplete, it may result in inaccurate insights.

3. Cost

Though SAP Business AI provides significant long-term value, the initial investment required for implementation might be high, especially for smaller businesses. However, cloud-based options are available, making the technology more accessible.

Conclusion

SAP Business AI is a powerful tool that’s transforming how businesses operate. By integrating AI capabilities into SAP’s existing software, businesses can automate tasks, enhance decision-making, and gain a competitive edge. Whether you’re looking to improve efficiency, reduce costs, or offer a personalized customer experience, SAP Business AI is a game-changer that can help you achieve your goals.

As AI continues to evolve, its role in business operations will only grow. By embracing SAP Business AI, businesses can stay ahead of the curve and position themselves for future success in an increasingly digital world.

0 notes

Text

#I'm serious stop doing it#theyre scraping fanfics and other authors writing#'oh but i wanna rp with my favs' then learn to write#studios wanna use ai to put writers AND artists out of business stop feeding the fucking machine!!!!

166K notes


Text

Explore the Big Rocks in AI—from big business expansions to open-source breakthroughs—and anchor ourselves amidst rapid change. #breath #globalchange

#agentic AI#AI#Artificial Intelligence#business AI#ChatGPT#Cloud Computing#Community#DeepSeek#Digital transformation#Dopamine#Drive-Thru Automation#Ecological Overshoot#Edge Computing#Fast Food#Future of Work#global economy#Google Cloud#Innovation#McDonald’s#Meta#Mistral AI#Open Source#OpenAI#productivity#Robotics#Sustainability#Tech Trends#technology

1 note


Text

youtube

#ai revolution#ai#ai in business#ai business transformation#business automation#business#10 chatgpt business ideas to transform your future#ai for business#ai tools for business#ai solutions for businesses 2024#grow your business#best ai for business#business innovation#ai business automation#ai automation business#2024 money making revolution with ai your ultimate guide#business strategy#ai and business#business ai#ai business opportunities 2024#Youtube

0 notes

Text

Over 20k ChatGPT Prompts for Business Mastery 2024

Discover powerful ChatGPT prompts for marketing, sales, customer support & more! Elevate your business with AI in 2024

ChatGPT can automate tasks, generate creative content, and offer valuable insights, making it a valuable asset across various departments. This article explores how you can leverage ChatGPT business prompts to enhance efficiency and achieve your goals in 2024.

Why Use ChatGPT for Business?
ChatGPT Prompts for Marketing
ChatGPT Prompts for Sales
ChatGPT Prompts for Customer Support
Other Business…


0 notes

Text

*raises my hand to ask a question* what if we collectively refused to refer to AI as 'AI'? it's not artificial intelligence, artificial intelligence doesn't currently exist, it's just algorithms that use stolen input to reinforce prejudice. what if we protested by using a more accurate name? just spitballing here but what about Automated Biased Output (ABO for short)

31K notes


Text

DanDaDan - See You Tomorrow!🌸

What a wonderful rollercoaster ride of a series! It was hard to figure out what to make but I thought to reference the “goodbye or goodbye?” moment. It is too cute❤️🌸

#dandadan#dandadan fanart#artists on tumblr#procreate#art#digital watercolor#fanart#digital fanart#small business#small artist#dandadan art#dandadan momo#dandadan okarun#okarun#momo ayase#momo#momokarun#digital artwork#anti ai#anime fanart#anime#anime art#anime and manga#animecore#anime style#dandadan anime#shonen#otaku#otakucore#otakugirl

677 notes


Text

"this new generation is the dumbest and laziest ever because ai is ruining people's ability to learn!!! Why are you using ai to write emails and cover letters when you could instead LEARN this beautiful, important and valuable skill instead of growing lazy and getting ai slop to slop it for you?" oh my god. oooooh my god. oh my goooooood.

#yeah fucking cover letters and business emails a dying art of divine human communication ruined by ai#''hiring managers love me so much because I handwrite my cover letters like a human'' hiring managers actually don't care if you live or di#tumblr hate posting

1K notes


Text

AI and the fatfinger economy

I'm on a 20+ city book tour for my new novel PICKS AND SHOVELS. Catch me at NEW ZEALAND'S UNITY BOOKS in WELLINGTON TODAY (May 3). More tour dates (Pittsburgh, PDX, London, Manchester) here.

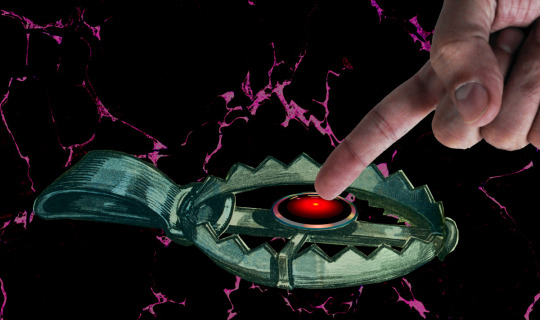

Have you noticed that all the buttons you click most frequently to invoke routine, useful functions in your device have been moved, and their former place is now taken up by a curiously butthole-esque icon that summons an unwanted AI?

https://velvetshark.com/ai-company-logos-that-look-like-buttholes

These traps for the unwary aren't accidental, but neither are they placed there solely because tech companies think that if they can trick you into using their AI, you'll be so impressed that you'll become a regular user. To understand why you find yourself repeatedly fatfingering your way into an unwanted AI interaction – and why those interactions are so hard to exit – you have to understand something about both the macro- and microeconomics of high-growth tech companies.

Growth is a heady advantage for tech companies, and not because of an ideological commitment to "growth at all costs," but because companies with growth stocks enjoy substantial, material benefits. A growth stock trades at a higher "price to earnings ratio" ("P:E") than a "mature" stock. Because of this, there are a lot of actors in the economy who will accept shares in a growing company as though they were cash (indeed, some might prefer shares to cash). This means that a growing company can outbid their rivals when acquiring other companies and/or hiring key personnel, because they can bid with shares (which they get by typing zeroes into a spreadsheet), while their rivals need cash (which they can only get by selling things or borrowing money).

The problem is that all growth ends. Google has a 90% share of the search market. Google isn't going to appreciably increase the number of searchers, short of desperate gambits like raising a billion new humans to maturity and convincing them to become Google users (this is the strategy behind Google Classroom, of course). To continue posting growth, Google needs gimmicks. For example, in 2019, Google intentionally made Search less accurate so that users would have to run multiple queries (and see multiple rounds of ads) to find the answers to their questions:

https://www.wheresyoured.at/the-men-who-killed-google/

Thanks to Google's monopoly, worsening search perversely resulted in increased earnings, and Wall Street rewarded Google by continuing to trade its stock with that prized high P:E. But for Google – and other tech giants – the most enduring and convincing growth stories come from moving into adjacent lines of business, which is why we've lived through so many hype bubbles: metaverse, web3, cryptocurrency, and now, of course, AI.

For a company like Google, the promise of these bubbles is that it will be able to double or triple in size, by dominating an entirely new sector. With that promise comes peril: growth must eventually stop ("anything that can't go on forever eventually stops"). When that happens, the company's stock instantaneously goes from being a "growth stock" to being a "mature stock" which means that its P:E is way too high. Anyone holding growth stock knows that there will come a day when those stocks will transition, in an eyeblink, from being undervalued to being grossly overvalued, and that when that day comes, there will be a mass sell-off. If you're still holding the stock when that happens, you stand to lose bigtime:
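To see why that transition is so violent, it helps to run the arithmetic. The sketch below uses invented numbers (the earnings per share and both P:E multiples are assumptions, not figures for any real company) to show what happens to a share price when the market stops applying a growth multiple to the same earnings.

```python
def implied_price(earnings_per_share: float, pe_ratio: float) -> float:
    """Share price implied by a given price-to-earnings multiple."""
    return earnings_per_share * pe_ratio


# Hypothetical figures, chosen only to illustrate the mechanism.
EPS = 5.0          # earnings per share, in dollars
GROWTH_PE = 40.0   # multiple paid while investors believe the growth story
MATURE_PE = 15.0   # multiple paid for the same earnings once growth stops

growth_price = implied_price(EPS, GROWTH_PE)
mature_price = implied_price(EPS, MATURE_PE)

# Fraction of the share price that vanishes when the multiple resets.
drop = 1 - mature_price / growth_price

print(f"growth: ${growth_price:.2f}, mature: ${mature_price:.2f}, drop: {drop:.1%}")
```

Nothing about the company's earnings changed between the two lines; only the multiple did. That is why holders of a growth stock watch the growth story so nervously.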

https://pluralistic.net/2025/03/06/privacy-last/#exceptionally-american

So everyone holding a growth stock sleeps with one eye open and their fists poised over the "sell" button. Managers of growth companies know how jittery their investors are, and they do everything they can to keep the growth story alive, as a matter of life and death.

But mass sell-offs aren't just bad for the company – they're also very bad for the company's key employees, that is, anyone who's been given stock in addition to their salary. Those people's portfolios are extremely heavy on their employer's shares, and they stand to disproportionately lose in the event of a sell-off. So they are personally motivated to keep the growth story alive.

That's where these growth-at-all-stakes maneuvers bent on capturing an adjacent sector come from. If you remember the Google Plus days, you'll remember that every Google service you interacted with had some important functionality ripped out of it and replaced with a G+-based service. To make sure that happened, Google's bosses decreed that the company's bonuses would be tied to the amount of G+ activity each division generated. In companies where bonuses can amount to 90% of your annual salary or more, this was a powerful motivator. It meant that every product team at Google was fully aligned on a project to cram G+ buttons into their product design. Whether or not these made sense for users, they always made sense for the product team, whose ability to take a fancy Christmas holiday, buy a new car, or pay their kids' private school tuition depended on getting you to use G+.

Once you understand how corporate growth stories are converted to "key performance indicators" that drive product design, many of the annoyances of digital services suddenly make a great deal of sense. You know how it's almost impossible to watch a show on a streaming video service without accidentally tapping a part of the screen that whisks you to a completely different video?

The reason you have to handle your phone like a photonegative while watching a movie – the reason every millimeter of screen real-estate has been boobytrapped with an icon that takes you somewhere else – is that streaming services believe that their customers are apt to leave when they feel like there's nothing new to watch. These bosses have made their product teams' bonuses dependent on successfully "recommending" to you a show you've never seen or expressed any interest in:

https://pluralistic.net/2022/05/15/the-fatfinger-economy/

Of course, bosses understand that their workers will be tempted to game this metric. They want to distinguish between "real" clicks that lead to interest in a new video, and fake fatfinger clicks that you instantaneously regret. The easiest way to distinguish between these two types of click is to measure how long you watch the new show before clicking away.

Of course, this is also entirely gameable: all the product manager has to do is take away the "back" button, so that an accidental click to a new video is extremely hard to cancel. The five seconds you spend figuring out how to get back to your show are enough to count as a successful recommendation, and the product team is that much closer to a luxury ski vacation next Christmas.
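The gaming described above can be sketched in a few lines. This is a toy model under stated assumptions, not any streaming service's real metric: the `Click` record, the five-second `DWELL_THRESHOLD`, and the escape times are all hypothetical values picked to mirror the scenario in the text.

```python
from dataclasses import dataclass


@dataclass
class Click:
    intended: bool          # did the viewer mean to tap the recommendation?
    seconds_watched: float  # time spent on the new video before leaving


DWELL_THRESHOLD = 5.0  # hypothetical cutoff for a "successful" recommendation


def successful_recommendations(clicks, threshold=DWELL_THRESHOLD):
    """Count clicks the metric treats as real interest.

    The metric only sees dwell time; it never sees `intended`.
    """
    return sum(1 for c in clicks if c.seconds_watched >= threshold)


def simulate(back_button: bool) -> int:
    """One genuine click plus three fatfinger clicks.

    With a back button, an accidental click is undone in about a second.
    Without one, escaping takes about five seconds (assumed values).
    """
    escape_time = 1.0 if back_button else 5.0
    clicks = [Click(True, 900.0)] + [Click(False, escape_time) for _ in range(3)]
    return successful_recommendations(clicks)


with_back = simulate(back_button=True)
without_back = simulate(back_button=False)
print(f"with back button: {with_back}, without: {without_back}")
```

Viewer interest is identical in both runs. Removing the back button changes only how long it takes to escape, and that alone is enough to move the number the bonus depends on.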

So this is why you keep invoking AI by accident, and why the AI that is so easy to invoke is so hard to dispel. Like a demon, a chatbot is much easier to summon than it is to rid yourself of.

Google is an especially grievous offender here. Familiar buttons in Gmail, Gdocs, and the Android message apps have been replaced with AI-summoning fatfinger traps. Android is filled with these pitfalls – for example, the bottom-of-screen swipe gesture used to switch between open apps now summons an AI, while ridding yourself of that AI takes multiple clicks.

This is an entirely material phenomenon. Google doesn't necessarily believe that you will ever want to use AI, but they must convince investors that their AI offerings are "getting traction." Google – like other tech companies – gets to invent metrics to prove this proposition, like "how many times did a user click on the AI button" and "how long did the user spend with the AI after clicking?" The fact that your entire "AI use" consisted of hunting for a way to get rid of the AI doesn't matter – at least, not for the purposes of maintaining Google's growth story.

Goodhart's Law holds that "When a measure becomes a target, it ceases to be a good measure." For Google and other AI narrative-pushers, every measure is designed to be a target, a line that can be made to go up, as managers and product teams align to sell the company's growth story, lest we all sell off the company's shares.

If you'd like an essay-formatted version of this post to read or share, here's a link to it on pluralistic.net, my surveillance-free, ad-free, tracker-free blog:

https://pluralistic.net/2025/05/02/kpis-off/#principal-agentic-ai-problem

Image: Pogrebnoj-Alexandroff (modified) https://commons.wikimedia.org/wiki/File:Index_finger_%3D_to_attention.JPG

CC BY-SA 3.0 https://creativecommons.org/licenses/by-sa/3.0/deed.en

--

Cryteria (modified) https://commons.wikimedia.org/wiki/File:HAL9000.svg

CC BY 3.0 https://creativecommons.org/licenses/by/3.0/deed.en

#pluralistic#kpis#incentives matter#ui#ux#video streaming#google plus#g plus#ai#artificial intelligence#growth stocks#business#big tech

617 notes


Text

yeah alr ig

#this is what domestic au's are to me personally#hlvrai#hlvrai fanart#half life vr but the ai is self aware#benrey#hlvrai benrey#gordon feetman#hlvrai gordon#gonna be somewhat honest i havent posted in a month cuz i was busy reading homestuck for the first time. merp :p#i fomo'd too close to the sun and here we are#wimb art

1K notes


Text

my action figure art of charles leclerc. artists > generative AI forever and always | bsky/twt | inspired by marsabrin

#as usual im perpetually late to any art trend happening but ive been so busy#i really wanted to do this tho also cus the ai trend of this made me so mad#charles leclerc#f1#formula 1#j.exe#j.art

502 notes


Text

(3/4) Scans a man with the head of a dog. Yep... That's Dogman... 🤖🐶

Part 1 || Part 2 || -- || Part 4

#dogman#petey the cat#lil petey#detey#robodog#rebuild robodog au#dogman ai buddy#dogman fanart#yams art#yall i was super busy this week i didnt get a chance to draw NOTHING!!!#so thats why this update took longer soz#we getting to the end tho!#still got one more comm to sketch tho so well get there when we get there !!!#dog man

597 notes


Text

this is odydio to me

#odydio#odysseus#diomedes#odysseus of ithaca#diomedes of argos#personally (i'm projecting) ody is aspec/demi. both -romantic and -sexual (and someone easily flustered by ppl he genuinely likes)#this is also how he is with pen#obviously lol#mind you ody can flirt (strategically)with people like nobody's business but if its someone he Actually likes? TO him? Gone. Done for. Dead#side note this is after dio got shot in the foot and ody got speared through the gut... chest#or smth; yk - and didn't die somehow lmao#i'd draw fading bite marks if i had the energy to (the idea came to me after line art and coloring already)#ari's art#my art#fuck ai#i put a disproportionate amount of time and effort for a shitpost but whatever i like it.#digital art#meme#ship dynamics#chat should i make a penody version? (that's not even a question tbh...)#penodydio ver coming at some point in the future (don't take that as a promise actually)#ody isn't actually all that flustered by like. sex or h*ndholding itself or anything its just the idea of being loved by someone he loves.#:9

276 notes
