toonedtoons-blog

332 posts

He/Him 🏳️‍⚧️ ● 28 ● full time gremlin artist ● En/Esp🇦🇷


Text

Hoping tumblr will roll back the idiocy but in case it doesn't:

Glaze applies an invisible-to-humans filter that interrupts style mimicry. Link

Nightshade, by the same people, poisons datasets. Link

Lastly, remember how this went when ArtStation tried to pull this?

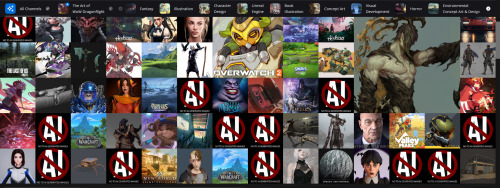

[ID: 3 images of various thumbnails from the ArtStation website. A large number of them are the same image of "AI" in a barred circle, over the text "No to AI generated images". A number of the thumbnails, on close inspection, are AI-generated images that copied this anti-AI image and incorporated it into the results. End ID.]

Just saying.

95 notes


Text

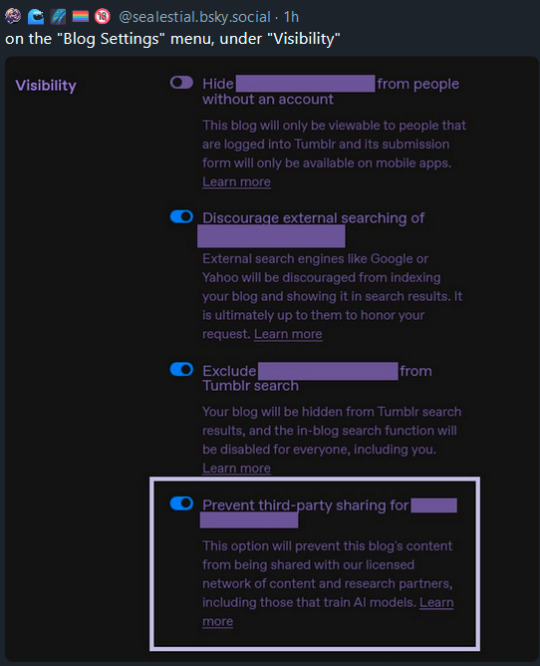

Protect your blogs from this new AI horseshit Tumblr's rolling out.

Transcription: On the 'Blog Settings' menu, under 'Visibility'

348 notes


Text

@staff

OUR CONTENT SHOULD BE OPTED OUT OF AI TRAINING BY DEFAULT!

21K notes


Text

I don't get what you're saying but it sounds like an insult so I'm gonna be sad about it

8K notes


Text

god forbid 5000 year old girls do anything

324K notes


Text

andijusttoreuptheticketandican’tgoinanywaysothere

6K notes


Text

I watched the entirety of Lego Monkie Kid in one night bc i have no self control and it's such a cool show✨

#be prepared bc this show is my new hyperfixation#this will be the only thing ill talk about for a while#my art#sona#Lego Monkie Kid#LMK#lego monkey kid#lego#sun wukong#syrenart#syrenking

49 notes


Text

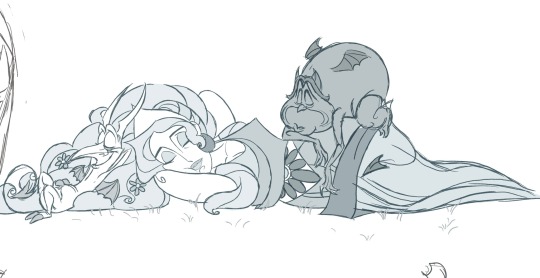

Now look who's here! Scribble & Doodle returned✨

#my art#toon#cartoon#my ocs#rubberhose#toonedtoons#toonblr#syrenking#goat#pencil#crayon#ToonedToons Lily#ToonedToons Scribble#ToonedToons Doodle#happy pride 🌈#too#bc Scribble and Doodle are dating💖✨#artsy lesbians#i love em

16 notes


Text

Craig and Lily are not thrilled to share the same space

#my art#toon#cartoon#my ocs#rubberhose#toonedtoons#toonblr#syrenking#goat#ToonedToons Lily#ToonedToons Craig#ghost#sailor#pipe

16 notes


Text

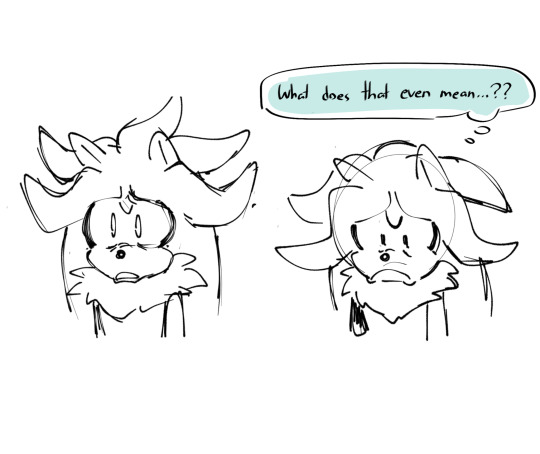

Couple of bonuses I couldn’t fit in the last batch 😭✨ I love them??

When your dog has more game than you and your minions think your wife is their mom

2K notes


Text

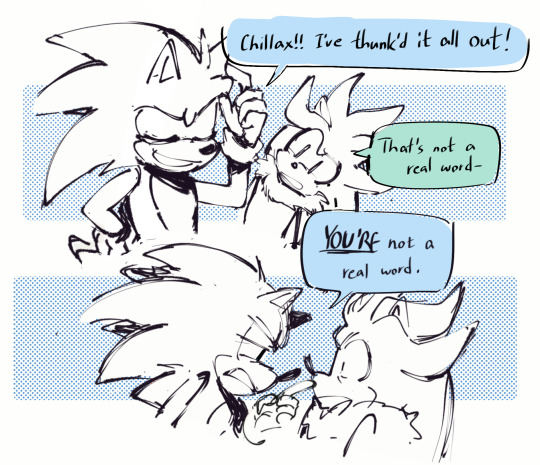

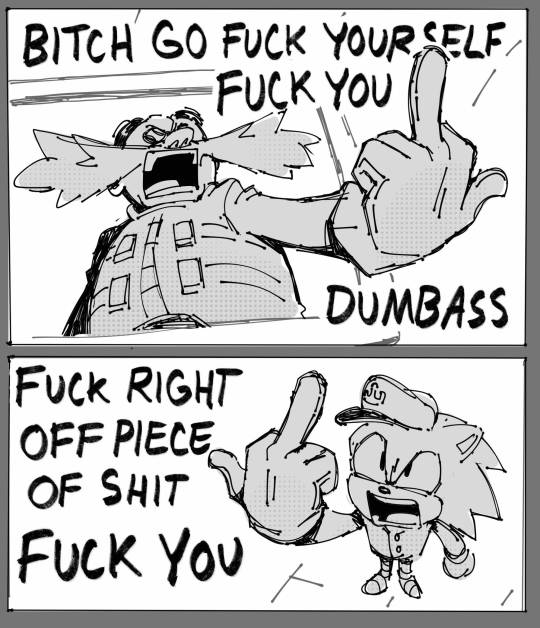

doodles i made while watching the latest @snapscube murder of sonic stream WAHOO!!!!

10K notes


Text

tumblr powered microwave. reblog to fire 1 wave at this beef

29K notes


Text

Decided to make another Sonic Sona but this time closer to irl me

#my art#sona#sonic sona#sonic#sonic the hedgehog#sth#sega#video games#digital art#in case you are wondering#its a patagonian mara#patagonian mara#mara#rodent#syrenking

25 notes


Photo

BABY GIRL

(flats by mulberry_shrike)

#i love this comic#and you will love it too#i recommend#sebastian comic#comics#pumpkin#artists on tumblr#possum#opossum

207 notes


Text

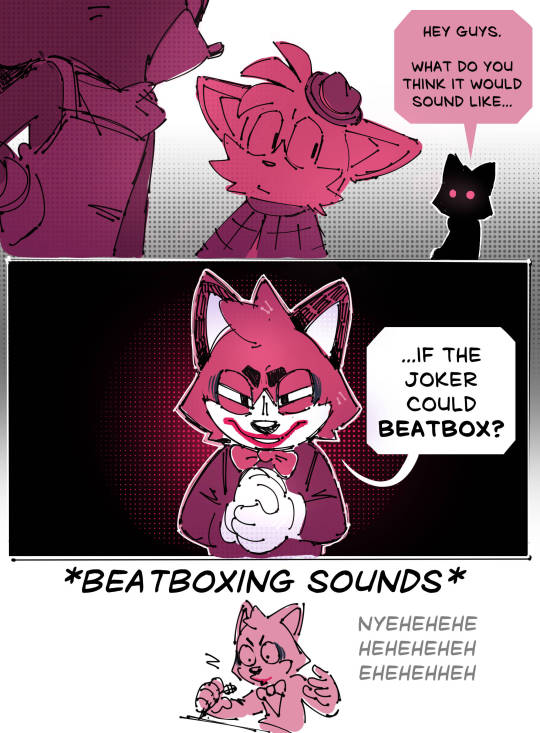

Sonic was murdered stop beatboxing!

#lmao#ok listen i know at lest 9000+ ppl made this joke#probably#but it was too funny not to draw#they knew what they were doing#my art#sonic fanart#sonic#sth#sonic the hedgehog#tails miles prower#the murder of sonic the hedgehog#sega#video games#barry the quokka#beatboxing meme

5K notes
